Formal Learning Theory

Formal learning theory is the mathematical embodiment of a normative epistemology. It deals with the question of how an agent should use observations about her environment to arrive at correct and informative conclusions. Philosophers such as Putnam, Glymour and Kelly have developed learning theory as a normative framework for scientific reasoning and inductive inference.

Terminology. Cognitive science and related fields typically use the term “learning” for the process of gaining information through observation—hence the name “learning theory”. To most cognitive scientists, the term “learning theory” suggests the empirical study of human and animal learning stemming from the behaviourist paradigm in psychology. The epithet “formal” distinguishes the subject of this entry from behaviourist learning theory. Because many developments in, and applications of, formal learning theory come from computer science, the term “computational learning theory” is also common. Philosophical terms for learning-theoretic epistemology include “logical reliability” (Kelly [1996], Glymour [1991]) and “means-ends epistemology” (Schulte [1999a]).

This entry focuses on the philosophical ideas and insights behind learning theory. It eschews theorems and definitions in favour of examples and informal arguments. Those interested in the mathematical substance of learning theory will find some references in the Bibliography, and a summary of the basic definitions in the Supplementary Document.

Philosophical characteristics. We can categorize normative epistemologies according to two criteria: (1) what are the objects of normative evaluation, and (2) what are the evaluation criteria to be employed? In learning theory, the basic object of normative evaluation is an inquirer's disposition to form beliefs given some evidence. The normative question that drives the theory is whether a given doxastic disposition serves the goals of inquiry or not. Most of learning theory examines which investigative strategies reliably lead to correct beliefs about the world.

Overview. A number of examples will illustrate how means-ends analysis can lead us to endorse some ways of drawing inductive inferences and to reject others. Then I outline some of the general insights that lie behind such examples. Finally I relate means-ends epistemology to some other traditions in inductive epistemology.

- 1. Some Basic Examples

- 2. Case Studies in Scientific Practice

- 3. The Long Run in The Short Run

- 4. The Limits of Inquiry and the Complexity of Empirical Problems

- 5. Categorical vs. Hypothetical Imperatives

- Supplementary Document: Basic Formal Definitions

- Bibliography

- Other Internet Resources

- Related Entries

1. Some Basic Examples

Learning-theoretic analysis assesses doxastic dispositions. Several terms for doxastic dispositions are in common use in philosophy; I will use “inductive strategy”, “inference method” and most frequently “inductive method” to mean the same thing. The best way to understand how learning theory evaluates inductive methods is to work through some examples. The following presentation begins with some very simple inductive problems and moves on to more complicated—and more realistic—settings.

Universal Generalization

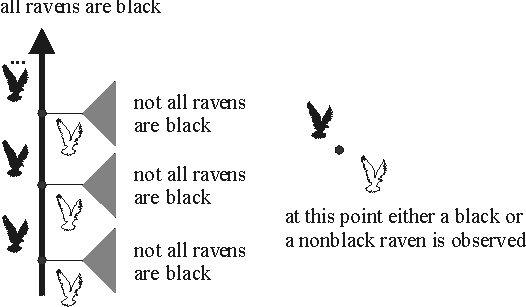

Let's revisit the classic question of whether all ravens are black. Imagine an ornithologist who tackles this problem by examining one raven after another. There is exactly one observation sequence in which only black ravens are found; all others feature at least one nonblack raven. The figure below illustrates the possible observation sequences. Dots in the figure denote points at which an observation may be made. A black bird to the left of a dot indicates that at this stage, a black raven is observed; similarly for a white bird to the right of a dot. Given a complete sequence of observations, either all observed ravens are black or not; the figure labels complete observation sequences with the statement that is true of them. The gray fan indicates that after the observation of a white raven, the claim that not all ravens are black holds on all observation sequences resulting from further observations.

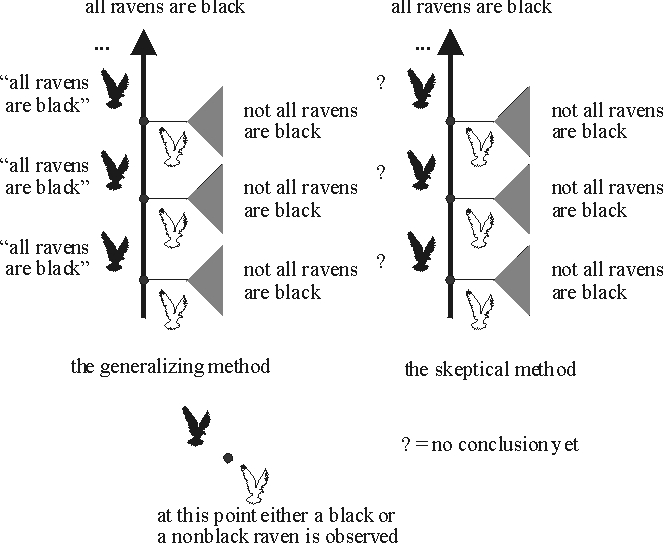

If the world is such that only black ravens are found, we would like the ornithologist to settle on this generalization. (It may be possible that some nonblack ravens remain forever hidden from sight, but even then the generalization “all ravens are black” at least gets the observations right.) If the world is such that eventually a nonblack raven is found, then we would like the ornithologist to arrive at the conclusion that not all ravens are black. This specifies a set of goals of inquiry. For any given inductive method that might represent the ornithologist's disposition to adopt conjectures in the light of the evidence, we can ask whether that method measures up to these goals or not. There are infinitely many possible methods to consider; we'll look at just two, a sceptical one and one that boldly generalizes. The bold method conjectures that all ravens are black after seeing that the first raven is black. It hangs on to this conjecture unless some nonblack raven appears. The sceptical method does not go beyond what is entailed by the evidence. So if a nonblack raven is found, the skeptical method concludes that not all ravens are black, but otherwise the method does not make a conjecture one way or another. The figure below illustrates both the generalizing and the sceptical method.

Do these methods attain the goals we set out? Consider the bold method. There are two possibilities: either all observed ravens are black, or some nonblack raven is found. In the first case, the method conjectures that all ravens are black and never abandons this conjecture. In the second case, the method concludes that not all ravens are black as soon as the first nonblack raven is found. Hence no matter how the evidence comes in, eventually the method gives the right answer as to whether all ravens are black and sticks with this answer. Learning theorists call such methods reliable because they settle on the right answer no matter what observations the world provides.

The skeptical method does not measure up so well. If a nonblack raven appears, then the method does arrive at the correct conclusion that not all ravens are black. But if all ravens are black, the skeptic never takes an “inductive leap” to adopt this generalization. So in that case, the skeptic fails to provide the right answer to the question of whether all ravens are black.

This illustrates how means-ends analysis can evaluate methods: the bold method meets the goal of reliably arriving at the right answer, whereas the skeptical method does not. Note the character of this argument against the skeptic: The problem, in this view, is not that the skeptic violates some canon of rationality, or fails to appreciate the “uniformity of nature”. The learning-theoretic analysis concedes to the skeptic that no matter how many black ravens have been observed in the past, the next one could be white. The issue is that if all observed ravens are indeed black, then the skeptic never answers the question “are all ravens black?”. Getting the right answer to that question requires generalizing from the evidence even though the generalization could be wrong.

As for the bold method, it's important to be clear on what it does and does not achieve. The method will eventually settle on the right answer—but it (or we) may never be certain that it has done so. As William James put it, “no bell tolls” when science has found the right answer. We are certain that the method will eventually settle on the right answer; but we may never be certain that the current answer is the right one. This is a subtle point. The next example illustrates this point further.

A Riddle of Induction

Nelson Goodman posed a famous puzzle about inductive inference known as the (New) Riddle of Induction ([Goodman 1983]). Our next example is inspired by his puzzle. Goodman considered generalizations about emeralds, involving the familiar colours of green and blue, as well as certain unusual ones:

Suppose that all emeralds examined before a certain time t are green ... Our evidence statements assert that emerald a is green, that emerald b is green, and so on...

Now let us introduce another predicate less familiar than “green”. It is the predicate “grue” and it applies to all things examined before t just in case they are green but to other things just in case they are blue. Then at time t we have, for each evidence statement asserting that a given emerald is green, a parallel evidence statement asserting that emerald is grue.

The question is whether we should conjecture that all emeralds are green rather than that all emeralds are grue when we obtain a sample of green emeralds examined before time t, and if so, why.

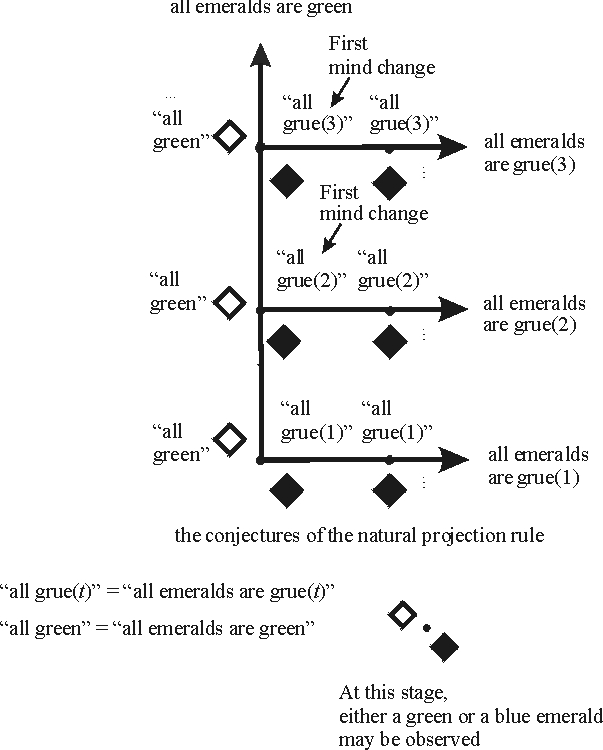

Clearly we have a family of grue predicates in this problem, corresponding to different “critical times” t; let's write grue(t) to denote these. Following Goodman, let us refer to methods as projection rules in discussing this example. A projection rule succeeds in a world just in case it settles on a generalization that is correct in that world. Thus in a world in which all examined emeralds are found to be green, we want our projection rule to converge to the proposition that all emeralds are green. If all examined emeralds are grue(t), we want our projection rule to converge to the proposition that all emeralds are grue(t). Note that this stipulation treats green and grue predicates completely on a par, with no bias towards either. As before, let us consider two rules: the “natural” projection rule which conjectures that all emeralds are green as long as only green emeralds are found, and the “gruesome” rule which keeps projecting the next grue predicate consistent with the available evidence. Expressed in the green-blue vocabulary, the gruesome projection rule conjectures that after observing some number of n green emeralds, all future ones will be blue. The figure below illustrates the possible observation sequences and the natural projection rule in this model of the New Riddle of Induction.

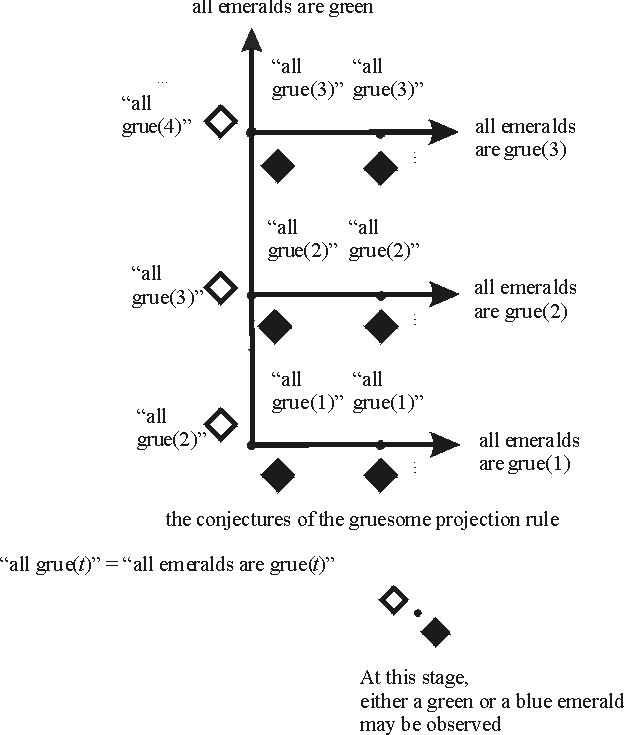

The following figure shows the gruesome projection rule.

How do these rules measure up to the goal of arriving at a true generalization? Suppose for the sake of the example that the only serious possibilities under consideration are that either all emeralds are green or that all emeralds are grue(t) for some critical time t. Then the natural projection rule settles on the correct generalization no matter what the correct generalization is. For if all emeralds are green, the natural projection rule asserts this fact from the beginning. And suppose that all emeralds are grue(t) for some critical time t. Then at time t, a blue emerald will be observed. At this point the natural projection rule settles on the conjecture that all emeralds are grue(t), which must be correct given our assumption about the possible observation sequences. Thus no matter what evidence is obtained in the course of inquiry—consistent with our background assumptions—the natural projection rule eventually settles on a correct generalization about the colour of emeralds.

The gruesome rule does not do as well. For if all emeralds are green, the rule will never conjecture this fact because it keeps projecting grue predicates. Hence there is a possible observation sequence—namely those on which all emeralds are green—on which the gruesome rule fails to converge to the right generalization. So means-ends analysis would recommend the natural projection rule over the gruesome rule. Some comments are in order.

1. As in the previous example, nothing in this argument hinges on arguments to the effect that certain possibilities are not to be taken seriously a priori. In particular, nothing in the argument says that generalizations with grue predicates are ill-formed, unlawlike, or in some other way a priori inferior to “all emeralds are green”.

2. The analysis does not depend on the vocabulary in which the evidence and generalizations are framed. For ease of exposition, I have mostly used the green-blue reference frame. However, grue-bleen speakers would agree that the aim of reliably settling on a correct generalization requires the natural projection rule rather than the gruesome one, even if they would want to express the conjectures of the natural rule in their grue-bleen language rather than the blue-green language that we have used so far. (For more on the language-invariance of means-ends analysis see Section 4 (The Limits of Inquiry and the Complexity of Empirical Problems), as well as Schulte [1999a, 1999b]).

3. Though the analysis does not depend on language, it does depend on assumptions about what the possible observation sequences are. The example as described above seems to comprise the possibilities that correspond to the colour predicates Goodman himself discussed. But means-ends analysis applies just as much to other sets of possible predicates. Schulte [1999a, 1999b] and Chart [2000] discuss a number of other versions of the Riddle of Induction, in some of which means-ends analysis favours projecting that all emeralds are grue on a sample of all green emeralds.

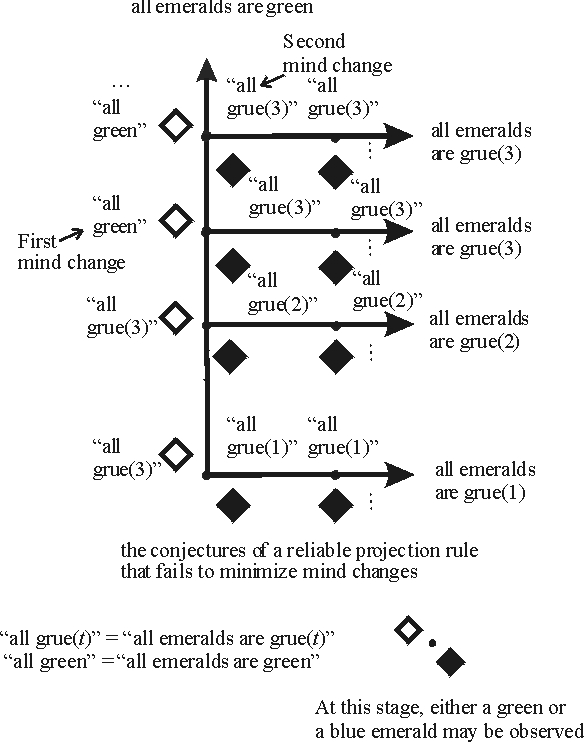

4. Even with the assumptions granted so far, there are reliable projection rules that project that all emeralds are grue(t) on a sample of all green emeralds. For example, the projection rule “conjecture that all emeralds are grue(3) until 3 green emeralds are observed; then conjecture that all emeralds are green until a blue emerald is observed” is guaranteed to eventually settle on a correct generalization just like the natural projection rule. (It's a worthwhile exercise to verify the reliability of this rule.) I will discuss criteria for further restricting the space of rules in Section 3 (The Long Run in The Short Run).

Generalizations with Exceptions

Let's return to the world of ravens. This time the ornithological community is more guarded in its generalizations concerning the colour of ravens. Two competing hypotheses are under investigation: (1) That basically all ravens are black, but there may be a finite number of exceptions to that rule, and (2) that basically all ravens are white, but there may be a finite number of exceptions to that rule. Assuming that one or the other of these hypotheses is correct, is there an inductive method that reliably settles on the right one? What makes this problem more difficult than our first two is that each hypothesis under investigation is consistent with any finite amount of evidence. If 100 white ravens and 50 black ravens are found, either the 50 black ravens or the 100 white ravens may be the exception to the rule. In terminology made familiar by Karl Popper's work, we may say that neither hypothesis is falsifiable. As a consequence, the inductive strategy from the previous two examples will not work here. This strategy was basically to adopt a “bold” universal generalization, such as “all ravens are black” or “all emeralds are green”, and to hang on to this conjecture as long as it “passes muster”. However, when rules with possible exceptions are under investigation, this strategy is unreliable. For example, suppose that an inquirer first adopts the hypothesis that “all but finitely many ravens are white”. It may be the case that from then on, only black ravens are found. But each of these apparent counterinstances can be “explained away” as an exception. If the inquirer follows the principle of hanging on to her conjecture until the evidence is logically inconsistent with the conjecture, she will never abandon her false belief that all but finitely many ravens are white, much less arrive at the correct belief that all but finitely many ravens are black.

Reliable inquiry requires a more subtle investigative strategy. Here is one (of many). Begin inquiry with either competing hypothesis, say “all but finitely many ravens are black”. Choose some cut-off ratio to represent a “clear majority”; for definiteness, let's say 70%. If the current conjecture is that all but finitely many ravens are black, change your mind to conjecture that all but finitely many ravens are white just in case over 70% of observed ravens are in fact white. Proceed likewise if the current conjecture is that all but finitely many ravens are white when over 70% of observed ravens are in fact black.

A bit of thought shows that this rule reliably identifies the correct hypothesis in the long run, no matter which of the two competing hypotheses is correct. For if all but finitely many ravens are black, eventually the nonblack exceptions to the rule will be exhausted, and an arbitrarily large majority of observed ravens will be black. Similarly if all but finitely many ravens are white.

The way in which this reliable method is sensitive to the frequency of occurrences of black resp. white ravens is reminiscent of statistical methods. This suggests considering statistical generalizations such as “the percentage of white ravens in the total population of ravens is 20%”. Hans Reichenbach held that the central aim of inductive inference were generalizations of precisely that sort. More generally, we may consider hypotheses about proportions such as “the proportion of white ravens is between 10% and 20%”. Kelly examines in detail various hypotheses of this kind and establishes when there are reliable methods for testing them. He also provides a learning-theoretic interpretation of classical statistical tests of a parameter for a probability distribution [Kelly 1996, Ch.3.4].

Generalizations with exceptions illustrate some subtle nuances in the relationship between Popperian falsificationism and the learning-theoretic idea of reliable convergence to the truth. In some settings of inquiry, notably those involving universal generalizations, a naively Popperian “conjectures-and-refutations” approach of hanging on to conjectures until the evidence falsifies them does yield a reliable inductive method. In other problems, like the current example, it does not. Generally speaking problems with unfalsifiable hypotheses require something other than the conjectures-and-refutations recipe for reliable methods (this assertion hinges on what exactly one means by “falsifiable hypothesis”; see Section 4 (The Limits of Inquiry and the Complexity of Empirical Problems) as well as [Schulte and Juhl 1996]). A Popperian might respond that such hypotheses are “unscientific” and hence it is no concern that the conjectures-and-refutations approach fails to reliably identify a correct hypothesis when unfalsifiable hypotheses are involved. But intuitively, a claim like “all but finitely many ravens are black” appears to be a respectable empirical hypothesis. More importantly than intuition, at least from the point of view of means-ends epistemology, it is possible to reliably assess the truth of such claims in the long run, even though all hypotheses under investigation are consistent with any finite amount of evidence. This constitutes a clear sense in which inquiry can test these hypotheses against empirical evidence in order to find the truth. If we allow that we would want inductive methodology to extend to problems like this one, the moral is that relying on falsifications is sometimes, but not always, the best way for inquiry to proceed.

2. Case Studies in Scientific Practice

This section provides further examples to illustrate learning-theoretic analysis. The examples in this section are more realistic and address methodological issues arising in scientific practice. The space constraints of the encyclopedia format allow only an outline of the full analysis; there are references to more detailed discussions below.

Conservation Laws in Particle Physics

One of the hallmarks of elementary particle physics is the discovery of new conservation laws that apply only in the subatomic realm [Ford 1963, Ne'eman and Kirsh 1983, Feynman 1965]. (Feynman groups one of them, the conservation of Baryon Number, with the other “great conservation laws” of energy, charge and momentum.) Simplifying somewhat, conservation principles serve to explain why certain processes involving elementary particles do not occur: the explanation is that some conservation principle was violated (cf. Omnes [1971, Ch.2] and Ford [1963]). So a goal of particle inquiry is to find a set of conservation principles, such that for every process that is possible according to the (already known) laws of physics, there is some conservation principle that rules out that process. And if a process is in fact observed to occur, then it ought to satisfy all conservation laws that we have introduced.

This constitutes an inference problem to which we may apply means-ends analysis. An inference method produces a set of conservation principles in response to reports of observed processes. Means-ends analysis asks which methods are guaranteed to settle on conservation principles that account for all observations, that is, that rule out unobserved processes and allow observed processes. [Schulte 2000] describes an inductive method that accomplishes this goal. It turns out that the conservation principles that this method would posit on the currently available evidence are empirically equivalent to the ones that physicists have introduced. That is, the predictions of physicists about which reactions are possible and which are impossible are exactly the ones that the learning-theoretic method would make.

It turns out that for some physical processes, the only way to get empirically adequate conservation principles is by positing that some hidden particles have gone undetected. It is remarkable that to find conservation principles that are consistent with what is observed, sometimes the only option is to form hypotheses about what is unobserved. It is easy to miss this phenomenon without the scrutiny that means-ends analysis requires. Extending our problem so that inference methods not only posit conservation laws but also hidden particles makes inquiry more difficult. But if we grant the particle theorist the assumption that there are only finitely many types of hidden particles, then a method does exist that is guaranteed to settle eventually on a theory that makes correct predictions about the observable phenomena, using a combination of conservation laws and hidden particles. A detailed discussion of the conservation law inference problem is in [Schulte 2000]; the relationship to other topics in the philosophy of science such as simplicity and natural kinds is examined in [Schulte 2008].

Models of Cognitive Architecture

Some philosophers of mind have argued that the mind is composed of fairly independent modules. Each module has its own “input” from other modules and sends “output” to other modules. For example, an “auditory analysis system” module might take as input a heard word and send a phonetic analysis to an “auditory input lexicon”. The idea of modular organization raises the empirical question of what mental modules there are and how they are linked to each other. A prominent tradition of research in cognitive neuroscience has attempted to develop a model of mental architecture along these lines by studying the responses of normal and abnormal subjects to various stimuli. The idea is to compare normal reactions with abnormal ones—often caused by brain damage—so as to draw inferences about which mental capacities depend on each other and how.

Glymour [1994] asked the reliabilist question whether there are inference methods that are guaranteed to eventually settle on a true theory of mental organization, given exhaustive evidence about normal and abnormal capacities and reactions. He argued that for some possible mental architectures, no amount of evidence of the stimulus-response kind can distinguish between them. Since the available evidence determines the conjectures of an inductive method, it follows that there is no guarantee that a method will settle on the true model of cognitive architecture.

In further discussion, Bub [1994] showed that if we grant certain restrictive assumptions about how mental modules are connected, then a complete set of behavioural observations would allow a neuropsychologist to ascertain the module structure of a (normal) mind. In fact, under Bub's assumptions there is a reliable method for identifying the modular structure. Glymour has also explored to what extent richer kinds of evidence would resolve underdetermination of mental architecture by behavioural evidence. (One example of richer evidence are double disassocations. An example of a double dissocation would be a pair of patients, one who has a normal capacity for understanding spoken words, but fails to understand written ones, and another who understands written words but not spoken ones.)

Some more case studies of this sort may be found in [Kelly 1996, Ch. 7.7, Harell 2000]. The main interest of such studies lies of course in what they say about the particular domain under investigation. But they also illustrate some general features of learning theory:

1. Generality. The basic notions of the theory are very general. Essentially, the theory applies whenever one has a question that prompts inquiry, a number of candidate answers, and some evidence for deciding among the answers. Thus means-ends analysis can be applied in any discipline aimed at empirical knowledge, for example physics or psychology.

2. Context Dependence. Learning theory is pure normative a priori epistemology, in the sense that it deals with standards for assessing methods in possible settings of inquiry. But the approach does not aim for universal, context-free methodological maxims. The methodological recommendations depend on contingent factors, such as the operative methodological norms, the questions under investigation, the background assumptions that the agent brings to inquiry, the observational means at her disposal, her cognitive capacities, and her epistemic aims. As a consequence, to evaluate specific methods in a given domain, as in the case studies mentioned, one has to study the details of the case in question. The means-ends analysis often rewards this study by pointing out what the crucial methodological features of a given scientific enterprise are, and by explaining precisely why and how these features are connected to the success of the enterprise in attaining its epistemic aims.

3. Trade-offs. In the perspective of means-ends epistemology, inquiry involves an ongoing struggle with hard choices, rather than the execution of a universal “scientific method”. The inquirer has to balance conflicting values, and may consider various strategies such as accepting difficulties in the short run hoping to resolve them in the long run. For example in the conservation law problem, there can be conflicts between theoretical parsimony, i.e., positing fewer conservation laws, and ontological parsimony, i.e., introducing fewer hidden particles. For another example, a particle theorist may accept positing undetected neutrinos in the hopes that they will eventually be observed as science progresses. An important learning-theoretic project is to examine when such tradeoffs arise and what the options for resolving them are. The next section deals with some aspects of this topic.

3. The Long Run in the Short Run

A longstanding criticism of convergence to the truth as an aim of inquiry is that, while fine in itself, this aim is consistent with any crazy behaviour in the short run [Salmon 1991]. For example, we saw in the New Riddle of Induction that a reliable projection rule can conjecture that the next emerald will be blue no matter how many green emeralds have been found—as long as eventually the rule projects “all emeralds are green”. One response is that if means-ends analysis takes into account other epistemic aims in addition to long-run convergence, then it can provide strong guidance for what to conjecture in the short run.

To illustrate this point, let us return to the Goodmanian Riddle of Induction. Since Plato, philosophers have considered the idea that stable true belief is better than unstable true belief, and epistemologists such as Sklar [1975] have advocated similar principles of “epistemic conservatism”. Kuhn tells us that a major reason for conservatism in paradigm debates is the cost of changing scientific beliefs [Kuhn 1970]. In this spirit, learning theorists have examined methods that minimize the number of times that they change their theories before settling on their final conjecture. Such methods are said to minimize mind changes. The New Riddle of Induction turns out to be a nice illustration of this idea. Consider the natural projection rule (conjecture that all emeralds are green on a sample of green emeralds). If all emeralds are green, this rule never changes its conjecture. And if all emeralds are grue(t) for some critical time t, then the natural projection rule abandons its conjecture “all emeralds are green” at time t—one mind change—and thereafter correctly projects “all emeralds are grue(t)”. Remarkably, rules that project grue rather than green do not do as well. For example, consider a rule that conjectures that all emeralds are grue(3) after observing one green emerald. If two more green emeralds are observed, the rule's conjecture is falsified and it must eventually change its mind, say to conjecture that all emeralds are green (suppose that green emeralds continue to be found). But then at that point, a blue emerald may appear, forcing a second mind change. This argument can be generalized to show that the aim of minimizing mind changes allows only the green predicate to be projected on a sample of all green emeralds [Schulte 1999a]. We saw in a figure above how the natural projection rule changes its mind at most once; the figure below illustrates in a typical case how an unnatural projection rule may have to change its mind twice or more.

The conservation law problem discussed in the previous section provides another illustration of how additional epistemic aims can lead to constraints in the short run. In this problem, reliability and minimizing mind changes require a particle theorist to adopt a conservation theory that rules out as many unobserved reactions as possible. In certain circumstances, the only way to attain this aim is to introduce hidden particles. Long-run reliability by itself may require that the theorist introduce hidden particles eventually; the additional goal of minimizing mind changes—stable belief—dictates exactly when this should occur [Schulte 2000].

Kelly has developed the idea of minimizing mind changes as an explanation of why we should follow Occam's Razor, understood as a preference for simpler theories [Kelly 2007a]. He suggests an application of this understanding of Occam's Razor to the discovery of causal relationships [see also Kelly 2007b, Schulte et al. 2007].

4. The Limits of Inquiry and the Complexity of Empirical Problems

After seeing a number of examples like the ones above, one begins to wonder what the pattern is. What is it about an empirical question that allows inquiry to reliably arrive at the correct answer? What general insights can we gain into how reliable methods go about testing hypotheses? Learning theorists answer these questions with characterization theorems. Characterization theorems are generally of the form “it is possible to attain this standard of empirical success in a given inductive problem if and only if the inductive problem meets the following conditions”. There are a number of standards of success in inquiry—reliable convergence to the truth, fast reliable convergence, reliable convergence with few mind changes—and hence correspondingly many characterization theorems.

A fundamental result describes the conditions under which a method can reliably find the correct hypothesis among a countably infinite or finite number H1, H2, …, Hn, …. of mutually exclusive hypotheses that jointly cover all possibilities consistent with the inquirer's background assumptions. This is possible just in case each of the hypotheses is a countable disjunction of refutable empirical claims. By “refutable” I mean that if the claim is false, the evidence combined with the inquirer's background assumptions will eventually conclusively falsify the hypothesis (see Schulte and Juhl [1996], Kelly [1996, Ch. 3.3]). For illustration, let's return to the ornithological example with two alternative hypotheses: (1) all but finitely many swans are white, and (2) all but finitely many swans are black. As we saw, it is possible in the long run to reliably settle which of these two hypotheses is correct. Hence by the characterization theorem, each of the two hypotheses must be a disjunction of refutable empirical claims. To see that this indeed is so, observe that “all but finitely many swans are white” is logically equivalent to the disjunction

“at most 1 swan is black or at most 2 swans are black … or at most n swans are black … or…”,

and similarly for “all but finitely many swans are black”. Each of the claims in the disjunction is refutable, in the sense of being eventually falsified whenever it is false. For example, take the claim that “at most 3 swans are black”. If this is false, more than 3 black swans will be found, at which point the claim is conclusively falsified.A few points will help explain the significance of characterization theorems like this one.

1. Structure of Reliable Methods. Characterization theorems tell us how the structure of reliable methods corresponds to the structure of the hypotheses under investigation. For example, the theorem mentioned establishes a connection between falsifiability and testability, but one that is more attenuated than the naïve Popperian envisions: it is not necessary that the hypotheses under test be directly falsifiable; rather, there must be ways of strengthening each hypothesis that yield a countable number of refutable “subhypotheses”. We can think of these refutable subhypotheses as different ways in which the main hypothesis may be true. (For example, one way in which “all but finitely many ravens are white” is true is if there are are at most 10 black ravens; another if there are at most 100 black ravens, etc.)

2. Import of Background Assumptions. The characterization result draws a line between the solvable and unsolvable problems. Background knowledge reduces the inductive complexity of a problem; with enough background knowledge, the problem crosses the threshold between the unsolvable and the solvable. In many domains of empirical inquiry, the pivotal background assumptions are those that make reliable inquiry feasible. (Kuhn [1970] makes similar points). For example, in the particle dynamics problem, it is the assumption that some set of linear conservation laws is empirically adequate that permits us to reliably find an empirically adequate theory of particle reactions. It seems that, conversely, in domains in which the available background assumptions do not reduce the complexity of the empirical problems enough, there is a sense that inquiry is not sufficiently constrained for steady progress. One example might be Chomsky's program of universal grammar whose inductive complexity hinges on how broad we assume the set of humanly learnable languages to be. It is an interesting question how much the complexity of an empirical investigation, as determined by the strength of the available background assumptions, connects with the practitioners' sense of progress and feasibility. In the domains considered so far, learning-theoretic complexity corresponds quite well to intuitive methodological difficulty, but more case studies are required. In any case, learning-theoretic characterization theorems help us to pinpoint the sources of inductive complexity, and thus the methodologically central assumptions and the weak spots in a given empirical enterprise.

3. Universal Measure of Inductive Complexity. There are characterization theorems for a number of standards of empirical success. The more demanding the standard, the more stringent the conditions that such standards require. It turns out that a number of natural standards of inductive success fall into a hierarchy of feasibility, in the sense that standards higher in the hierarchy are attainable if standards lower in the hierarchy are. For a trivial example, if it is possible to settle on a correct hypothesis with at most 5 mind changes, then a fortiori it is possible to succeed with 10 mind changes. For other cognitive aims the inclusion is more surprising; see Schulte [1999a]. We may think of the hierarchy of standards of empirical success as establishing a scale for inductive problems: The more difficult the problem, the less we can expect from inquiry. The hierarchy of empirical success allows us to weigh such diverse problems as Goodman's Riddle, particle dynamics and language learning on the same scale. For example, it should be clear that the characteristic condition for reliable inquiry—that each hypothesis be equivalent to a countable disjunction of refutable assertions—applies in every domain of investigation. Thus means-ends analysis uncovers common structure among seemingly disparate problems.

4. Language Invariance. Learning-theoretic characterization theorems concern what Kelly calls the “temporal entanglement” of various observation sequences [Kelly 2000] (see also [Schulte and Juhl 1996]). Ultimately they rest on entailment relations between given evidence, background assumptions and empirical claims. Since logical entailment does not depend on the language we use to frame evidence and hypotheses, the inductive complexity of an empirical problem as determined by the characterization theorems is language-invariant. Indeed, it turns out that inductive complexity can be captured in terms of point-set topology and corresponds to a scale of topological complexity that has much importance in mathematics (the Borel and finite-difference hierarchies [Kelly 1996]).

5. Categorical vs. Hypothetical Imperatives

Kant distinguished between categorical imperatives that one ought to follow regardless of one's personal aim and circumstances, and hypothetical imperatives that direct us to employ our means towards our chosen end. One way to think of learning theory is as the study of hypothetical imperatives for empirical inquiry. Many epistemologists have proposed various categorical imperatives for inductive inquiry, for example in the form of an “inductive logic” or norms of “epistemic rationality”. In principle, there are three possible relationships between hypothetical and categorical imperatives for empirical inquiry.

1. The categorical imperative will lead an inquirer to obtain his cognitive goals. In that case means-ends analysis vindicates the categorical imperative. For example, when faced with a simple universal generalization such as “all ravens are black”, we saw above that following the Popperian recipe of adopting the falsifiable generalization and sticking to it until a counter example appears leads to a reliable method.

2. The categorical imperative may prevent an inquirer from achieving his aims. In that case the categorical imperative restricts the scope of inquiry. For example, in the case of the two alternative generalizations with exceptions, the principle of maintaining a universal generalization until it is falsified leads to an unreliable method (cf. [Kelly 1996, Ch. 9.4]).

3. Some methods meet both the categorical imperative and the goals of inquiry, and others don't. Then we may take the best of both worlds and choose those methods that attain the goals of inquiry and satisfy categorical imperatives. (See the further discussion in this section.)

For a proposed norm of inquiry, we can apply means-ends analysis to ask whether the norm helps or hinders the aims of inquiry. This was the spirit of Putnam's critique of Carnap's confirmation functions [Putnam 1963]: the thrust of his essay was that Carnap's methods were not as reliable in detecting general patterns as other methods would be. More recently, learning theorists have investigated the power of Bayesian conditioning (see the entry on Bayesian epistemology). John Earman has conjectured that if there is any reliable method for a given problem, then there is a reliable method that proceeds by Bayesian updating [Earman 1992, Ch.9, Sec.6]. Cory Juhl [1997] provided a partial confirmation of Earman's conjecture: He proved that it holds when there are only two potential evidence items (e.g., “emerald is green” vs. “emerald is blue”). The general case is still open.

Epistemic conservatism is a methodological norm that has been prominent in philosophy at least since Quine's notion of “minimal mutilation” of our beliefs [1951]. One version of epistemic conservatism, as we saw above, holds that inquiry should seek stable belief. Another formulation, closer to Quine's, is the general precept that belief changes in light of new evidence should be minimal. Fairly recent work in philosophical logic has proposed a number of criteria for minimal belief change known as the AGM axioms [Gärdenfors 1988]. Learning theorists have shown that whenever there is a reliable method for investigating an empirical question, there is one that proceeds via minimal changes (as defined by the AGM postulates). The properties of reliable inquiry with minimal belief changes are investigated in [Kelly et al. 1995, Martin and Osherson 1998, Kelly 1999].

Much of computational learning theory focuses on inquirers with bounded rationality, that is, agents with cognitive limitations such as a finite memory or bounded computational capacities. Many categorical norms that do not interfere with empirical success for logically omniscient agents nonetheless limit the scope of cognitively bounded agents. For example, consider the norm of consistency: Believe that a hypothesis is false as soon as the evidence is logically inconsistent with it. The consistency principle is part of both Bayesian confirmation theory and AGM belief revision. Kelly and Schulte [1995] show that consistency prevents even agents with infinitely uncomputable cognitive powers from reliably assessing certain hypotheses. The moral is that if a theory is sufficiently complex, agents who are not logically omniscient may be unable to determine immediately whether a given piece of evidence is consistent with the theory, and need to collect more data to detect the inconsistency. But the consistency principle—and a fortiori, Bayesian updating and AGM belief revision—rule out this kind of scientific strategy.

More reflection on this and other philosophical issues in means-ends epistemology can be found in sources such as [Glymour 1991], [Kelly 1996, Chs. 2,3], [Glymour and Kelly 1992], [Kelly et al. 1997], [Schulte and Juhl 1996], [Glymour 1994], [Bub 1994]. Of particular interest in the philosophy of science may be learning-theoretic models that accommodate historicist and relativist conceptions of inquiry, chiefly by expanding the notion of an inductive method so that methods may actively select paradigms for inquiry; for more details on this topic, see [Kelly 2000, Kelly 1996, Ch.13]. Booklength introductions to the mathematics of learning theory are [Kelly 1996, Martin and Osherson 1998, Jain et al. 1999]. “Induction, Algorithmic Learning Theory and Philosophy” is a recent collection of writings on learning theory [Friend et al. 2007]. Contributions include introductory papers (Harizanov, Schulte), mathematical advances (Martin, Sharma, Stephan, Kalantari), philosophical reflections on the strengths and implications of learning theory (Glymour, Larvor, Friend), applications of the theory to philosophical problems (Kelly), and a discussion of learning-theoretic thinking in the history of philosophy (Goethe).

Supplementary Document: Basic Formal Definitions

Bibliography

- Bub, J. [1994]: ‘Testing Models of Cognition Through the Analysis of Brain-Damaged Performance’, British Journal for the Philosophy of Science, 45, pp.837-55.

- Chart, D. [2000]: ‘Schulte and Goodman's Riddle’, British Journal for the Philosophy of Science, 51, pp.837-55.

- Earman, J. [1992]: Bayes or Bust?. Cambridge, Mass.: MIT Press.

- Feynman, R. [1965; 19th ed. 1990]: The Character of Physical Law, Cambridge, Mass.: MIT Press.

- Induction, Algorithmic Learning Theory, and Philosophy, eds. M. Friend, N. Goethe and V. Harazinov (2007). Dordrecht: Springer, pp. 111-144.

- Ford, K. [1963]: The World of Elementary Particles, New York: Blaisdell Publishing.

- Gärdenfors, P. [1988]: Knowledge In Flux: modeling the dynamics of epistemic states, Cambridge, Mass.: MIT Press.

- Glymour, C. [1991]: ‘The Hierarchies of Knowledge and the Mathematics of Discovery’, Minds and Machines 1, pp. 75-95.

- ––– [1994]: ‘On the Methods of Cognitive Neuropsychology’, British Journal for the Philosophy of Science 45, pp. 815-35.

- Glymour, C. and Kelly, K. [1992]: ‘Thoroughly Modern Meno’, in: Inference, Explanation and Other Frustrations, ed. John Earman, University of California Press.

- Goodman, N. [1983]. Fact, Fiction and Forecast. Cambridge, MA: Harvard University Press.

- Harrell, M. [2000]: Chaos and Reliable Knowledge, Ph.D. Thesis, University of California at San Diego.

- Jain, S. et al [1999]: Systems That Learn 2nd ed. Cambridge, MA: MIT Press.

- James, W. [1982]: ‘The Will To Believe’, in Pragmatism, ed. H.S. Thayer. Indianapolis: Hackett.

- Juhl, C. [1997]: ‘Objectively Reliable Subjective Probabilities’, Synthese 109, pp. 293-309.

- Kelly, K. [1996]: The Logic of Reliable Inquiry, Oxford: Oxford University Press.

- ––– [1999]: ‘ Iterated Belief Revision, Reliability, and Inductive Amnesia’, Erkenntnis 50: 11-58.

- ––– [2000]: ‘The Logic of Success’, British Journal for the Philosophy of Science 51:4, 639-660.

- ––– [2007a]: ‘How Simplicity Helps You Find the Truth Without Pointing at it’, in Induction, Algorithmic Learning Theory, and Philosophy, eds. M. Friend, N. Goethe and V. Harazinov. Dordrecht: Springer, pp. 111-144.

- ––– [2007b]: ‘Ockham's Razor, Truth, and Information’, in n Handbook of the Philosophy of Information eds. J. van Behthem and P. Adriaans, to appear.

- Kelly, K., and Schulte, O. [1995]: ‘The Computable Testability of Theories Making Uncomputable Predictions’, Erkenntnis 43, pp. 29-66.

- Kelly, K., Schulte, O. and Juhl, C. [1997]: ‘Learning Theory and the Philosophy of Science’, Philosophy of Science 64, 245-67.

- Kelly, K., Schulte, O. and Hendricks, V. [1995]: ‘Reliable Belief Revision’. Proceedings of the XII Joint International Congress for Logic, Methodology and the Philosophy of Science.

- Kuhn, T. [1970]: The Structure of Scientific Revolutions. Chicago: University of Chicago Press.

- Martin, E. and Osherson, D. [1998]: Elements of Scientific Inquiry. Cambridge, MA: MIT Press.

- Ne'eman, Y. and Kirsh, Y. [1983]: The Particle Hunters, Cambridge: Cambridge University Press.

- Omnes, R. [1971]: Introduction to Particle Physics, London, New York: Wiley Interscience.

- Putnam, H. [1963]: “Degree of Confirmation and Inductive Logic”, in The Philosophy of Rudolf Carnap, ed. P.a. Schilpp, La Salle, Ill: Open Court.

- Quine, W.: [1951]: ‘Two Dogmas of Empiricism’, Philosophical Review 60, 20-43.

- Salmon, W. [1991]: ‘Hans Reichenbach's Vindication of Induction,’ Erkenntnis 35:99-122.

- Schulte, O. [1999a]: ‘Means-Ends Epistemology’, The British Journal for the Philosophy of Science, 50, 1-31.

- ––– [1999b]: ‘The Logic of Reliable and Efficient Inquiry’, Journal of Philosophical Logic 28, 399-438.

- ––– [2000]: ‘Inferring Conservation Principles in Particle Physics: A Case Study in the Problem of Induction’, The British Journal for the Philosophy of Science, 51: 771-806.

- ––– [2007]: ‘Mind Change Optimal Learning of Bayes Net Structure’, O. Schulte, W. Luo and R. Greiner (2007). In Proceedings of the 20th Annual Conference on Learning Theory (COLT), San Diego, CA, June 12-15.

- ––– [2008]: ‘The Co-Discovery of Conservation Laws and Particle Families’, O. Schulte (2008). In Studies in History and Philosophy of Modern Physics, Vol 39:2, pp 288-314.

- Schulte, O., and Juhl, C. [1996]: ‘Topology as Epistemology’, The Monist 79, 1:141-147.

- Sklar, L. [1975]: ‘Methodological Conservatism’, Philosophical Review LXXXIV, pp. 374-400.

Other Internet Resources

- A number of Kevin Kelly's papers on Occam's Razor are posted on his website.

- Learning Theory in Computer Science

- Inductive Logic Website on Formal Learning Theory and Belief Revision

Related Entries

confirmation | epistemology: Bayesian | induction: new problem of | induction: problem of | James, William | logic: inductive | Peirce, Charles Sanders | Popper, Karl