Action-based Theories of Perception

Action is a means of acquiring perceptual information about the environment. Turning around, for example, alters your spatial relations to surrounding objects and, hence, which of their properties you visually perceive. Moving your hand over an object’s surface enables you to feel its shape, temperature, and texture. Sniffing and walking around a room enables you to track down the source of an unpleasant smell. Active or passive movements of the body can also generate useful sources of perceptual information (Gibson 1966, 1979). The pattern of optic flow in the retinal image produced by forward locomotion, for example, contains information about the direction in which you are heading, while motion parallax is a “cue” used by the visual system to estimate the relative distances of objects in your field of view. In these uncontroversial ways and others, perception is instrumentally dependent on action. According to an explanatory framework that Susan Hurley (1998) dubs the “Input-Output Picture”, the dependence of perception on action is purely instrumental:

Movement can alter sensory inputs and so result in different perceptions… changes in output are merely a means to changes in input, on which perception depends directly. (1998: 342)

The action-based theories of perception, reviewed in this entry, challenge the Input-Output Picture. They maintain that perception can also depend in a noninstrumental or constitutive way on action (or, more generally, on capacities for object-directed motor control). This position has taken many different forms in the history of philosophy and psychology. Most action-based theories of perception in the last 300 years, however, have looked to action in order to explain how vision, in particular, acquires either all or some of its spatial representational content. Accordingly, these are the theories on which we shall focus here.

We begin in Section 1 by discussing George Berkeley’s An Essay Towards a New Theory of Vision (1709), the historical locus classicus of action-based theories of perception, and one of the most influential texts on vision ever written. Berkeley argues that the basic or “proper” deliverance of vision is not an arrangement of voluminous objects in three-dimensional space, but rather a two-dimensional manifold of light and color. We then turn to a discussion of Lotze, Helmholtz, and the local sign doctrine. The “local signs” were felt cues that tell the mind what sort of spatial content to assign to visual experience. For Lotze, these cues were “inflowing” kinaesthetic feelings that result from actually moving the eyes, while, for Helmholtz, they were “outflowing” motor commands sent to move the eyes.

In Section 2, we discuss sensorimotor contingency theories, which became prominent in the 20th century. These views maintain that an ability to predict the sensory consequences of self-initiated actions is necessary for perception. Among the motivations for this family of theories is the problem of visual direction constancy—why do objects appear to be stationary even though the locations on the retina to which they reflect light change with every eye movement?—as well as experiments on adaptation to optical rearrangement devices (ORDs) and sensory substitution.

Section 3 examines two other important 20th century theories. According to what we shall call the motor component theory, efference copies generated in the oculomotor system and/or proprioceptive feedback from eye-movements are used together with incoming sensory inputs to determine the spatial attributes of perceived objects. Efferent readiness theories, by contrast, look to the particular ways in which perceptual states prepare the observer to move and act in relation to the environment. The modest readiness theory, as we shall call it, claims that the way an object’s spatial attributes are represented in visual experience can be modulated by one or another form of covert action planning. The bold readiness theory argues for the stronger claim that perception just is covert readiness for action.

In Section 4, we move to the disposition theory, most influentially articulated by Gareth Evans (1982, 1985), but more recently defended by Rick Grush (2000, 2007). Evans’ theory is, at its core, very similar to the bold efferent readiness theory. There are some notable differences, though. Evans’ account is more finely articulated in some philosophical respects. It also does not posit a reduction of perception to behavioral dispositions, but rather posits that certain complicated relations between perceptual input and behavioral output provide spatial content. Grush proposes a very specific theory that is like Evans’ in that it does not posit a reduction, but unlike Evans’ view, does not put behavioral dispositions and sensory input on an undifferentiated footing.

- 1. Early Action-Based Theories

- 2. Sensorimotor Contingency Theories

- 3. Motor Component and Efferent Readiness Theories

- 4. Skill/Disposition Theories

- Bibliography

- Academic Tools

- Other Internet Resources

- Related Entries

1. Early Action-Based Theories

Two doctrines dominate philosophical and psychological discussions of the relationship between action and space perception from the 18th to the early 20th century. The first is that the immediate objects of sight are two-dimensional manifolds of light and color, lacking perceptible extension in depth. The second is that vision must be “educated” by the sense of touch—understood as including both kinaesthesis and proprioceptive position sense—if the former is to acquire its apparent outward, three-dimensional spatial significance. The relevant learning process is associationist: normal vision results when tangible ideas of distance (derived from experiences of unimpeded movement) and solid shape (derived from experiences of contact and differential resistance) are elicited by the visible ideas of light and color with which they have been habitually associated. The widespread acceptance of both doctrines owes much to the influence of George Berkeley’s New Theory of Vision (1709).

The Berkeleyan approach looks to action in order to explain how depth is “added” to a phenomenally two-dimensional visual field. The spatial ordering of the visual field itself, however, is taken to be immediately given in experience (Hatfield & Epstein 1979; Falkenstein 1994; but see Grush 2007). Starting in the 19th century, a number of theorists, including Johann Steinbuch (1770–1818), Hermann Lotze (1817–1881), Hermann von Helmholtz (1821–1894), Wilhelm Wundt (1832–1920), and Ernst Mach (1838–1916), argued that all abilities for visual spatial localization, including representation of up/down and left/right direction within the two-dimensional visual field, depend on motor factors, in particular, gaze-directing movements of the eye (Hatfield 1990: chaps. 4–5). This idea is the basis of the “local sign” doctrine, which we examine in Section 1.3.

1.1 Movement and Touch in the New Theory of Vision

There are three principal respects in which motor action is central to Berkeley’s project in the New Theory of Vision (1709). First, Berkeley argues that visual experiences convey information about three-dimensional space only to the extent that they enable perceivers to anticipate the tactile consequences of actions directed at surrounding objects. In §45 of the New Theory, Berkeley writes:

…I say, neither distance, nor things placed at a distance are themselves, or their ideas, truly perceived by sight…. whoever will look narrowly into his own thoughts, and examine what he means by saying, he sees this or that thing at a distance, will agree with me, that what he sees only suggests to his understanding, that after having passed a certain distance, to be measured by the motion of his body, which is perceivable by touch, he shall come to perceive such and such tangible ideas which have been usually connected with such and such visible ideas.

And later in the Treatise Concerning the Principles of Human Knowledge (1734: §44):

…in strict truth the ideas of sight, when we apprehend by them distance and things placed at a distance, do not suggest or mark out to us things actually existing at a distance, but only admonish us what ideas of touch will be imprinted in our minds at such and such distances of time, and in consequence of such or such actions. …[V]isible ideas are the language whereby the governing spirit … informs us what tangible ideas he is about to imprint upon us, in case we excite this or that motion in our own bodies.

The view Berkeley defends in these passages has recognizable antecedents in Locke’s Essay Concerning Human Understanding (1690: Book II, Chap. 9, §§8–10). There Locke maintained that the immediate objects of sight are “flat” or lack outward depth; that sight must be coordinated with touch in order to mediate judgments concerning the disposition of objects in three-dimensional space; and that visible ideas “excite” in the mind movement-based ideas of distance through an associative process akin to that whereby words suggest their meanings: the process is

performed so constantly, and so quick, that we take that for the perception of our sensation, which is an idea formed by our judgment.

A long line of philosophers—including Condillac (1754), Reid (1785), Smith (1811), Mill (1842, 1843), Bain (1855, 1868), and Dewey (1891)—accepted this view of the relation between sight and touch.

The second respect in which action plays a prominent role in the New Theory is teleological. Sight not only derives its three-dimensional spatial significance from bodily movement, its purpose is to help us engage in such movement adaptively:

…the proper objects of vision constitute an universal language of the Author of nature, whereby we are instructed how to regulate our actions, in order to attain those things, that are necessary to the preservation and well-being of our bodies, as also to avoid whatever may be hurtful and destructive of them. It is by their information that we are principally guided in all the transactions and concerns of life. (1709: §147)

Although Berkeley does not explain how vision instructs us in regulating our actions, the answer is reasonably clear from the preceding account of depth perception: seeing an object or scene can elicit tangible ideas that directly motivate self-preserving action. The tactual ideas associated with a rapidly looming ball in the visual field, for example, can directly motivate the subject to shift position defensively or to catch it before being struck.

The third respect in which action is central to the New Theory is psychological. Tangible ideas of distance are elicited not only by (1) visual or “pictorial” depth cues such as an object’s degree of blurriness (objects appear increasingly “confused” as they approach the observer), but also by kinaesthetic, muscular sensations resulting from (2) changes in the vergence angle of the eyes (1709: §16) and (3) accommodation of the lens (1709: §27). Like many contemporary theories of spatial vision, the Berkeleyan account thus acknowledges an important role for oculomotor factors in our perception of distance.

1.2 Objections to Berkeley’s Theory

Critics of Berkeley’s theory in the 18th and 19th centuries (for reviews, see Bain 1868; Smith 2000; Atherton 2005) principally targeted three claims:

- (a)

- that “distance, of it self and immediately, cannot be seen” (Berkeley 1709: §1);

- (b)

- that sight depends on learned connections with experiences of movement and touch for its outward, spatial significance; and

- (c)

- that “habitual connexion”, i.e., association, would by itself enable touch to “educate” vision in the manner required by (b).

Most philosophers and perceptual psychologists now concur with Armstrong’s (1960) assessment that the “single point” argument for claim (a)—“distance being a line directed end-wise to the eye, it projects only one point in the fund of the eye, which point remains invariably the same, whether the distance be longer or shorter” (Berkeley 1709: §2)—conflates spatial properties of the retinal image with those of the objects of sight (also see Condillac 1746/2001: 102; Abbott 1864: chap. 1). In contrast with claim (a), we should note, both contemporary “ecological” and information-processing approaches in vision science assume that the spatial representational contents of visual experience are robustly three-dimensional: vision is no less a distance sense than touch.

Three sorts of objections targeting claim (b) were prominent. First, it is not evident to introspection that visual experiences reliably elicit tactile and kinaesthetic images as Berkeley suggests. As Bain succinctly formulates this objection:

In perceiving distance, we are not conscious of tactual feelings or locomotive reminiscences; what we see is a visible quality, and nothing more. (1868: 194)

Second, sight is often the refractory party when conflicts with touch arise. Consider the experience of seeing a three-dimensional scene in a painting: “I know, without any doubt”, writes Condillac,

that it is painted on a flat surface; I have touched it, and yet this knowledge, repeated experience, and all the judgments I can make do not prevent me from seeing convex figures. Why does this appearance persist? (1746/2001: I, §6, 3)

Last, vision in many animals does not need tutoring by touch before it is able to guide spatially directed movement and action. Cases in which non-human neonates respond adaptively to the distal sources of visual stimulation

imply that external objects are seen to be so…. They prove, at least, the possibility that the opening of the eye may be at once followed by the perception of external objects as such, or, in other words, by the perception or sensation of outness. (Bailey 1842: 30; for replies, see Smith 1811: 385–390)

Here it would be in principle possible for a proponent of Berkeley’s position to maintain that, at least for such animals, the connection between visual ideas and ideas of touch is innate and not learned (see Stewart 1829: 241–243; Mill 1842: 106–110). While this would abandon Berkeley’s empiricism and associationism, it would maintain the claim that vision provides depth information only because its ideas are connected to tangible ideas.

Regarding claim (c), many critics denied that the supposed “habitual connexion” between vision and touch actually obtains. Suppose that the novice perceiver sees a remote tree at time₁ and walks in its direction until she makes contact with it at time₂. The problem is that the perceiver’s initial visual experience of the tree at time₁ is not temporally contiguous with the locomotion-based experience of the tree’s distance completed at time₂. Indeed, at time₂ the former experience no longer exists. “The association required”, Abbott thus writes,

cannot take place, for the simple reason that the ideas to be associated cannot co-exist. We cannot at one and the same moment be looking at an object five, ten, fifty yards off, and be achieving our last step towards it. (1864: 24)

Finally, findings from perceptual psychology have more recently been leveled against the view that vision is educated by touch. Numerous studies of how subjects respond to lens-, mirror-, and prism-induced distortions of visual experience (Gibson 1933; Harris 1965, 1980; Hay et al. 1965; Rock & Harris 1967) indicate that not only is sight resistant to correction from touch, it will often dominate or “capture” the latter when intermodal conflicts arise. This point will be discussed in greater depth in Section 3 below.

1.3 Lotze, Helmholtz, and the Local Sign Doctrine

Like Berkeley, Hermann Lotze (1817–1881) and Hermann von Helmholtz (1821–1894) affirm the role played by active movement and touch in the genesis of three-dimensional visuospatial awareness:

…there can be no possible sense in speaking of any other truth of our perceptions other than practical truth. Our perceptions of things cannot be anything other than symbols, naturally given signs for things, which we have learned to use in order to control our motions and actions. When we have learned to read those signs in the proper manner, we are in a condition to use them to orient our actions such that they achieve their intended effect; that is to say, that new sensations arise in an expected manner (Helmholtz 2005 [1924]: 19, our emphasis).

Lotze and Helmholtz go further than Berkeley in maintaining that bodily movement also plays a role in the construction of the two-dimensional visual field, taken for granted by most previous accounts of vision (but for exceptions, see Hatfield 1990: ch. 4).

The problem of two-dimensional spatial localization, as Lotze and Helmholtz understand it, is the problem of assigning a unique, eye-relative (or “oculocentric”) direction to every point in the visual field. Lotze’s commitment to mind-body dualism precluded looking to any physical or anatomical spatial ordering in the visual system for a solution to this problem (Lotze 1887 [1879]: §§276–77). Rather, Lotze maintains that every discrete visual impression is attended by a “special extra sensation” whose phenomenal character varies as a function of its origin on the retina. Collectively, these extra sensations or “local signs” constitute a “system of graduated, qualitative tokens” (1887 [1879]: §283) that bridge the gap between the spatial structure of the nonconscious retinal image and the spatial structure represented in conscious visual awareness.

What sort of sensation, however, is suited to play the individuating role attributed to a local sign? Lotze appeals to kinaesthetic sensations that accompany gaze-directing movements of the eyes (1887 [1879]: §§284–86). If P is the location on the retina stimulated by a distal point d and F is the fovea, then PF is the arc that must be traversed in order to align the direction of gaze with d. As the eye moves through arc PF, its changing position gives rise to a corresponding series of kinaesthetic sensations p₀, p₁, p₂, …, pₙ, and it is this consciously experienced series, unique to P, that constitutes P’s local sign. By contrast, if Q were rather the location on the retina stimulated by d, then the eye’s foveating movement through arc QF would elicit a different series of kinaesthetic sensations k₀, k₁, k₂, …, kₙ unique to Q.

Importantly, Lotze allows that retinal stimulation need not trigger an overt movement of the eye. Rather, even in the absence of the corresponding saccade, stimulating point P will elicit kinaesthetic sensation p₀, and this sensation will, in turn, recall from memory the rest of the series with which it is associated, p₁, …, pₙ.

Accordingly, though there is no movement of the eye, there arises the recollection of something, greater or smaller, that must be accomplished if the stimuli at P and Q, which arouse only a weak sensation, are to arouse sensations of the highest degree of strength and clearness. (1887 [1879]: §285)

In this way, Lotze accounts for our ability to perceive multiple locations in the visual field at the same time.

Helmholtz (2005 [1924]) fully accepts the need for local signs in two-dimensional spatial localization, but makes an important modification to Lotze’s theory. In particular, he maintains that local signs are not feelings that originate in the adjustment of the ocular musculature, i.e., a form of afferent, sensory “inflow” from the eyes, but rather feelings of innervation (Innervationsgefühlen) produced by the effort of the will (Willensanstrengung) to move the eyes, i.e., a form of efferent, motor “outflow”. In general, to each perceptible location in the visual field there is an associated readiness or impulse of the will (Willensimpuls) to move the eyes in the manner required in order to fixate it. As Ernst Mach later formulates Helmholtz’s view: “The will to perform movements of the eyes, or the innervation to the act, is itself the space sensation” (Mach 1897 [1886]: 59).

Helmholtz favored a motor outflow version of the local sign doctrine for two main reasons. First, he was skeptical that afferent registrations of eye position are precise enough to play the role assigned to them by Lotze’s theory (2005 [1924]: 47–49). Recent research has shown that proprioceptive inflow from ocular muscular stretch receptors does in fact play a quantifiable role in estimating direction of gaze, but efferent outflow is normally the more heavily weighted source of information (Bridgeman 2010; see Section 2.1.1 below).

Second, attempting a saccade when the eyes are paralyzed or otherwise immobilized results in an apparent shift of the visual scene in the same direction (Helmholtz 2005 [1924]: 205–06; Mach 1897 [1886]: 59–60). This finding would make sense if efferent signals to the eye are used to determine the direction of gaze: the visual system “infers” that perceived objects are moving because they would have to be in order for retinal stimulation to remain constant despite the change in eye direction predicted on the basis of motor outflow.

Although Helmholtz was primarily concerned to show that “our judgments as to the direction of the visual axis are simply the result of the effort of will involved in trying to alter the adjustment of the eyes” (2005 [1924]: 205–06), the evidence he adduces also implies that efferent signals play a critical role in our perception of stability in the world across saccadic eye movements. In the next section, we trace the influence of this idea on theories in the 20th century.

2. Sensorimotor Contingency Theories

Action-based accounts of perception proliferated in the 20th century. In this section, we focus on the reafference theory of Richard Held and the more recent enactive approach of J. Kevin O’Regan and Alva Noë. Central to both accounts is the view that perception and perceptually guided action depend on abilities to anticipate the sensory effects of bodily movements. To be a perceiver it is necessary to have knowledge of what O’Regan and Noë call the laws of sensorimotor contingency: “the structure of the rules governing the sensory changes produced by various motor actions” (O’Regan & Noë 2001: 941).

We start with two sources of motivation for theories that make knowledge of sensorimotor contingencies necessary and/or sufficient for spatially contentful perceptual experience. The first is the idea that the visual system exploits efference copy, i.e., a copy of the outflowing saccade command signal, in order to distinguish changes in visual stimulation caused by movement of the eye from those caused by object movement. The second is a long line of experiments, first performed by Stratton and Helmholtz in the 19th century, on how subjects adapt to lens-, mirror-, and prism-induced modifications of visual experience. We follow up with objections to these theories and alternatives.

2.1 Efference and Visual Direction Constancy

The problem of visual direction constancy (VDC) is the problem of how we perceive a stable world despite variations in visual stimulation caused by saccadic eye movements. When we execute a saccade, the image of the world projected on the retina rapidly displaces in the direction of rotation, yet the directions of perceived objects appear constant. Such perceptual stability is crucial for ordinary visuomotor interaction with the surrounding environment. As Bruce Bridgeman writes,

Perceiving a stable visual world establishes the platform on which all other visual function rests, making possible judgments about the positions and motions of the self and of other objects. (2010: 94)

The problem of VDC divides into two questions (MacKay 1973): First, which sources of information are used to determine whether the observer-relative position of an object has changed between fixations? Second, how are relevant sources of information used by the visual system to achieve this function?

The historically most influential answer to the first question is that the visual system has access to a copy of the efferent or “outflowing” saccade command signal. These signals carry information specifying the direction and magnitude of eye movements that can be used to compensate for or “cancel out” corresponding displacements of the retinal image.

In the 19th century, Bell (1823), Purkyně (1825), Hering (1861 [1990]), Helmholtz (2005 [1924]), and Mach (1897 [1886]) deployed the efference copy theory to illuminate a variety of experimental findings, e.g., the tendency in subjects with partially paralyzed eye muscles to perceive movement of the visual scene when attempting to execute a saccade (for a review, see Bridgeman 2010). The theory’s most influential formulation, however, came from Erich von Holst and Horst Mittelstädt in the early 1950s. According to what they dubbed the “reafference principle” (von Holst & Mittelstädt 1950; von Holst 1954), the visual system exploits a copy of motor directives to the eye in order to distinguish between exafferent visual stimulation, caused by changes in the world, and reafferent visual stimulation, caused by changes in the direction of gaze:

Let us imagine an active CNS sending out orders, or “commands” … to the effectors and receiving signals from its sensory organs. Signals that predictably come when nothing occurs in the environment are necessarily a result of its own activity, i.e., are reafferences. All signals that come when no commands are given are exafferences and signify changes in the environment or in the state of the organism caused by external forces. … The difference between that which is to be expected as the result of a command and the totality of what is reported by the sensory organs is the proportion of exafference…. It is only this difference to which there are compensatory reflexes; only this difference determines, for example during a moving glance at movable objects, the actually perceived direction of visual objects. This, then, is the solution that we propose, which we have termed the “reafference principle”: distinction of reafference and exafference by a comparison of the total afference with the system’s state—the “command”. (Mittelstädt 1971; translated by Bridgeman et al. 1994: 251).

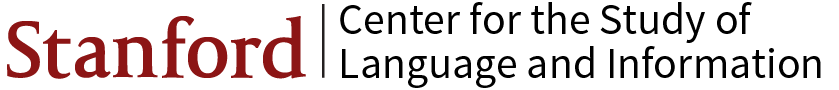

It is only when the displacement of the retinal image differs from the displacement predicted on the basis of the efference copy, i.e., when the latter fails to “cancel out” the former, that subjects experience a change of some sort in the perceived scene (see Figure 1). The relevant upshot is that VDC has an essential motoric component: the apparent stability of an object’s eye-relative position in the world depends on the perceiver’s ability to integrate incoming retinal signals with extraretinal information concerning the magnitude and direction of impending eye movements.

![Three parts to the image, the first, labeled 'a.', has at the top an apple and at the bottom an eyeball looking straight up with an arrow going from the bottom of the eyeball to the apple; the arrow is labeled EC=0 and the eyeball, A=0. The second, labeled 'b.', is like the first except the eyeball is looking slightly clockwise of straight up and the arrow follows the line of sight; a second arrow goes from the apple to the bottom of the eyeball; the eyeball is labeled A=-10; the first arrow, EC=+10 (these two equations line up horizontally with A=0 and EC=0 respectively from the first part). The third part is not labeled and consists of a circle divided into quarters with a '+' in the top quarter and a '-' in the bottom quarter. The equation 'EC=+10' in part b has an arrow going from it to the '+' quadrant of the circle. The equation 'A=-10' from part b has an arrow going from it to the '-' quadrant of the circle. From the right side of the circle is an arrow that points to an equation 'EA=EC+A=0'; the arrow is labeled 'Comparator'.](fig1.png)

Figure 1: (a) When the eye is stationary, both efference copy (EC) and afference produced by displacement of the retinal image (A) are absent. (b) Turning the eye 10° to the right results in a corresponding shift of the retinal image. Since the magnitude of the eye movement specified by EC and the magnitude of retinal image displacement cancel out, no movement in the world or “exafference” (EA) is registered.
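The comparator in Figure 1 can be illustrated with a minimal sketch. It assumes the figure's sign convention (EA = EC + A, with angles in degrees); the function names, the detection threshold, and the passive-displacement case are hypothetical additions for illustration and are not part of von Holst and Mittelstädt's own formulation.

```python
def exafference(efference_copy_deg: float, afference_deg: float) -> float:
    """Toy comparator following Figure 1: EA = EC + A."""
    return efference_copy_deg + afference_deg


def scene_appears_to_move(efference_copy_deg: float,
                          afference_deg: float,
                          threshold_deg: float = 0.0) -> bool:
    """A change in the scene is registered only when EC fails to cancel A.

    threshold_deg is an illustrative assumption, not a value from the text.
    """
    return abs(exafference(efference_copy_deg, afference_deg)) > threshold_deg


# Illustrative cases as (EC, A) pairs in degrees.
cases = {
    "eye stationary": (0.0, 0.0),
    "saccade 10 deg right": (10.0, -10.0),
    "attempted saccade, eye paralyzed": (10.0, 0.0),   # no retinal shift occurs
    "image shift without a command": (0.0, -10.0),     # hypothetical case
}

for label, (ec, a) in cases.items():
    print(f"{label}: EA = {exafference(ec, a)}, "
          f"motion registered = {scene_appears_to_move(ec, a)}")
```

On this toy model, the paralyzed-eye case yields a nonzero EA with the same sign as the attempted movement, which is one way of reading the apparent scene shift reported by Helmholtz and Mach.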

2.1.1 Objections to the Efference Copy Theory

The foregoing solution to the problem of VDC faces challenges on multiple empirical fronts. First, there is evidence that proprioceptive signals from the extraocular muscles make a non-trivial contribution to estimates of eye position, although the gain of efference copy is approximately 2.4 times greater (Bridgeman & Stark 1991). Second, in the autokinetic effect, a fixed luminous dot appears to wander when the field of view is dark and thus completely unstructured. This finding is inconsistent with theories according to which retinotopic location and efference copy are the sole determinants of eye-relative direction. Third, the hypothesized compensation process, if psychologically real, would be highly inaccurate since subjects fail to notice displacements of the visual world up to 30% of total saccade magnitude (Bridgeman et al. 1975), and the locations of flashed stimuli are systematically misperceived when presented near the time of a saccade (Deubel 2004). Last, when image displacements concurrent with a saccade are large, but just below threshold for detection, visually attended objects appear to “jump” or “jiggle” against a stable background (Brune & Lücking 1969; Bridgeman 1981). Efference copy theories, however, as Bridgeman observes,

do not allow the possibility that parts of the image can move relative to one another—the visual world is conceived as a monolithic object. The observation would seem to eliminate all efference copy and related theories in a single stroke. (2010: 102)

2.1.2 Alternatives to the Efference Copy Theory

The reference object theory of Deubel and Bridgeman denies that efference copy is used to “cancel out” displacements of the retinal image caused by saccadic eye-movements (Deubel et al. 2002; Deubel 2004; Bridgeman 2010). According to this theory, visual attention shifts to the saccade target and a small number of other objects in its vicinity (perhaps four or fewer) before eye movement is initiated. Although little visual scene information is preserved from one fixation to the next, the features of these objects as well as precise information about their presaccadic, eye-relative locations are preserved. After the eye has landed, the visual system searches for the target or one of its neighbors within a limited spatial region around the landing site. If the postsaccadic localization of this “landmark” object succeeds, the world appears to be stable. If this object is not found, however, displacement is perceived. On this approach, efference copy does not directly support VDC. Rather, the role of efference copy is to maintain an estimate of the direction of gaze, which can be integrated with incoming retinal stimulation to determine the static, observer-relative locations of perceived objects. For a recent, philosophically oriented discussion, see Wu 2014.
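The landmark search just described can be pictured with a rough sketch. Everything specific here is an assumption made for illustration: objects are matched by a simple feature label, positions are eye-relative coordinates in degrees, the search radius is arbitrary, and each stored landmark is sought near the position it should occupy if the saccade target has landed on the fovea. None of these details comes from Deubel and Bridgeman.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    feature: str   # stand-in for the stored visual features of an object
    x: float       # eye-relative horizontal position, degrees
    y: float       # eye-relative vertical position, degrees


def world_appears_stable(target: Item,
                         neighbors: list[Item],
                         postsaccadic_items: list[Item],
                         search_radius_deg: float = 2.0) -> bool:
    """Return True if the target or one of its neighbors is re-found.

    Expected post-saccadic positions are computed relative to the target,
    on the assumption that the target now sits at the fovea (0, 0).
    """
    for landmark in [target, *neighbors]:
        expected_x = landmark.x - target.x
        expected_y = landmark.y - target.y
        for item in postsaccadic_items:
            if item.feature != landmark.feature:
                continue
            offset = math.hypot(item.x - expected_x, item.y - expected_y)
            if offset <= search_radius_deg:
                return True   # landmark localized: no displacement perceived
    return False              # no landmark found: displacement perceived


# Before the saccade: target 10 deg to the right, one neighbor above it.
target = Item("red apple", 10.0, 0.0)
neighbors = [Item("green leaf", 10.0, 3.0)]

after_normal_saccade = [Item("red apple", 0.5, 0.0), Item("green leaf", 0.4, 3.1)]
after_large_jump = [Item("red apple", 6.0, 0.0), Item("green leaf", 6.0, 3.0)]

print(world_appears_stable(target, neighbors, after_normal_saccade))
print(world_appears_stable(target, neighbors, after_large_jump))
```

Note that efference copy plays no role in the stability decision in this sketch, which reflects the theory's claim that efference copy does not directly support VDC.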

A related alternative to the von Holst-Mittelstädt model is the spatial remapping theory of Duhamel and Colby (Duhamel et al. 1992; Colby et al. 1995). The role of saccade efference copy on this theory is to initiate an updating of the eye-relative locations of a small number of attended or otherwise salient objects. When post-saccadic object locations are sufficiently congruent with the updated map, stability is perceived. Single-cell and fMRI studies show that neurons at various stages in the visual-processing hierarchy exploit a copy of the saccade command signal in order to shift their receptive field locations in the direction of an impending eye movement milliseconds before its initiation (Merriam & Colby 2005; Merriam et al. 2007). Efference copy indicating an impending saccade 20° to the right, in effect, tells relevant neurons:

If you are now firing in response to an item x in your receptive field, then stop firing at x. If there is currently an item y in the region of oculocentric visual space that would be coincident with your receptive field after a saccade 20° to the right, then start firing at y.

Such putative updating responses are strongest in parietal cortex and at higher levels in visual processing (V3A and hV4) and weakest at lower levels (V1 and V2).
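The updating rule quoted above can be made concrete with a small sketch. It is only an illustration of the rule's logic, not a model drawn from the cited studies: the circular receptive field, the `Neuron` class, and the function names are all hypothetical, and positions are simplified to (azimuth, elevation) pairs in degrees of eye-relative space.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (azimuth, elevation) in degrees, eye-relative


@dataclass(frozen=True)
class Neuron:
    rf_center: Point  # hypothetical receptive-field center
    rf_radius: float  # hypothetical receptive-field radius, in degrees


def _inside(center: Point, radius: float, item: Point) -> bool:
    """True if the item lies within a circular field around center."""
    dx, dy = item[0] - center[0], item[1] - center[1]
    return dx * dx + dy * dy <= radius * radius


def classical_response(neuron: Neuron, items: Dict[str, Point]) -> List[str]:
    """Items currently inside the neuron's receptive field."""
    return sorted(n for n, p in items.items()
                  if _inside(neuron.rf_center, neuron.rf_radius, p))


def remapped_response(neuron: Neuron, items: Dict[str, Point],
                      saccade: Point) -> List[str]:
    """Items in the region that will coincide with the receptive field
    once the impending saccade has been executed."""
    future_center = (neuron.rf_center[0] + saccade[0],
                     neuron.rf_center[1] + saccade[1])
    return sorted(n for n, p in items.items()
                  if _inside(future_center, neuron.rf_radius, p))


def after_saccade(items: Dict[str, Point], saccade: Point) -> Dict[str, Point]:
    """Eye-relative positions of stationary items after the eye has moved."""
    return {n: (p[0] - saccade[0], p[1] - saccade[1]) for n, p in items.items()}


neuron = Neuron(rf_center=(0.0, 0.0), rf_radius=2.0)
scene = {"x": (0.5, 0.0), "y": (20.0, 1.0)}
saccade = (20.0, 0.0)  # 20 degrees to the right
```

In this toy scene the neuron responds to `x` before remapping and to `y` once the efference copy arrives. If the scene is stable, its remapped response matches what it finds in its receptive field after the eye lands, which is the congruence condition the theory associates with perceived stability.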

2.2 The Reafference Theory

In 1961, Richard Held proposed that the reafference principle could be used to construct a general “neural model” of perception and perceptually guided action. Held’s reafference theory goes beyond the account of von Holst and Mittelstädt in three main ways. First, information about movement parameters specified by efference copy is not simply summated with reafferent stimulation. Rather, subjects are assumed to acquire knowledge of the specific sensory consequences of different bodily movements. This knowledge is contained in a hypothesized “correlational storage” area and used to determine whether or not the reafferent stimulations that result from a given type of action match those that resulted in the past (Held 1961: 30). Second, the reafference theory is not limited to eye movements, but extends to “any motor system that can be a source of reafferent visual stimulation”. Third, knowledge of the way reafferent stimulation depends on self-produced movement is used for purposes of sensorimotor control: planning and controlling object-directed actions in the present depends on access to information concerning the visual consequences of performing such actions in the past.

2.2.1 Oh, Inverted World: Early Experiments on Optical Rearrangement

The reafference theory was also significantly motivated by studies of how subjects adapt to devices that alter the relationship between the distal visual world and sensory input by rotating, reversing, or laterally displacing the retinal image (for helpful guides to the literature on this topic, see Rock 1966; Howard & Templeton 1966; Epstein 1967; and Welch 1978). We will refer to these as optical rearrangement devices (or ORDs for short).

2.2.2 Stratton

The American psychologist George Stratton conducted two experiments using a lens system that effected a 180° rotation of the retinal image in his right eye (his left eye was kept covered). The first experiment involved wearing the device for 21.5 hours over the course of three days (1896); the second experiment involved wearing the device for 81.5 hours over the course of 8 days (1897a,b). In both cases, Stratton kept a detailed diary of how his visual, imaginative, and proprioceptive experiences underwent modification as a consequence of inverted vision. In 1899, he performed a lesser-known but equally dramatic three-day experiment, using a pair of mirrors that presented his eyes with a view of his own body from a position in space directly above his head (Figure 2).

![[a line drawing of a man standing and looking up at about a 45 degree angle. Above him is a horizontal line labeled 'A' at the left end and 'B' at the right end. A second line goes from the 'B' end at about a -60 degree angle to a point approximately level with the man's neck; that point is labeled 'D'. To the right of D is the dotted line drawing of a horizontal man, head closest to D; feet labeled 'E'. From the first man's eyes is a short line, labeled 'C', going up at about a 45 degree angle approximately in the direction of the point labeled 'B'.]](fig2.png)

Figure 2: The apparatus designed by Stratton (1899). Stratton saw a view of his own body from the perspective of mirror AB, worn above his head.

In both experiments, Stratton reported a brief period of initial visual confusion and breakdown in visuomotor skill:

Almost all movements performed under the direct guidance of sight were laborious and embarrassed. Inappropriate movements were constantly made; for instance, in order to move my hand from a place in the visual field to some other place which I had selected, the muscular contraction which would have accomplished this if the normal visual arrangement had existed, now carried my hand to an entirely different place. (1897a: 344)

Further bewilderment was caused by a “swinging” of the visual field with head movements as well as jarring discord between where things were respectively seen and imagined to be:

Objects lying at the moment outside the visual field (things at the side of the observer, for example) were at first mentally represented as they would have appeared in normal vision…. The actual present perception remained in this way entirely isolated and out of harmony with the larger whole made up by [imaginative] representation. (1896: 615)

After a seemingly short period of adjustment, Stratton reported a gradual re-establishment of harmony between the deliverances of sight and touch. By the end of his experiments on inverted vision, not only was it possible for Stratton to perform many visuomotor actions fluently and without error, but the visual world also often appeared to him to be “right side up” (1897a: 358) and “in normal position” (1896: 616). Just what this might mean will be discussed below in Section 2.2.6.

2.2.3 Helmholtz

Another influential experiment was performed by Helmholtz (2005 [1924]: §29), who practiced reaching to targets while wearing prisms that displaced the retinal image 16–18° to the left. The initial tendency was to reach too far in the direction of lateral displacement. After a number of trials, however, reaching gradually regained its former level of accuracy. Helmholtz made two additional discoveries. First, there was an intermanual transfer effect: visuomotor adaptation to prisms extended to his non-exposed hand. Second, immediately after removing the prisms from his eyes, errors were made in the opposite direction, i.e., when reaching for a target, Helmholtz now moved his hand too far to the right. This negative after-effect is now standardly used as a measure of adaptation to lateral displacement.
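The qualitative pattern Helmholtz reports (an initial error in the direction of displacement, gradual recovery, and a negative after-effect once the prisms are removed) can be reproduced by a very simple error-correction scheme. The sketch below is a toy illustration only. The proportional update rule, the learning rate, the trial count, and the 17° shift (chosen from within the 16–18° range) are assumptions made for the example, not parameters reported by Helmholtz, and intermanual transfer is not modeled.

```python
def prism_adaptation(shift_deg=-17.0, rate=0.3, trials=20):
    """Toy model of reaching while wearing laterally displacing prisms.

    shift_deg: prismatic displacement (negative = leftward), assumed value.
    rate:      hypothetical fraction of each observed error that is corrected.
    Returns the reaching error on each prism trial and the error on the
    first trial after the prisms are removed (the negative after-effect).
    """
    correction = 0.0  # learned offset added to the seen target direction
    errors = []
    for _ in range(trials):
        # The hand is aimed at the displaced target plus the learned offset.
        error = shift_deg + correction
        errors.append(error)
        # Assumed rule: correct a fixed fraction of the observed error.
        correction -= rate * error
    # With prisms off the shift is zero, so only the learned offset remains.
    after_effect = 0.0 + correction
    return errors, after_effect


errors, after_effect = prism_adaptation()
```

On the first trial the reach errs by the full displacement to the left. The error then shrinks toward zero, and on removal of the prisms the residual correction produces an error of nearly the same magnitude to the right.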

Stratton and Helmholtz’s findings catalyzed a research tradition on ORD adaptation that experienced its heyday in the 1960s and 1970s. Two questions dominated studies conducted during this period. First, what are the necessary and sufficient conditions for adaptation to occur? In particular, which sources of information do subjects use when adapting to the various perceptual and sensorimotor discrepancies caused by ORDs? Second, just what happens when subjects adapt to perceptual rearrangement? What is the “end product” of the relevant form of perceptual learning?

2.2.4 Held’s Experiments On Prism Adaptation

Held’s answer to the first question is that subjects must receive visual feedback from active movement, i.e., reafferent visual stimulation, in order for significant and stable adaptation to occur (Held & Hein 1958; Held 1961; Held & Bossom 1961). Evidence for this conclusion came from experiments in which participants wore laterally displacing prisms during both active and passive movement conditions. In the active movement condition, the subject moved her visible hand back and forth along a fixed arc in synchrony with a metronome. In the passive movement condition, the subject’s hand was passively moved at the same rate by the experimenters. Although the overall pattern of visual stimulation was identical in both conditions, adaptation was reported only when subjects engaged in self-movement. Reafferent stimulation, Held and Bossom concluded on the basis of this and other studies,

is the source of ordered contact with the environment which is responsible for both the stability, under typical conditions, and the adaptability, to certain atypical conditions, of visual-spatial performance. (1961: 37)

Held’s answer to the second question is couched in terms of the reafference theory: subjects adapt to ORDs only when they have relearned the sensory consequences of their bodily movements. In the case of adaptation to lateral displacement, they must relearn the way retinal stimulations vary as a function of reaching for targets at different body-relative locations. This relearning is assumed to involve an updating of the mappings from motor output to reafferent sensory feedback in the hypothesized “correlational storage” module mentioned above.

2.2.5 Challenges to the Reafference Theory

The reafference theory faces a number of objections. First, the theory is an extension of von Holst and Mittelstädt’s reafference principle, according to which efference copy is used to cancel out shifts of the retinal image caused by saccadic eye movements. The latter was specifically intended to explain why we do not experience object displacement in the world whenever we change the direction of gaze. There is nothing, at first blush, however, that is analogous to the putative need for “cancellation” or “discounting” of the retinal image in the case of prism adaptation. As Welch puts it, “There is no visual position constancy here, so why should a model originally devised to explain this constancy be appropriate?” (1978: 16).

Second, the reafference theory fails to explain just how stored efference-reafference correlations are supposed to explain visuomotor control. How does having the ability to anticipate the retinal stimulations that would be caused by a certain type of hand movement enable one actually to perform the movement in question? Without elaboration, all that Held’s theory seems to explain is why subjects are surprised when reafferences generated by their movements are non-standard (Rock 1966: 117).

Third, adaptation to ORDs, contrary to the theory, is not restricted to situations in which subjects receive reafferent visual feedback, but may also take place when subjects receive feedback generated by passive effector or whole-body movement (Singer & Day 1966; Templeton et al. 1966; Fishkin 1969). Adaptation is even possible in the complete absence of motor action (Howard et al. 1965; Kravitz & Wallach 1966).

In general, the extent to which adaptation occurs depends not on the availability of reafferent stimulation, but rather on the presence of either of two related kinds of information concerning “the presence and nature of the optical rearrangement” (Welch 1978: 24). Following Welch, we shall refer to this view as the “information hypothesis”.

One source of information present in a displaced visual array concerns the veridical directions of objects from the observer (Rock 1966: chaps. 2–4). Normally, when engaging in forward locomotion, the perceived radial direction of an object straight ahead of the observer’s body remains constant while the perceived radial directions of objects to either side undergo constant change. This pattern also obtains when the observer wears prisms that displace the retinal image to the side. Hence, “an object seen through prisms which retains the same radial direction as we approach must be seen to be moving in toward the sagittal plane” (Rock 1966: 105). On Rock’s view, at least some forms of adaptation to ORDs can be explained by our ability to detect and exploit such invariant sources of spatial information in locomotion-generated patterns of optic flow.

Another related source of information for adaptation derives from the conflict between seen and proprioceptively experienced limb position (Wallach 1968; Ularik & Canon 1971). When this discrepancy is made conspicuous, proponents of the information hypothesis have found that passively moved (Melamed et al. 1973), involuntarily moved (Mather & Lackner 1975), and even immobile subjects (Kravitz & Wallach 1966) exhibit significant adaptation. Although self-produced bodily movement is not necessary for adaptation to occur, it provides subjects with especially salient information about the discrepancy between sight and touch (Moulden 1971): subjects are able proprioceptively to determine the location of a moving limb much more accurately than a stationary or passively moved limb. It is the enhancement of the visual-proprioceptive conflict rather than reafferent visual stimulation, on this interpretation, that explains why active movement yields more adaptation than passive movement in Held’s experiments.

2.2.6 The Proprioceptive Change Theory

A final objection to the reafference theory concerns the end product of adaptation to ORDs. According to the theory, adaptation occurs when subjects learn new rules of sensorimotor dependence that govern how actions affect sensory inputs. There is a significant body of evidence, however, that much, if not all, adaptation rather occurs at the proprioceptive level. Stratton, summarizing the results of his experiment on mirror-based optical rearrangement, wrote:

…the principle stated in an earlier paper—that in the end we would feel a thing to be wherever we constantly saw it—can be justified in a wider sense than I then intended it to be taken…. We may now, I think, safely include differences of distance as well, and assert that the spatial coincidence of touch and sight does not require that an object in a given tactual position should appear visually in any particular direction or at any particular distance. In whatever place the tactual impression’s visual counterpart regularly appeared, this would eventually seem the only appropriate place for it to appear in. If we were always to see our bodies a hundred yards away, we would probably also feel them there. (1899: 498, our emphasis)

On this interpretation, the plasticity revealed by ORDs is primarily proprioceptive and kinaesthetic, rather than visual. Stratton’s world came to look “right side up” (1897b: 469) after adaptation to the inverted retinal image because things were felt where they were visually perceived to be, not because his “entire visual field flipped over” (Kuhn 2012 [1962]: 112). This is clear from the absence of a visual negative aftereffect when Stratton finally removed his inverting lenses at the end of his eight-day experiment:

The visual arrangement was immediately recognized as the old one of pre-experimental days; yet the reversal of everything from the order to which I had grown accustomed during the past week, gave the scene a surprising, bewildering air which lasted for several hours. It was hardly the feeling, though, that things were upside down. (1897b: 470)

Moreover, Stratton reported changes in kinaesthesis during the course of the experiment consistent with the alleged proprioceptive shift:

when one was most at home in the unusual experience the head seemed to be moving in the very opposite direction from that which the motor sensations themselves would suggest. (1907: 156)

On this view, the end product of adaptation to an ORD is a recalibration of proprioceptive position sense at one or more points of articulation in the body (see the entry on bodily awareness). As you practice reaching for a target while wearing laterally displacing prisms, for example, the muscle spindles, joint receptors, and Golgi tendon organs in your shoulder and arm continue to generate the same patterns of action potentials as before, but the proprioceptive and kinaesthetic meaning assigned to them by their “consumers” in the brain undergoes change: whereas before they signified that your arm was moving along one path through the seven-dimensional space of possible arm configurations (the human arm has seven degrees of freedom: three at the wrist, one at the elbow, and three at the shoulder), they gradually come to signify that it is moving along a different path in that kinematic space, namely, the one consistent with the prismatically distorted visual feedback you are receiving. Similar recalibrations are possible with respect to sources of information for head and eye position. After adapting to laterally displacing prisms, signals from receptors in your neck that previously signified the alignment of your head and torso, for example, may come to signify that your head is turned slightly to the side. For discussion, see Harris 1965, 1980 and Welch 1978: chap. 3.

2.3 The Enactive Approach

The enactive approach defended by J. Kevin O’Regan and Alva Noë (O’Regan & Noë 2001; Noë 2004, 2005, 2010; O’Regan 2011) is best viewed as an extension of the reafference theory. According to the enactive approach, spatially contentful, world-presenting perceptual experience depends on implicit knowledge of the way sensory stimulations vary as a function of bodily movement. “Over the course of life”, O’Regan and Noë write,

a person will have encountered myriad visual attributes and visual stimuli, and each of these will have particular sets of sensorimotor contingencies associated with it. Each such set will have been recorded and will be latent, potentially available for recall: the brain thus has mastery of all these sensorimotor sets. (2001: 945)

To see an object o as having the location and shape properties it has, it is necessary (1) to receive sensory stimulations from o and (2) to use those stimulations in order to retrieve the set of sensorimotor contingencies associated with o on the basis of past encounters. In this sense, seeing is a “two-step” process (Noë 2004: 164). It is important to emphasize, however, that the enactive approach distances itself from the idea that vision is functionally dedicated, in whole or in part, to the guidance of spatially directed actions: “Our claim”, Noë writes,

is that seeing depends on an appreciation of the sensory effects of movement (not, as it were, on the practical significance of sensation)…. Actionism is not committed to the general claim that seeing is a matter of knowing how to act in respect of or in relation to the things we see. (Noë 2010: 249)

The enactive approach also has strong affinities with the sense-data tradition. According to Noë, an object’s visually apparent shape is the shape of the 2D patch that would occlude the object on a plane perpendicular to the line of sight, i.e., the shape of the patch projected by the object on the frontal plane in accordance with the laws of linear perspective. Noë calls this the object’s “perspectival shape” (P-shape). An object’s visually apparent size, in turn, is the size of the patch projected by the object on the frontal plane. Noë calls this the object’s “perspectival size” (P-size). Appearances are “perceptually basic” (Noë 2004: 81) because in order to see an object’s actual spatial properties it is necessary both to see its 2D P-properties and to understand how they would vary (undergo transformation) with changes in one’s point of view. This conception of what it is to perceive objects as voluminous space-occupiers is closely akin to views defended by Russell (1918), Broad (1923), and Price (1950). It is also worth mentioning that the enactive approach has strong affinities to views in the phenomenological tradition that are beyond the scope of this entry (but for discussion, see Thompson 2005; Hickerson 2007; and the entry on phenomenology).
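The geometry behind P-size and P-shape can be illustrated with a short calculation. The sketch below is not taken from Noë. It assumes a frontal plane at unit distance from the eye and, for the tilted disk, a weak-perspective approximation in which the disk is small relative to its viewing distance. The function names and parameters are hypothetical.

```python
import math


def p_size(actual_size, distance, plane_distance=1.0):
    """Size of the patch a frontoparallel extent projects onto a frontal
    plane at plane_distance from the eye (similar triangles)."""
    return actual_size * plane_distance / distance


def p_shape_of_tilted_disk(radius, distance, tilt_deg, plane_distance=1.0):
    """Semi-axes (major, minor) of the elliptical patch projected by a
    circular disk tilted by tilt_deg away from the frontal plane.

    Assumes weak perspective: foreshortening scales the extent along the
    tilt direction by cos(tilt), and the whole patch by plane_distance/distance.
    """
    major = p_size(radius, distance, plane_distance)
    minor = major * math.cos(math.radians(tilt_deg))
    return major, minor


# A plate of radius 0.1 m viewed from 2 m, tilted 60 degrees from frontal.
major, minor = p_shape_of_tilted_disk(radius=0.1, distance=2.0, tilt_deg=60.0)
```

On these assumptions the P-shape of the tilted plate is an ellipse whose minor axis is about half its major axis, and doubling the viewing distance halves its P-size. Both values change systematically with the perceiver’s movement, which is the kind of variation the enactive approach takes perceivers to understand implicitly.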

2.3.1 Some questions about P-properties

Assessment of the enactive approach is complicated by questions concerning the nature of P-properties. First, there is a tendency on the part of its main proponents to speak interchangeably of consciously perceived P-properties (or ‘looks’), on the one hand, and proximal sensory stimulations, on the other. Noë, e.g., writes:

The sensorimotor profile of an object is the way its appearance changes as you move with respect to it (strictly speaking, it is the way sensory stimulation varies as you move). (2004: 78, our emphasis)

It is far from clear how these different characterizations are to be related, however (Briscoe 2008; Kiverstein 2010). P-properties, according to the enactive approach, are distal, relational properties of the objects we see: “If there is a mind/world divide… then P-properties are on the world side of the divide” (2004: 83). Moreover, Noë clearly assumes that they are visible: “P-properties are themselves objects of sight, that is, things that we see” (2004: 83). Sensory stimulations, by contrast, are proximal, subpersonal vehicles of visual perception. They are not objects of sight. Quite different, if not incommensurable, notions of sensorimotor profile and, so, of sensorimotor knowledge would thus seem to be implied by the two characterizations.

There is also an ambiguity with the “-motor” in “sensorimotor knowledge”. On the one hand, Noë argues that perception is active in the sense that perceivers require knowledge of the proximal, sensory effects of movement. E.g., in order to see an object’s shape and size it is necessary to have certain anticipations concerning the way in which retinal stimulations caused by the object would vary as a function of one’s point of view. “This perspectival aspect”, Noë writes, “marks the place of action in perception” (Noë 2004: 34). On this conception there is no commitment to the view that vision is for the guidance of action, that vision constitutively has something to do with adapting animal behavior to the spatial layout of the distal environment (Noë 2004: 18–19). Rather, vision is active in the sense that it involves learned expectations concerning the ways in which sensory stimulations would be “perturbed” by possible bodily movements (Noë 2010: 247–248).

On the other hand, Noë adverts to a more world-engaging conception of sensorimotor knowledge in order to explain our visual experience of P-properties:

variation in looks reveals how things are. But what of the looks themselves, what of P-properties? Do we see them by seeing how they look? This would threaten to lead to infinite regress…. (2004: 87)

The solution to the regress problem is that seeing an object’s P-properties involves a kind of practical know-how. A tilted plate, e.g., looks elliptical and small from here because one has to move one’s hand in a certain way in order to indicate its shape and size in the visual field (2004: 89). Whereas seeing an object’s intrinsic properties, according to the enactive approach, requires knowledge of the way P-properties would vary as a function of movement, seeing P-properties involves knowing how one would need to move one’s body in relation to what one sees in order to achieve a certain goal.

While this seems to suggest that the first kind of sensorimotor knowledge is asymmetrically dependent on the latter, Noë maintains that just the opposite is the case. “I do not wish to argue”, he writes,

that to experience something as having a certain [P-shape] is to experience it as affording a range of possible movements; rather I want to suggest that one experiences it as having a certain P-shape, and so as affording possible movements, only insofar as, in encountering it, one is able to draw on one’s appreciation of the sensorimotor patterns mediating (or that might be mediating) your relation to it. (2004: 90)

The problem with this suggestion, however, is that it leads the enactive approach directly back to the explanatory regress that the second, affordance-detecting kind of sensorimotor knowledge was introduced to avoid.

2.3.2 Evidence for the Enactive Approach

The enactive approach rests its case on three main sources of empirical support. The first derives from experiments with optical rearrangement devices (ORDs), discussed in Section 2.2 above. Hurley and Noë (2003) maintain that adaptation to ORDs only occurs when subjects relearn the systematic patterns of interdependence between active movement and reafferent visual stimulation. Moreover, contrary to the proprioceptive change theory of Stratton, Harris, and Rock, Hurley and Noë argue that the end product of adaptation to inversion and reversal of the retinal image is genuinely visual in nature: during the final stage of adaptation, visual experience “rights itself”.

In Section 2.2 above, we reviewed empirical evidence against the view that active movement and corresponding reafferent stimulation are necessary for adaptation to ORDs. Accordingly, we will focus here on Hurley and Noë’s objections to the proprioceptive-change theory. According to the latter, “what is actually modified [by the adaptation process] is the interpretation of nonvisual information about positions of body parts” (Harris 1980: 113). Once intermodal harmony is restored, the subject will again be able to perform visuomotor actions without error or difficulty, and she will again feel at home in the visually perceived world.

Hurley and Noë do not contest the numerous sources of empirical and introspective evidence that Stratton, Harris, and Rock adduce for the proprioceptive-change theory. Rather they reject the theory on the basis of what they take to be an untoward epistemic implication concerning adaptation to left-right reversal:

while rightward things really look and feel leftward to you, they come to seem to look and feel rightward. So the true qualities of your experience are no longer self-evident to you. (2003: 155)

The proprioceptive-change theory, however, does not imply such radical introspective error. According to proponents of the theory, experience normalizes after adaptation to reversal not because things that really look leftward “seem to look rightward” (what this might mean is enigmatic at best), but rather because the subjects eventually become familiar with the way things look when reversed—much as ordinary subjects can learn to read mirror-reversed writing fluently (Harris 1965: 435–36). Things seem “normal” after adaptation, in other words, because subjects are again able to cope with the visually perceived world in a fluent and unreflective manner.

A second line of evidence for the enactive approach comes from well-known experiments on tactile-visual sensory substitution (TVSS) devices that transform outputs from a low-resolution video camera into a matrix of vibrotactile stimulation on the skin of one’s back (Bach-y-Rita 1972, 2004) or electrotactile stimulation on the surface of one’s tongue (Sampaio et al. 2001).

At first, blind subjects equipped with a TVSS device experience its outputs as purely tactile. After a short time, however, many subjects cease to notice the tactile stimulations themselves and instead report having quasi-visual experiences of the objects arrayed in space in front of them. Indeed, with a significant amount of supervised training, blind subjects can learn to discriminate spatial properties such as shape, size, and location and even to perform simple “eye”-hand coordination tasks such as catching or batting a ball. A main finding of relevance in early experiments was that subjects learn to “see” by means of TVSS only when they have active control over movement of the video camera. Subjects who receive visual input passively—and therefore lack any knowledge of how (or whether) the camera is moving—experience only meaningless, tactile stimulation.

Hurley and Noë argue that passively stimulated subjects do not learn to “see” by means of sensory substitution because they are unable to learn the laws of sensorimotor contingency that govern the prosthetic modality:

active movement is required in order for the subject to acquire practical knowledge of the change from sensorimotor contingencies characteristic of touch to those characteristic of vision and the ability to exploit this change skillfully. (Hurley & Noë 2003: 145)

An alternative explanation, however, is that subjects who do not control camera movement—and who are not otherwise attuned to how the camera is moving—are simply unable to extract any information about the structure of the distal scene from the incoming pattern of sensory stimulations. In consequence they do not engage in “distal attribution” (Epstein et al. 1986; Loomis 1992; Siegel & Warren 2010): they do not perceive through the changing pattern of proximal stimulation to a spatially external scene in the environment. For development of this alternative explanation in the context of Bayesian perceptual psychology, see Briscoe forthcoming.

A final source of evidence for the enactive approach comes from studies of visuomotor development in the absence of normal, reafferent visual stimulation. Held & Hein 1963 performed an experiment in which pairs of kittens were harnessed to a carousel in a small, cylindrical chamber. One of the kittens was able to engage in free circumambulation while wearing a harness. The other kitten was suspended in the air in a metal gondola whose motions were driven by the first harnessed kitten. When the first kitten walked, both kittens moved and received identical visual stimulation. However, only the first kitten received reafferent visual feedback as the result of self-movement. Held and Hein reported that only mobile kittens developed normal depth perception—as evidenced by their unwillingness to step over the edge of a visual cliff, blinking reactions to looming objects, and visually guided paw placing responses. Noë (2004) argues that this experiment supports the enactive approach: in order to develop normal visual depth perception it is necessary to learn how motor outputs lead to changes to visual inputs.

There are two main reasons to be skeptical of this assessment. First, there is evidence that passive transport in the gondola may have disrupted the development of the kittens’ innate visual paw placing responses (Ganz 1975: 206). Second, the fact that passive kittens were prepared to walk over the edge of a visual cliff does not show that their visual experience of depth was abnormal. Rather, as Jesse Prinz (2006) argues, it may only indicate that they “did not have enough experience walking on edges to anticipate the bodily affordances of the visual world”.

2.3.3 Challenges to the Enactive Approach

The enactive approach confronts objections on multiple fronts. We focus on just three of them here (but see Block 2005; Prinz 2006; Briscoe 2008; Clark 2009: chap. 8; and Block 2012). First, the approach is essentially an elaboration of Held’s reafference theory and, as such, faces many of the same empirical obstacles. Evidence, for example, that active movement per se is not necessary for perceptual adaptation to optical rearrangement (Section 2.2.5) is at variance with predictions made by the reafference theory and the enactive approach alike.

A second line of criticism targets the alleged perceptual priority of P-properties. According to the enactive approach, P-properties are “perceptually basic” (Noë 2004: 81) because in order to see an object’s intrinsic, 3D spatial properties it is necessary to see its 2D P-properties and to understand how they would undergo transformation with variation in one’s point of view. When we view a tilted coin, critics argue, however, we do not see something that looks—in either an epistemic or non-epistemic sense of “looks”—like an upright ellipse. Rather, we see what looks like a disk that is partly nearer and partly farther away from us. In general, the apparent shapes of the objects we perceive are not 2D but have extension in depth (Austin 1962; Gibson 1979; Smith 2000; Schwitzgebel 2006; Briscoe 2008; Hopp 2013).

Support for this objection comes from work in mainstream vision science. In particular, there is abundant empirical evidence that an object’s 3D shape is specified by sources of spatial information in the light reflected or emitted from the object’s surfaces to the perceiver’s eyes as well as by oculomotor factors (for reviews, see Cutting & Vishton 1995; Palmer 1999; and Bruce et al. 2003). Examples include binocular disparity, vergence, accommodation, motion parallax, texture gradients, occlusion, height in the visual field, relative angular size, reflections, and shading. That such shape-diagnostic information, having once been processed by the visual system, is not lost in conscious visual experience of the object is shown by standard psychophysical methods in which experimenters manipulate the availability of different spatial depth cues and gauge the perceptual effects. Objects, for example, look somewhat flattened under uniform illumination conditions that eliminate shadows and highlights, and egocentric distances are underestimated for objects positioned beyond the operative range of binocular disparity, accommodation, and vergence. Results of such experimentation show that observers can literally see the difference made by the presence or absence of a certain cue in the light available to the eyes (Smith 2000; Briscoe 2008).

According to the influential dual systems model (DSM) of visual processing (Milner & Goodale 1995/2006; Goodale & Milner 2004), visual consciousness and visuomotor control are supported by functionally and anatomically distinct visual subsystems (these are the ventral and dorsal information processing streams, respectively). In particular, proponents of the DSM maintain that the contents of visual experience are not used by motor programming areas in the primate brain:

The visual information used by the dorsal stream for programming and on-line control, according to the model, is not perceptual in nature …[I]t cannot be accessed consciously, even in principle. In other words, although we may be conscious of the actions we perform, the visual information used to program and control those actions can never be experienced. (Milner & Goodale 2008: 775–776)

A final criticism of the enactive approach is that it is empirically falsified by evidence for the DSM (see the commentaries on O’Regan & Noë 2001; Clark 2009: chap. 8; and the essays collected in Gangopadhyay et al. 2010): the bond it posits between what we see and what we do is much too tight to comport with what neuroscience has to tell us about their functional relations.

The enactivist can make two points in reply to this objection. First, experimental findings indicate that there are a number of contexts in which information present in conscious vision is utilized for purposes of motor programming (see Briscoe 2009 and Briscoe & Schwenkler forthcoming). Action and perception are not as sharply dissociated as proponents of DSM sometimes claim.

Second, the enactive approach, as emphasized above, rejects the idea that the function of vision is to guide actions. It

does not claim that visual awareness depends on visuomotor skill, if by “visuomotor skill” one means the ability to make use of vision to reach out and manipulate or grasp. Our claim is that seeing depends on an appreciation of the sensory effects of movement (not, as it were, on the practical significance of sensation). (Noë 2010: 249)

Since the enactive approach is not committed to the idea that seeing depends on knowing how to act in relation to what we see, it is not threatened by empirical evidence for a functional dissociation between visual awareness and visually guided action.

3. Motor Component and Efferent Readiness Theories

At this point, it should be clear that the claim that perception is active or action-based is far from unambiguous. Perceiving may implicate action in the sense that it is taken constitutively to involve associations with touch (Berkeley 1709), kinaesthetic feedback from changes in eye position (Lotze 1887 [1879]), consciously experienced “effort of the will” (Helmholtz 2005 [1924]), or knowledge of the way reafferent sensory stimulation varies as a function of movement (Held 1961; O’Regan & Noë 2001; Hurley & Noë 2003).

In this section, we shall examine two additional conceptions of the role of action in perception. According to the motor component theory, as we shall call it, efference copies generated in the oculomotor system and/or proprioceptive feedback from eye-movements are used in tandem with incoming sensory inputs to determine the spatial attributes of perceived objects (Helmholtz 2005 [1924]; Mack 1979; Shebilske 1984, 1987; Ebenholtz 2002). Efferent readiness theories, by contrast, appeal to the particular ways in which perceptual states prepare the observer to move and act in relation to the environment. The modest readiness theory, as we shall call it, claims that the way an object’s spatial attributes are represented in visual experience is sometimes modulated by one or another form of covert action planning (Festinger et al. 1967; Coren 1986; Vishton et al. 2007). The bold readiness theory argues for the stronger, constitutive claim that, as J.G. Taylor puts it, “perception and multiple simultaneous readiness for action are one and the same thing” (1968: 432).

3.1 The Motor Component Theory (Embodied Visual Perception)

As pointed out in Section 2.3.2, there are numerous, independently variable sources of information about the spatial layout of the environment in the light sampled by the eye. In many cases, however, processing of stimulus information requires or is optimized by recruiting sources of auxiliary information from outside the visual system. These may be directly integrated with incoming visual information or used to change the weighting assigned to one or another source of optical stimulus information (Shams & Kim 2010; Ernst 2012).

An importantly different recruitment strategy involves combining visual input with non-perceptual information originating in the body’s motor control systems, in particular, efference copy, and/or proprioceptive feedback from active movement (kinaesthesis). The motor component theory, as we shall call it, is premised on evidence for such motor-modal processing.

The motor component theory can be made more concrete by examining three situations in which the spatial contents of visual experience are modulated by information concerning recently initiated or impending bodily movements:

- Apparent direction: The retinal image produced by an object is ambiguous with respect to the object’s direction in the absence of extraretinal information concerning the orientation of the eye relative to the head. While there is evidence that proprioceptive inflow from muscle spindles in the extraocular muscles is used to encode eye position, as Sherrington 1918 proposed, outflowing efference copy is generally regarded as the more heavily weighted source of information (Bridgeman & Stark 1991). The problem of visual direction constancy was discussed in detail in Section 2.1 above.

- Apparent distance and size: When an object is at close range, its distance in depth from the perceiver can be determined on the basis of three variables: (1) the distance between the perceiver’s eyes, (2) the vergence angle formed by the line of sight from each eye to the object, and (3) the direction of gaze. Information about (1) is updated in the course of development as the perceiver’s body grows. Information about (2) and (3), which vary from one moment to the next, is obtained from efference copy of the motor command to fixate the object as well as proprioceptive feedback from the extraocular muscles. Since an object’s apparent size is a function of its perceived distance from the perceiver and the angle it subtends on the retina (Emmert 1881), information about (2) and (3) can thus modulate visual size perception (Mon-Williams et al. 1997).

- Apparent motion: In the most familiar case of motion perception, the subject visually tracks a moving target, e.g., a bird in flight, against a stable background using smooth pursuit eye movements. As Bridgeman et al. 1994 note, smooth pursuit “reverses the movement conditions on the retina: the tracked object sweeps across the retina very little, while the background undergoes a brisk motion” (p. 255). Nonetheless, it is the target that appears to be in motion while the environment appears to be stationary. There is evidence that the visual system is able to compensate for pursuit-induced retinal motion by means of efference-based information about the changing direction of gaze (for a review, see Furman & Gur 2012). This has been used to explain the travelling moon illusion (Post & Leibowitz 1985: 637). Neuropsychological findings indicate that failure to integrate efference-based information about eye movement leads to a breakdown in perceived background stability during smooth pursuit (Haarmeier et al. 1997; Nakamura & Colby 2002; Fischer et al. 2012).
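The geometry behind the distance, size, and motion cases can be illustrated with a minimal Python sketch. It is an idealization for exposition only, not a model proposed by any of the authors cited above. It assumes symmetric fixation straight ahead (so the direction-of-gaze variable drops out), treats size as scaling with distance for a fixed visual angle, and treats pursuit compensation as simple addition of an efference-based eye-velocity estimate to retinal velocity. All function and parameter names are hypothetical.

```python
import math


def distance_from_vergence(interocular_m, vergence_rad):
    """Distance to a fixated point, assuming symmetric fixation straight ahead.

    The two lines of sight and the interocular baseline form an isosceles
    triangle, so distance = (baseline / 2) / tan(vergence / 2).
    """
    return (interocular_m / 2.0) / math.tan(vergence_rad / 2.0)


def linear_size(distance_m, visual_angle_rad):
    """Linear size implied by a visual angle at a given distance.

    For a fixed visual angle, the implied size grows in proportion to the
    distance estimate (an assumed size-distance scaling rule).
    """
    return 2.0 * distance_m * math.tan(visual_angle_rad / 2.0)


def world_velocity(retinal_velocity, eye_velocity_estimate):
    """Additive compensation for pursuit (an illustrative assumption).

    retinal_velocity: image motion on the retina (deg/s).
    eye_velocity_estimate: efference-based estimate of eye rotation (deg/s).
    """
    return retinal_velocity + eye_velocity_estimate
```

On these assumptions, a larger vergence angle yields a nearer distance estimate, and a nearer distance estimate yields a smaller size estimate for the same retinal angle. During pursuit at 10 deg/s, a tracked target with roughly zero retinal motion comes out as moving at 10 deg/s, while a background sweeping across the retina at -10 deg/s comes out as stationary. If the eye-velocity estimate were missing, the background would instead be assigned the full retinal motion, which is the kind of breakdown in background stability described in the neuropsychological findings.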

The motor component theory is a version of the view that perception is embodied in the sense of Prinz 2009 (see the entry on embodied cognition). Prinz explains that

embodied mental capacities, are ones that depend on mental representations or processes that relate to the body…. Such representations and processes come in two forms: there are representations and processes that represent or respond to body, such as a perception of bodily movement, and there are representations and processes that affect the body, such as motor commands. (2009: 420; for relevant discussion of various senses of embodiment, see Alsmith and de Vignemont 2012)

The three examples presented above provide empirical support for the thesis that visual perception is embodied in this sense. For additional examples, see Ebenholtz 2002: chap. 4.

3.2 The Efferent Readiness Theory

Patients with frontal lobe damage sometimes exhibit pathological “utilization behaviour” (Lhermitte 1983) in which the sight of an object automatically elicits behaviors typically associated with it, such as automatically pouring water into a glass and drinking it whenever a bottle of water and a glass are present (Frith et al. 2000: 1782). That normal subjects often do not automatically perform actions afforded by a perceived object, however, does not mean that they do not plan, or imaginatively rehearse, or otherwise represent them. (On the contrary, recent neuroscientific findings suggest that merely perceiving an object often covertly prepares the motor system to engage with it in a certain manner. For overviews, see Jeannerod 2006 and Rizzolatti 2008.)

Efferent readiness theories are based on the idea that covert preparation for action is “an integral part of the perceptual process” and not “merely a consequence of the perceptual process that has preceded it” (Coren 1986: 394). According to the modest readiness theory, as we shall call it, covert motor preparation can sometimes influence the way an object’s spatial attributes are represented in perceptual experience. The bold readiness theory, by contrast, argues for the stronger, constitutive claim that to perceive an object’s spatial properties just is to be prepared or ready to act in relation to the object in certain ways (Sperry 1952; Taylor 1962, 1965, 1968).

3.2.1 The Modest Readiness Theory

A number of empirical findings motivate the modest readiness theory. Festinger et al. 1967 tested the view that visual contour perception is

determined by the particular sets of preprogrammed efferent instructions that are activated by the visual input into a state of readiness for immediate use. (p. 34)

Contact lenses that produce curved retinal input were placed on the right eye of three observers, who were instructed to scan a horizontally oriented line with their left eye covered for 40 minutes. The experimenters reported that there was an average of 44% adaptation when the line was physically straight but retinally curved, and an average of 18% adaptation when the line was physically curved but retinally straight (see Miller & Festinger 1977, however, for conflicting results).

An elegantly designed set of experiments by Coren 1986 examined the role of efferent readiness in the visual perception of direction and extent. Coren’s experiments support the hypothesis that the spatial parameter controlling the length of a saccade is not the angular direction of the target relative to the line of sight, but rather the direction of the center of gravity (COG) of all the stimuli in its vicinity (Coren & Hoenig 1972; Findlay 1982). Importantly,

the bias arises from the computation of the saccade that would be made and, hence, is held in readiness, rather than the saccade actually emitted. (Coren 1986: 399)

The COG bias is illustrated in Figure 3. In the first row (top), there are no extraneous stimuli near the saccade target. Hence, the saccade from the point of fixation to the target is unbiased. In the second row, by contrast, the location of an extraneous stimulus (×) results in a saccade from the point of fixation that undershoots its target, while in the third row the saccade overshoots its target. In the fourth row, changing the location of the extraneous stimulus eliminates the COG bias: because the extraneous stimulus is near the point of fixation rather than the saccade target, the saccade is accurate.

![[Two columns of 4 dots each, each pair of dots on the same horizontal. The left column has the words 'Fixation Point' at the top and a blue arrow pointing from those words to the top of the column. The right column has the words 'Saccade Target' and a similar blue arrow. The top (or first) pair of dots has an arrow curving from the left dot to the right dot. The second pair has an 'x' to the left of the right dot and an arrow curving from the left dot to a point between the 'x' and the right dot. The third pair has an 'x' to the right of the right dot and an arrow curving from the left dot to a point between the right dot and the 'x'. The fourth pair has an 'x' to the left of the left dot and an arrow curving from the left dot to the right dot.]](fig3.png)

Figure 3: The effect of starting eye position on saccade programming (after Coren 1986: 405)

The COG bias is evolutionarily adaptive: eye movements will bring both the saccade target and nearby objects into high acuity vision, thereby maximizing the amount of information obtained with each saccade. Coren found, however, that motor preparation or “efferent readiness” to execute an undershooting or overshooting saccade can also give rise to a corresponding illusion of extent (1986: 404–406). Observers will, for example, perceptually underestimate the distance between the point of fixation and the saccade target when there is an extraneous stimulus on the near side of the target (as in the second row of Figure 3) and will perceptually overestimate that distance when there is an extraneous stimulus on the far side of the target (as in the third row of Figure 3).
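The four rows of Figure 3 can be mimicked with a toy Python sketch of a center-of-gravity computation. This is an illustration under stated assumptions, not Coren’s own model: positions are points on a single horizontal axis, the target and each nearby stimulus are weighted equally, and “vicinity” is implemented as a hard cutoff radius around the target. The function name, the positions, and the radius are hypothetical.

```python
def cog_landing_position(target, extraneous, vicinity_radius=3.0):
    """Programmed saccade endpoint as the mean position of the target and
    any extraneous stimuli lying within vicinity_radius of the target.

    Equal weighting and a hard cutoff are simplifying assumptions made
    for illustration only.
    """
    nearby = [x for x in extraneous if abs(x - target) <= vicinity_radius]
    positions = [target] + nearby
    return sum(positions) / len(positions)


# Fixation at 0, saccade target at 10 (arbitrary units).
row1 = cog_landing_position(10.0, [])      # no extraneous stimulus: accurate
row2 = cog_landing_position(10.0, [8.0])   # stimulus on near side: undershoot
row3 = cog_landing_position(10.0, [12.0])  # stimulus on far side: overshoot
row4 = cog_landing_position(10.0, [-2.0])  # stimulus near fixation: accurate
```

On the modest readiness theory, the perceived extent would be expected to follow the programmed endpoint rather than the physical target position: shorter than the true distance in the second case, longer in the third.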

According to Coren, the well-known Müller-Lyer illusion can be explained within this framework. The outwardly turned wings in the Müller-Lyer display shift the COG outward from each vertex, while the inwardly turned wings shift the COG inward. This influences both saccade length from vertex to vertex and the apparent length of the central line segments. The influence of COG on efferent readiness to execute eye movements, Coren argues (1986: 400–403), also explains why the line segments in the Müller-Lyer display can be replaced with small dots while leaving the illusion intact, as well as the effects of varying wing length and wing angle on the magnitude of the illusion.