Innateness and Language

The philosophical debate over innate ideas and their role in the acquisition of knowledge has a venerable history. It is thus surprising that very little attention was paid until early last century to the questions of how linguistic knowledge is acquired and what role, if any, innate ideas might play in that process.

To be sure, many theorists have recognized the crucial part played by language in our lives, and have speculated about the (syntactic and/or semantic) properties of language that enable it to play that role. However, few had much to say about the properties of us in virtue of which we can learn and use a natural language. To the extent that philosophers before the 20th century dealt with language acquisition at all, they tended to see it as a product of our general ability to reason — an ability that makes us special, and that sets us apart from other animals, but that is not tailored for language learning in particular.

In Part 5 of the Discourse on the Method, for instance, Descartes identifies the ability to use language as one of two features distinguishing people from “machines” or “beasts” and speculates that even the stupidest people can learn a language (when not even the smartest beast can do so) because human beings have a “rational soul” and beasts “have no intelligence at all.” (Descartes 1984: 140-1.) Like other great philosopher-psychologists of the past, Descartes seems to have regarded our acquisition of concepts and knowledge (‘ideas’) as the main psychological mystery, taking language acquisition to be a relatively trivial matter in comparison; as he puts it, albeit ironically, “it patently requires very little reason to be able to speak.” (1984: 140.)

All this changed in the early twentieth century, when linguists, psychologists, and philosophers began to look more closely at the phenomena of language learning and mastery. With advances in syntax and semantics came the realization that knowing a language is not merely a matter of associating words with concepts. It also crucially involves knowledge of how to put words together, for it's typically sentences that we use to express our thoughts, not words in isolation.

If that's the case, though, language mastery can be no simple matter. Modern linguistic theories have shown that human languages are vastly complex objects. The syntactic rules governing sentence formation and the semantic rules governing the assignment of meanings to sentences and phrases are immensely complicated, yet language users apparently apply them hundreds or thousands of times a day, quite effortlessly and unconsciously. But if knowing a language is a matter of knowing all these obscure rules, then acquiring a language emerges as the monumental task of learning them all. Thus arose the question that has driven much of modern linguistic theory: How could mere children learn the myriad intricate rules that govern linguistic expression and comprehension in their language — and learn them solely from exposure to the language spoken around them?

Clearly, there is something very special about the brains of human beings that enables them to master a natural language — a feat usually more or less completed by age 8 or so. §2.1 of this article introduces the idea, most closely associated with the work of the MIT linguist Noam Chomsky, that what is special about human brains is that they contain a specialized ‘language organ,’ an innate mental ‘module’ or ‘faculty,’ that is dedicated to the task of mastering a language.

On Chomsky's view, the language faculty contains innate knowledge of various linguistic rules, constraints and principles; this innate knowledge constitutes the ‘initial state’ of the language faculty. In interaction with one's experiences of language during childhood — that is, with one's exposure to what Chomsky calls the ‘primary linguistic data’ or ‘pld’ (see §2.1) — it gives rise to a new body of linguistic knowledge, namely, knowledge of a specific language (like Chinese or English). This ‘attained’ or ‘final’ state of the language faculty constitutes one's ‘linguistic competence’ and includes knowledge of the grammar of one's language. This knowledge, according to Chomsky, is essential to our ability to speak and understand a language (although, of course, it is not sufficient for this ability: much additional knowledge is brought to bear in ‘linguistic performance,’ that is, actual language use).[1]

§§2.2-2.5 discuss the main arguments used by Chomsky and others to support this ‘nativist’ view that what makes language acquisition possible is the fact that much of our linguistic knowledge is unlearned; it is innate or inborn, part of the initial state of the language faculty.[2] Section 3 presents a number of other avenues of research that have been argued to bear on the innateness of language, and shows how recent empirical research about language learning and the brain may challenge the nativist position. Because much of this material is very new, and because my conclusions (many of which are tentative) are highly controversial, more references to the empirical literature than are normal in an encyclopedia article are included. The reader is encouraged to follow up on the research cited and assess the plausibility of linguistic nativism for him or herself: whether language is innate or not is, after all, an empirical issue.

- 1. Chomsky's Case against Skinner

- 2. Arguments for the Innateness of Language

- 3. Other Research Bearing on the Innateness of Language: New Problems for the Nativist?

- Bibliography

- Academic Tools

- Other Internet Resources

- Related Entries

1. Chomsky's Case against Skinner

The behaviorist psychologist B.F. Skinner was the first theorist to propose a fully fledged theory of language acquisition in his book, Verbal Behavior (Skinner 1957). His theory of learning was closely related to his theory of linguistic behavior itself. He argued that human linguistic behavior (that is, our own utterances and our responses to the utterances of others) is determined by two factors: (i) the current features of the environment impinging on the speaker, and (ii) the speaker's history of reinforcement (i.e., the giving or withholding of rewards and/or punishments in response to previous linguistic behaviors). Eschewing talk of the mental as unscientific, Skinner argued that ‘knowing’ a language is really just a matter of having a certain set of behavioral dispositions: dispositions to say (and do) appropriate things in response to the world and the utterances of others. Thus, knowing English is, in small part, a matter of being disposed to utter “Please close the door!” when one is cold as a result of a draught from an open door, and of being disposed (other things being equal) to utter “OK” and go shut a door in response to someone else's utterance of that formula.

Given his view that knowing a language is just a matter of having a certain set of behavioral dispositions, Skinner believed that learning a language just amounts to acquiring that set of dispositions. He argued that this occurs through a process that he called operant conditioning. (‘Operants’ are behaviors that have no discernible law-like relation to particular environmental conditions or ‘eliciting stimuli.’ They are to be contrasted with ‘respondents,’ which are reliable or reflex responses to particular stimuli. Thus, blinking when someone pokes at your eye is a respondent; episodes of infant babbling are operants.) Skinner held that most human verbal behaviors are operants: they start off unconnected with any particular stimuli. However, they can acquire connections to stimuli (or other behaviors) as a result of conditioning. In conditioning, the behavior in question is made more (or in some paradigms less) likely to occur in response to a given environmental cue by the imposition of an appropriate ‘schedule of reinforcement’: rewards or punishments are given or withheld as the subject's response to the cue varies over time.

According to Skinner, language is learned when children's verbal operants are brought under the ‘control’ of environmental conditions as a result of training by their caregivers. They are rewarded (by, e.g., parental approval) or punished (by, say, a failure of comprehension) for their various linguistic productions and as a result, their dispositions to verbal behavior gradually converge on those of the wider language community. Likewise, Skinner held, ‘understanding’ the utterances of others is a matter of being trained to perform appropriate behaviors in response to them: one understands ‘Shut the door!’ to the extent that one responds appropriately to that utterance.

In his famous review of Skinner's book, Chomsky (1959) effectively demolishes Skinner's theories of both language mastery and language learning. First, Chomsky argued, mastery of a language is not merely a matter of having one's verbal behaviors ‘controlled’ by various elements of the environment, including others' utterances. For language use is (i) stimulus independent and (ii) historically unbound. Language use is stimulus independent: virtually any words can be spoken in response to any environmental stimulus, depending on one's state of mind. Language use is also historically unbound: what we say is not determined by our history of reinforcement, as is clear from the fact that we can and do say things that we have not been trained to say.

The same points apply to comprehension. We can understand sentences we have never heard before, even when they are spoken in odd or unexpected situations. And how we react to the utterances of others is again dependent largely on our state of mind at the time, rather than any past history of training. There are linguistic conventions in abundance, to be sure, but as Chomsky rightly pointed out, human ‘verbal behavior’ is quite disanalogous to a pigeon's disk-pecking or a rat's maze-running. Mastery of language is not a matter of having a bunch of mere behavioral dispositions. Instead, it involves a wealth of pragmatic, semantic and syntactic knowledge. What we say in a given circumstance, and how we respond to what others say, is the result of a complex interaction between our history, our beliefs about our current situation, our desires, and our knowledge of how our language works. Skinner's first big mistake, then, was in failing to recognize that language mastery involves knowledge (or, as Chomsky later called it, ‘cognizance’) of linguistic rules and conventions.

His second big mistake was related to this one: he failed to recognize that acquiring mastery of a language is not a matter of being trained what to say. It's simply false, says Chomsky, that “a careful arrangement of contingencies of reinforcement by the verbal community is a necessary condition of language learning.” (1959:39) First, children learning language do not appear to be being ‘conditioned’ at all! Explicit training (such as a dog receives when learning to bark on command) is simply not a feature of language acquisition. It's only comparatively rarely that parents correct (or explicitly reward) their children's linguistic sorties; children learn much of what they know about language from watching TV or passively listening to adults; immigrant children learn a second language to native speaker fluency in the school playground; and even very young children are capable of linguistic innovation, saying things undreamt of by their parents. As Chomsky concludes: “It is simply not true that children can learn language only through ‘meticulous care’ on the part of adults who shape their verbal repertoire through careful differential reinforcement.” (1959:42)

Secondly, Chomsky argued — and here we see his first invocation of the famous ‘poverty of the stimulus’ argument, to be discussed in more detail in §2.2 below — it is unclear that conditioning could even in principle give rise to a set of dispositions rich enough to generate the full range of a person's linguistic behavior. In order, for example, to acquire the appropriate set of dispositions concerning the word car, one would have to be trained on vast numbers of sentences containing that word: one would have to hear car in object position and car in subject position; car modified by adjectives and car unmodified; car embedded in opaque contexts (e.g. in propositional attitude ascriptions) and car used transparently; and so on. But the ‘primary linguistic data,’ usually referred to as the ‘pld’ and comprising the set of sentences to which a child is exposed during language learning (plus any analysis performed by the child on those sentences; see below), simply cannot be assumed to contain enough of these ‘minimally differing sentences’ to fully determine a person's dispositions with respect to that word. Instead, Chomsky argued, what determines one's dispositions to use car is one's knowledge of that word's syntactic and semantic properties (e.g., car is a noun referring to cars), together with one's knowledge of how elements with those properties function in the language as a whole. So even if language mastery were (in part) a matter of having dispositions concerning car, the mechanism of conditioning would be unable to give rise to them. The training set to which children have access is simply too limited: it doesn't contain enough of the right sorts of exemplars.

In sum: Skinner was mistaken on all counts. Language mastery is not merely a matter of having a set of bare behavioral dispositions. Instead, it involves intricate and detailed knowledge of the properties of one's language. And language learning is not a matter of being trained what to say. Instead, children learn language just from hearing it spoken around them, and they learn it effortlessly, rapidly, and without much in the way of overt instruction.

These insights were to drive linguistic theorizing for the next fifty years, and it's worth emphasizing just how radical and exciting they were at the time. First, the idea that explaining language use involves attributing knowledge to speakers flouted the prevailing behaviorist view that talking about mental states was unscientific because mental states are unobservable. It also raised several pressing empirical questions that linguists are still debating. For example, what is the content of speakers' knowledge of language?[3] What sorts of facts about language are represented in speakers' heads? And how does this knowledge actually function in the psychological processes of language production and comprehension: what are the mechanisms of language use?

Secondly, the idea that children learn language essentially on their own was a radical challenge to the prevailing behaviorist idea that all learning involves reinforcement. In addition, it made clear our need for a more ‘cognitive’ or ‘mentalistic’ conception of how language learning occurs, and vividly raised the question — our focus in this article — of what might be the preconditions for that process. As we will see in the next section, Chomsky was ready with a theory addressing each of these points.

2. Arguments for the Innateness of Language

2.1 What do Children Learn when they Learn Language?

At the same time as the behaviorist program in psychology was waning under pressure from Chomsky and others, linguists were abandoning what is known as ‘American Structuralism’ in the theory of syntax. Like the behaviorists, the structuralists (e.g., Harris, 1951) refused to postulate irreducibly theoretical entities; they insisted that syntactic categories (such as ‘noun phrase’ (‘NP’) or ‘verb phrase’ (‘VP’), etc.) be reducible to properties of actual utterances (collected in ‘corpora’ — lists of things people have said). In his landmark book, Syntactic Structures (1957), however, Chomsky argued that because corpora can contain only finitely many sentences, no attempt at reduction can succeed. Linguists need theoretical constructs that capture regularities going beyond the set of actual utterances, and that allow them to predict the properties of novel utterances. But if the category NP, for instance, is to include noun phrases that haven't been uttered yet, the meaning of noun phrase can't be exhausted by what's in the corpus: the structuralists' positivistic strictures on theoretical kinds are misguided.

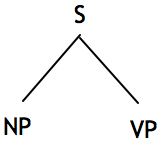

In addition, the structuralists had attempted to capture the syntactic properties of languages in terms of simple rewrite rules known as ‘phrase structure rules.’ Phrase structure rules describe the internal syntactic structures of sentence types; interpreted as rewrite rules, they can be used to generate or construct sentences. Thus, the rule S → NP VP, for instance, says that a sentence symbol S can be rewritten as the symbol NP followed by the symbol VP, and tells you that a sentence consists of a noun phrase followed by a verb phrase. (This information can be represented via a tree-diagram, as in Fig. 1a, or by a phrasemarker (or labeled bracketing), as in Fig. 1b.)

(a) [tree diagram]

(b) [[___]NP [___]VP]S

Figure 1. Phrasemarkers representing a sentence as consisting of a noun phrase and a verb phrase via (a) a tree diagram or (b) a labeled bracketing.

Other rules (such as NP → Det N; VP → V NP; Det → a, the, …; V → hit, kiss, …; N → boy, girl, …) are subsequently applied and (with still further rules not discussed here) allow for the generation of sentences such as The boy kissed the girl, The girl hits the boy, and so on.
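The way rewrite rules generate sentences can be sketched in a few lines of Python. This is only an illustration, assuming the toy rule set quoted above with the elided items left out; the names `RULES` and `expand` are hypothetical, and the "further rules" that inflect the verb (kissed, hits) are not modeled, so the output contains bare verb stems.

```python
import itertools

# Toy phrase structure rules from the text: each symbol maps to its
# possible right-hand sides. Anything not listed here counts as a word.
RULES = {
    "S": [["NP", "VP"]],
    "NP": [["Det", "N"]],
    "VP": [["V", "NP"]],
    "Det": [["a"], ["the"]],
    "N": [["boy"], ["girl"]],
    "V": [["hit"], ["kiss"]],
}

def expand(symbol):
    """Return every word sequence that can be derived from `symbol`."""
    if symbol not in RULES:  # a terminal symbol, i.e. an actual word
        return [[symbol]]
    results = []
    for right_hand_side in RULES[symbol]:
        # Expand each symbol on the right-hand side, then combine the options.
        parts = [expand(s) for s in right_hand_side]
        for combo in itertools.product(*parts):
            results.append([word for part in combo for word in part])
    return results

sentences = [" ".join(words) for words in expand("S")]
print(len(sentences))  # number of sentences this toy grammar generates
print(sentences[0])
```

Because none of these rules is recursive, the toy grammar generates only finitely many strings; a rule that reintroduced S or NP on its right-hand side would be needed to capture the unboundedness of a natural language.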

Chomsky argued (on technical grounds; see Chomsky 1957, ch.1) that grammars must be enriched with a second type of rule, known as ‘transformations.’ Unlike phrase structure rules, transformations operate on whole sentences (or more strictly, their phrasemarkers); they allow for the generation of new sentences (/phrasemarkers) out of old ones. The Passive transformation described in Chomsky 1957:112, for instance, specifies how to turn an active sentence (/phrasemarker) into a passive one. Simplifying somewhat, you take an active phrasemarker of the form NP — Aux — V — NP, like Kate is biting Mark, and rearrange its elements x1 — x2 — x3 — x4 as follows: x4 — x2 + be + en — x3 + by — x1 to get Mark bite (+ is + en) by Kate. The parenthetical + en and + is invoke further operations on the verb bite that transform it into is being bitten, and ultimately Kate is biting Mark is ‘transformed’ into Mark is being bitten by Kate.
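The rearrangement step of this simplified Passive rule can be sketched as a function over a four-part sequence. This is a minimal sketch under stated assumptions: the function name `passive` and the representation of a phrasemarker as a flat tuple are hypothetical, and the later operations that turn the verb into its final spoken form are deliberately left out.

```python
def passive(phrasemarker):
    """Rearrange an (NP, Aux, V, NP) sequence as the simplified rule describes.

    x1 - x2 - x3 - x4  becomes  x4 - x2 + be + en - x3 + by - x1
    """
    x1, x2, x3, x4 = phrasemarker
    return [x4, f"{x2} + be + en", f"{x3} + by", x1]

# "Kate is biting Mark", with the verb given as a bare stem (an assumption).
active = ("Kate", "is", "bite", "Mark")
print(" - ".join(passive(active)))
```

The point of the sketch is only that the rule is defined over the structure of the whole phrasemarker rather than over individual words: the same function applies to any sequence that fits the NP, Aux, V, NP pattern.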

Only a grammar containing both phrase structure and transformation rules, Chomsky argued, could generate a natural language — ‘generate’ in the sense that by stepwise application of the rules, one could in principle build up from scratch all and only the sentences that the language contains. Hence, Chomsky urged the development of generative grammars of this type.

Syntactic theory has now gone well beyond this early vision — both phrase structure and transformation rules were abandoned in successive linguistic revolutions wrought by Chomsky and his students and colleagues (see Newmeyer 1986, 1997 for a history of generative linguistics).

But what has not changed, and what is important for our purposes, is that in every version of the grammar of (say) English, the rules governing the syntactic structure of sentences and phrases are stated in terms of syntactic categories that are highly abstracted from the properties of utterances that are accessible to experience. As an example of this, consider the notion of a trace. Traces are symbols that appear in phrasemarkers and mark the path of an element as it is moved from one position to another at various stages of a sentence's derivation, as in (1), where t_i marks the NP Jacob's position at an earlier stage in the derivation.

- (1) Jacob_i seems [t_i to have vanished]

But while traces are vital to the statement of many syntactic rules and regularities, they are ‘empty categories’ — they are not audible in the sentence as spoken. (See Chomsky 1981 and Lasnik and Uriagereka 1986 for more on traces and other empty categories.) Traces (and other similarly abstract properties of languages) thus raise a question for the theory of language acquisition. For if, as Chomsky maintains, mastery of language involves knowledge of rules stated in terms of sentences' syntactic properties, and if those properties are not so to speak ‘present’ in the data, but are rather highly abstract and ‘unobservable,’ then it becomes hard to see how children could possibly acquire knowledge of the rules concerning them. As a consequence, children's feat in learning a language appears miraculous: how could a child learn the myriad rules governing linguistic expression given only her exposure to the sentences spoken around her?[4]

In response to this question, most 20th century theorists followed Chomsky in holding that language acquisition could not occur unless much of the knowledge eventually attained were innate or inborn. The gap between what speaker-hearers know about language (its grammar, among other things) and the data they have access to during learning (the pld) is just too broad to be bridged by any process of learning alone. It follows that since children patently do learn language, they are not linguistic ‘blank slates.’ Instead, Chomsky and his followers maintained, human children are born knowing the ‘Universal Grammar’ or ‘UG,’ a theory describing the most fundamental properties of all natural languages (e.g., the facts that elements leave traces behind when they move, and that their movements are constrained in various ways). Learning a particular language thus becomes the comparatively simple matter of elaborating upon this antecedently possessed knowledge, and hence appears a much more tractable task for young children to attempt.

Over the years, two conceptions of the innate contribution to language learning and its elaboration during the learning process have been proposed. In earlier writings (e.g., Chomsky 1965), Chomsky saw learning a language as basically a matter of formulating and testing hypotheses about its grammar (unconsciously, of course). He argued that in order to acquire the correct grammar, the child must innately know “a linguistic theory that specifies the form of the grammar of a possible human language” (1965:25); she must, in other words, know UG. He saw this knowledge as being embodied in a suite of innate linguistic abilities, concepts, and constraints on the kinds of grammatical rules learners can propose for testing. On this view (1965:30-31), the inborn UG includes (i) a way of analyzing and representing the incoming linguistic data; (ii) a set of linguistic concepts with which to state grammatical hypotheses; (iii) a way of telling how the data bear on those hypotheses (an ‘evaluation metric’); and (iv) a very restrictive set of constraints on the hypotheses that are available for consideration. Together, (i) through (iv) constitute the ‘initial state’ of the language faculty, and the child arrives at the final state (knowledge of her language) by performing what is basically a kind of scientific inquiry into its nature.

By the 1980's, a less intellectualized conception of how language is acquired began to supplant the hypothesis-testing model. Whereas the early model saw the child as a ‘little scientist,’ actively (if unconsciously) figuring out the rules of grammar, the new ‘parameter-setting’ model conceived language acquisition as a kind of growth or maturation; language acquisition is something that happens to you, not something you do. The innate UG was no longer viewed as a set of tools for inference; rather, it was conceived as a highly articulated set of representations of actual grammatical principles. Of course, since not everyone ends up speaking the same language, these innate representations must allow for some variation. This is achieved in this model via the notion of a ‘parameter’: some of the innately represented grammatical principles contain variables that may take one of a certain (highly restricted) range of values. These different ‘parameter settings’ are determined by the child's linguistic experience, and result in the acquisition of different languages. Thus, Chomsky (1988:61-62) compared the learner to a switchbox: just as a switchbox's circuitry is all in place but for some switches that need to be flicked to one position or another, the learner's knowledge of language is basically all in place, but for some linguistic ‘switches’ that are set by linguistic experience.

To illustrate how parameter setting works, consider a simplified example (discussed in more detail in Chomsky 1990:644-45). All languages require that sentences have subjects, but whereas some languages (like English) require that the subject be overt in the utterance, other languages (like Spanish) allow you to leave the subject out of the sentence when it is written or spoken. Thus, a Spanish speaker who wanted to say that he speaks Spanish could say Hablo español (leaving out the first-person pronoun yo) without violating the rules of Spanish, whereas an English speaker wanting to express that thought could not say *Speak Spanish without violating the rules of English: to speak grammatically, he must say I speak Spanish. The parameter-setting model accommodates this sort of difference by proposing that there is a ‘Null Subject Parameter,’ which is set differently in English and Spanish speakers: Spanish speakers set it to ‘Subject Optional,’ whereas in English speakers, it is set to ‘Subject Obligatory.’ How? One proposal is that the parameter is set by default to ‘Subject Obligatory’ and that hearing a subjectless sentence causes it to be set to ‘Subject Optional.’ Since children learning Spanish frequently hear subjectless sentences, whereas those learning English do not, the parameter setting is switched in the Spanish learner, but remains set at the default for the English learner. (Roeper and Williams 1987 is the locus classicus for parameter-setting models; Ayoun 2003 is more up-to-date; Pinker, 1997: ch.3 provides a helpful, non-technical overview.)
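The default-plus-trigger proposal just described can be sketched as follows. This is a deliberately crude illustration, not a model anyone has proposed in this form: the function names and the tiny pronoun list are hypothetical, and "having an overt subject" is approximated by checking whether the first word is a subject pronoun, which ignores imperatives, full noun-phrase subjects, and much else.

```python
# Hypothetical toy lexicon of overt subject pronouns (lower-cased).
SUBJECT_PRONOUNS = {"yo", "i"}

def has_overt_subject(utterance):
    """Crude stand-in for syntactic analysis: is the first word a subject pronoun?"""
    return utterance.split()[0].lower() in SUBJECT_PRONOUNS

def set_null_subject_parameter(pld):
    """Start at the default and flip the switch on the first subjectless sentence."""
    setting = "Subject Obligatory"  # the proposed default value
    for utterance in pld:
        if not has_overt_subject(utterance):
            setting = "Subject Optional"
            break  # a single triggering sentence suffices in this sketch
    return setting

spanish_pld = ["Yo hablo español", "Hablo español"]
english_pld = ["I speak Spanish", "I see the girl"]
print(set_null_subject_parameter(spanish_pld))
print(set_null_subject_parameter(english_pld))
```

Note what the learner in this sketch does not do: it never formulates or tests a grammatical hypothesis. The range of possible settings is fixed in advance, and experience merely selects among them, which is the sense in which the switchbox comparison treats acquisition as something that happens to the learner.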

These two approaches to language acquisition clearly differ significantly in their conception of the nature of the learning process and the learner's role in it, but we are not concerned to evaluate their respective merits here. Rather, the important point for our purposes is that they both attribute substantial amounts of innate information about language to the language learner. In what follows, we will look in more detail at the various arguments that have been used to support this ‘nativist’ theory of language acquisition. We will focus on the following question:

What evidence is there that children come to the language learning task equipped with a specialized store of inborn linguistic information, such as that specified in the linguist's theory of Universal Grammar?

Terminological Note: As Chomsky acknowledges (e.g., 1986:28-29), ‘Universal Grammar’ is used with a systematic ambiguity in his writings. Sometimes, the term refers to the inborn knowledge of language that learners are hypothesized to possess — the content of the ‘initial state’ of the language faculty — whatever that knowledge (/content) turns out to be. Other times, ‘Universal Grammar’ is used to refer to certain specific proposals as to the content of our innate linguistic knowledge, such as the Government-Binding theorist's claim that we have inborn knowledge of such things as the Principle of Structure Dependence, Binding theory, Theta theory, the Empty Category Principle, etc.

This ambiguity is important when one is evaluating Chomskyan claims that we have innate knowledge of UG. For on the first reading of ‘Universal Grammar’ distinguished above, that claim will be true so long as any form of nativism turns out to be true of language learners (i.e., so long as they possess any inborn knowledge about language). On the second reading, however, it is possible that learners have innate knowledge of language without that knowledge's being knowledge of UG (as currently described by linguists): learners might know things about language, yet not know Binding Theory, or the Principle of Structure Dependence, etc.

In this entry, ‘Universal Grammar’ will always be used in the second of these senses, to refer to a specific theory as to the content of learners' innate knowledge of language. Where the issue concerns merely their having some or other innate knowledge about language (and is neutral on the question of whether any particular theory about that knowledge is true), I will talk of ‘innate linguistic information.’ Clearly, an argument to the effect that speakers have inborn knowledge of UG entails the claim that they have innate linguistic information at their disposal. The reverse, however, is not the case: there might be reason to think that a speaker knows something about language innately, without its constituting reason to think that what they know is Universal Grammar as described by Chomskyan linguists; Chomsky might be right that we have innate knowledge about language, but wrong about what the content of that knowledge is. These issues will be clarified, as necessary, below.

2.2 Chomsky's ‘Poverty of the Stimulus’ Argument for the Innateness of Language

As we saw in §1, one of the conclusions Chomsky drew from his (1959) critique of the Skinnerian program was that language cannot be learned by mere association of ideas (such as occurs in conditioning). Since language mastery involves knowledge of grammar, and since grammatical rules are defined over properties of utterances that are not accessible to experience, language learning must be more like theory-building in science. Children appear to be ‘little linguists,’ making highly theoretical hypotheses about the grammar of their language and testing them against the data provided by what others say (and do):

It seems plain that language acquisition is based on the child's discovery of what from a formal point of view is a deep and abstract theory — a generative grammar of his language — many of the concepts and principles of which are only remotely related to experience by long and intricate chains of quasi-inferential steps. (Chomsky 1965:58)

However, argued Chomsky, just as conditioning was too weak a learning strategy to account for children's ability to acquire language, so too is the kind of inductive inference or hypothesis-testing that goes on in science. Successful scientific theory-building requires huge amounts of data, both to suggest plausible-seeming hypotheses and to weed out any false ones. But the data children have access to during their years of language learning (the ‘primary linguistic data’ or ‘pld’) are highly impoverished, in two important ways:

- (i) they constitute a small finite sample of the infinitely many sentences natural languages contain;

- (ii) they do not reliably contain the kinds of sentences that learners need to falsify incorrect hypotheses.

The first type of inadequacy is, of course, endemic to any kind of empirical inquiry: it is simply the problem of the underdetermination of theories by their evidence. Cowie has argued elsewhere that underdetermination per se cannot be taken to be evidence for nativism: if it were, we would have to be nativists about everything that people learn (Cowie 1994; 1999). What of the second kind of impoverishment? If the evidence about language available to children does not enable them to reject false hypotheses, and if they nonetheless hit on the correct grammar, then language learning could not be a kind of scientific inquiry, which depends in part on being able to find evidence to weed out incorrect theories. And indeed, this is what Chomsky argues: since the pld are not sufficiently rich or varied to enable a learner to arrive at the correct hypothesis about the grammar of the language she is learning, language could not be learned from the pld.

For consider: The fact (i) that the pld are finite whereas natural languages are infinite shows that children must be generalizing beyond the data when they are learning their language's grammar: they must be proposing rules that cover as-yet unheard utterances. This, however, opens up room for error. In order to recover from particular sorts of error, children would need access to particular kinds of data. If those data don't exist, as (ii) asserts, then children would not be able to correct their mistakes. Thus, since children do eventually converge on the correct grammar for their language, they mustn't be making those sorts of errors in the first place: something must be stopping them from making generalizations that they cannot correct on the basis of the pld.

Chomsky (e.g., 1965: 30-31) expresses this last point in terms of the need for constraints — on grammatical concepts, on the hypothesis space, on the interpretation of data — and proposes that it is innate knowledge of UG that supplies the needed limitations. On this view, children learning language are not open-minded or naïve theory generators — they are not ‘little scientists.’ Instead, the human language-learning mechanism (the ‘language acquisition device’ or ‘LAD’) embodies built-in knowledge about human languages, knowledge that prevents learners from entertaining most possible grammatical theories. As Chomsky puts it:

A consideration of…the degenerate quality and narrowly limited extent of the available data … leave[s] little hope that much of the structure of the language can be learned by an organism initially uninformed as to its general character. (1965:58)

Chomsky rarely states the argument from the poverty of the stimulus in its general form, as Cowie has done here. Instead, he typically presents it via an example. One of these concerns learning how to form ‘polar interrogatives,’ i.e., questions demanding yes or no by way of answer, via a mechanism known as ‘auxiliary fronting.’[5] Suppose that a child heard pairs of sentences like the following:

1a. Jacob is happy today

1b. Is Jacob happy today?

2a. The girls are dancing

2b. Are the girls dancing?

She wants to figure out the rule you use to turn declaratives like (1a) and (2a) into interrogatives like (1b) and (2b). Here are two possibilities:

H1. Find the first occurrence of ‘is’ in the sentence and move it to the front.

H2. Find the first occurrence of ‘is’ following the subject noun phrase (‘NP’) of the sentence, and move it to the front.

Both hypotheses are adequate to account for the data the learner has so far encountered. To any unbiased scientist, though, H1 would surely appear preferable to H2, for it is simpler — it is shorter, for one thing, and does not refer to theoretical properties, like being an NP, being instead formulated in terms of ‘observable’ properties like word order. Nonetheless, H1 is false, as is evident when you look at examples like (3):

3a. [The girl who is in the jumping castle]NP is Kayley's daughter

3b. *Is [the girl who in the jumping castle]NP is Kayley's daughter?

3c. Is [the girl who is in the jumping castle]NP Kayley's daughter?

H1 generates the ungrammatical question (3b), whereas H2 generates the correct version, (3c).[6] Now, you and I and every other English speaker know (in some sense — see §2.2.1(a)) that H1 is false and H2 is correct. That we know this is evident, Chomsky argues, from the fact that we all know that (3b) is not the right way to say (3c). The question is how we could have learnt this.
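The difference between the two hypotheses can be made concrete with a small sketch. The Python below is purely illustrative and is no part of Chomsky's presentation: it assumes that a sentence has already been divided into its subject NP and the remainder, represents each as a list of words, and handles only the auxiliary ‘is,’ as H1 and H2 are stated. All function names are hypothetical.

```python
def h1(subject_np, rest):
    """H1: move the first 'is' in the flat word string to the front."""
    words = subject_np + rest          # H1 ignores the NP boundary
    i = words.index("is")
    return ["is"] + words[:i] + words[i + 1:]


def h2(subject_np, rest):
    """H2: move the first 'is' that follows the subject NP to the front."""
    i = rest.index("is")               # search only after the subject NP
    return ["is"] + subject_np + rest[:i] + rest[i + 1:]


def as_question(words):
    """Join the words, capitalize the first letter, and add '?'."""
    text = " ".join(words)
    return text[0].upper() + text[1:] + "?"


# Sentence (1a): the subject NP contains no auxiliary.
simple = (["Jacob"], ["is", "happy", "today"])
# Sentence (3a): the subject NP itself contains 'is'.
embedded = ("the girl who is in the jumping castle".split(),
            "is Kayley's daughter".split())

print(as_question(h1(*simple)))    # Is Jacob happy today?
print(as_question(h2(*simple)))    # Is Jacob happy today?
print(as_question(h1(*embedded)))  # Is the girl who in the jumping castle is Kayley's daughter?
print(as_question(h2(*embedded)))  # Is the girl who is in the jumping castle Kayley's daughter?
```

On the simple sentence the two rules agree. They come apart only on a sentence whose subject NP itself contains ‘is,’ which is why data of that kind matter to the argument.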

Suppose, for example, that based on her experience of (1) and (2), a child were to adopt H1. How would she discover her error? There would seem to be two ways to do this. First, she could use H1 in her own speech, utter a sentence like (3b), and be corrected by her parents or caregivers; second, she could hear a sentence like (3c) uttered by a competent speaker, and realize that that sentence is not generated by her hypothesis, H1. But typically parents don't correct their children's ill-formed utterances (see §2.2.1(c) for more on this), and worse, according to Chomsky, sentences like (3c) — sentences that are not generated by the incorrect rule H1 and hence would falsify it — do not occur often enough in the pld to guarantee that every native English speaker will be able to get it right.

So in answer to the question: how do we learn that H2 is better than H1, Chomsky argued that we don't learn this at all! A better explanation of how we all know that H2 is right and H1 is wrong is that we were born knowing this fact. Or, more accurately, we were born knowing a certain principle of UG (the ‘Principle of Structure Dependence’), which tells us that rules like H1 are not worth pursuing, their ostensible ‘simplicity’ notwithstanding, and that we should always prefer rules, like H2, which are stated in terms of sentences' structural properties. In sum, we know that H2 is a better rule than H1, but we didn't learn this from our experience of the language. Rather, this fact is a consequence of our inborn knowledge of UG.

Chomskyans contend that there are many other cases in which speaker-hearers know grammatical rules, the critical evidence in favor of which is missing from the pld. Kimball 1973:73-5, for instance, argues that complex auxiliary sequences like might have been are “vanishingly rare” in the pld, hence that children acquire competence with these constructions (in the sense of knowing the order in which to put the modal, perfect and progressive elements) without relevant experience. (Pullum and Scholz 2002 discuss two other well-known examples.) Nativists thus conclude that numerous other principles of UG are innately known as well. Together, these UG principles place strong constraints on learners' linguistic theorizing, preventing them from making errors for which there are no falsifying data.

So endemic is the impoverishment of the pld, according to Chomskyans, that it began to seem as if the entire learning paradigm were inapplicable to language. As more and more and stricter and stricter innate constraints needed to be imposed on the learner's hypothesis space to account for their learning rules in the absence of relevant data, notions like hypothesis generation and testing seemed to have less and less purchase. This situation fuelled the recent shift away from hypothesis testing models of language acquisition and towards parameter setting models discussed in §2.1 above.

2.2.1 Criticisms of the Poverty of the Stimulus Argument

Many, probably most theorists in modern linguistics and cognitive science have accepted Chomsky's poverty of the stimulus argument for the innateness of UG. As a result, a commitment to linguistic nativism has underpinned most research into language acquisition over the last 40-odd years. Nonetheless, it is important to understand what criticisms have been leveled against the argument, which I schematize as follows for convenience:

The General Form of the Argument from the Poverty of the Stimulus

1. Mastery of a language consists (in part) of knowing its grammar.

2. In order to learn a certain rule of grammar, G, children would have to have access to certain sorts of data, D, which falsify competing hypotheses.

3. The primary linguistic data (pld) do not contain D.

So

4. G could not be learned.

5. This situation is quite general: many rules of grammar are unlearnable from the pld.

So

6. UG is innately known.

2.2.1(a) Premiss 1: Knowledge of grammar

In the 1970s, philosophers contested Chomsky's use of the word ‘know’ to describe speakers' relations to grammar, arguing that unlike standard cases of propositional knowledge, most speakers are utterly unaware of grammatical rules (e.g., “Anaphors are bound, and pronominals and R-expressions are free in their binding domains”) and many probably wouldn't understand them even if told what they are (Stich 1971). In response, Chomsky (e.g., 1980:92) began to use a technical term, ‘cognize,’ to describe the speaker-grammar relation, avoiding the philosophically loaded term, ‘knowledge.’

However, while it is certainly legitimate to propose a special relationship between speakers and grammars, unanswered questions remain about the precise nature of cognizance. Is it a representational relation, like belief? If not, what does ‘learning a grammar’ amount to? If so, are speakers' representations of grammar ‘explicit’ or ‘implicit’ or ‘tacit’ — and what, exactly, do any of these terms mean? (See the papers collected in MacDonald 1995, for discussion of this last issue; see Devitt 2006 for arguments that there is no good reason to suppose that speakers use any representations of grammatical rules in their production and comprehension of language.) Relatedly, how does a speaker's cognizance of grammar (her ‘competence,’ in Chomskyan parlance) function in her linguistic ‘performance’ — i.e., in the actual production or comprehension of an utterance?

These issues bear on the argument from the poverty of the stimulus because that argument may appear more or less impressive depending on the answers one gives to them. If, for instance, one held that grammars are belief-like entities, explicitly represented in our heads in some internal code (cf. Stich 1978), then the question of how those beliefs are acquired and justified is indeed a pressing one — as, for different reasons, is the question of how they function in performance (see Harman 1967, 1969). However, if one were to deny that grammar is represented at all in the heads of speakers, as Devitt 2006 and Soames 1984 do, then the issue of how language is learned and what role ‘evidence’ etc. might play in that process takes on a very different cast. Or if, to take a third possibility, one were to reject generative syntax altogether and adopt a different conception of what the content of speakers' grammatical knowledge is — along the lines of Tomasello (2003), say — then that again affects how one views the learning process. In other words, one's ideas about what is learned affect one's conception of what is needed to learn it. Less ‘demanding’ conceptions of the outputs of language acquisition require less demanding conceptions of its input (whether experiential or inborn); this last approach to the problem of language learning is discussed further in §2.2.1(b) below.

2.2.1(b) Premiss 2: The learning algorithm

In the example of polar interrogatives, discussed above, we saw how children apparently require explicit falsifying evidence in order to rule out the plausible-seeming but false hypothesis, H1. Premiss 2 of the argument generalizes this claim: there are many instances in which learners need specific kinds of falsifying data to correct their mistakes (data that the argument goes on to assert are unavailable). These claims about the data learners would need in order to learn grammar are underpinned by certain assumptions about the learning algorithm they employ. For example, the idea that false hypotheses are rejected only when they are explicitly falsified in the data suggests that learners are incapable of taking any kind of probabilistic or holistic approach to confirmation and disconfirmation. Likewise, the idea that learners unequipped with inborn knowledge of UG are very likely indeed to entertain false hypotheses suggests that their method of generating hypotheses is insensitive to background information or past experience (e.g., information about what sorts of generalizations have worked in other contexts).

The non-nativist language learner as envisaged by Chomsky in the original version of the poverty of the stimulus argument, in other words, is limited to a kind of Popperian methodology — one that involves the enumeration of all possible grammatical hypotheses, each of which is tested against the data, and each of which is rejected just in case it is explicitly falsified. As much work in philosophy of science over the last half century has indicated, though, nothing much of anything can be learned by this method: the world quite generally fails to supply falsifying evidence. Instead, hypothesis generation must be inductively based, and (dis)confirmation is a holistic matter.
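A toy version of such a learner, applied to the two hypotheses about polar interrogatives discussed above, may help fix ideas. The sketch is an assumption-laden illustration, not a model that anyone has proposed: the hypothesis space contains just H1 and H2, sentences are pre-divided into subject NP and remainder, only the auxiliary ‘is’ is handled, and all function and variable names are hypothetical.

```python
def h1(subject_np, rest):
    """H1: front the first 'is' in the flat word string."""
    words = subject_np + rest
    i = words.index("is")
    return ["is"] + words[:i] + words[i + 1:]


def h2(subject_np, rest):
    """H2: front the first 'is' that follows the subject NP."""
    i = rest.index("is")
    return ["is"] + subject_np + rest[:i] + rest[i + 1:]


def popperian_learner(hypotheses, pld):
    """Discard a hypothesis only when an observed question contradicts it."""
    surviving = dict(hypotheses)
    for (subject_np, rest), observed_question in pld:
        for name in list(surviving):
            if surviving[name](subject_np, rest) != observed_question:
                del surviving[name]    # explicit falsification
    return surviving


# Each datum pairs a declarative (subject NP, remainder) with the question heard.
simple_pair = ((["Jacob"], ["is", "happy", "today"]),
               "is Jacob happy today".split())
embedded_pair = (("the girl who is in the jumping castle".split(),
                  "is Kayley's daughter".split()),
                 "is the girl who is in the jumping castle Kayley's daughter".split())

candidates = {"H1": h1, "H2": h2}
print(sorted(popperian_learner(candidates, [simple_pair])))                 # ['H1', 'H2']
print(sorted(popperian_learner(candidates, [simple_pair, embedded_pair])))  # ['H2']
```

So long as the data contain only simple pairs, this learner has no way of discarding H1. It does so only once a question with an auxiliary inside the subject NP turns up, and it has no other grounds for preferring one surviving hypothesis to another.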

Thus arise two problems for the Chomskyan argument. First, it is not all that surprising to discover that if language learners employed a method of conjecture and refutation, then language could not be learned from the data. In other words, the poverty of the stimulus argument doesn't tell us much we didn't know already. Secondly, and as a result, the argument is quite weak: it makes the negative point that language acquisition does not occur via a Popperian learning strategy, but it favors no specific alternative to this acquisition theory. In particular, the argument gives no more support to a nativist (UG-based) theory than to one that proposed (say) that learners formulate grammatical hypotheses based on their extraction of statistical information about the pld and that they may reject them for reasons other than outright falsification — because they lack explicit confirmation, or because they do not cohere with other parts of the grammar, for instance.

In reply, some Chomskyans (e.g., Matthews 2001) challenge non-nativists to produce these alternative theories and submit them to empirical test. It's pointless, they claim, for nativists to try to argue against theories that are mere gleams in the empiricist's eye, particularly when Chomsky's approach has been so fruitful and thus may be supported by a powerful inference to the best explanation. Others have argued explicitly against particular non-nativist theories — Marcus 1998, 2001, for instance, discusses the shortcomings of connectionist accounts of language acquisition.

A recent book by Michael Tomasello (Tomasello 2003) addresses the nativist's demand for an alternative theory directly. Tomasello argues that language learners acquire knowledge of syntax by using inductive, analogical and statistical learning methods, and by examining a broader range of data for the purposes of confirmation and disconfirmation. He argues that children formulate abstract syntactic generalizations rather late in the learning process (around the age of 4 or 5) and that their earliest utterances are governed by much less general rules of thumb, or ‘constructions.’ More abstract constructions, framed in increasingly adult-like and ‘syntactic’ terms, are progressively formulated through the application of pattern-recognition skills (‘analogy’) and a kind of statistical analysis of both incoming data and previously acquired constructions, which Tomasello calls ‘functional distributional analysis.’[7]

Tomasello's theory differs from a Chomskyan approach in three important respects. First, and taking up a point mentioned in the previous section, it employs a different conception of linguistic competence, the end state of the learning process. Rather than thinking of competent speakers as representing the rules of grammar in the maximally abstract, simple and elegant format devised by generative linguists, Tomasello conceives of them as employing rules at a variety of different levels of abstraction, and, importantly, as employing rules that are not formulated in purely syntactic terms. He adopts a different type of grammar, called ‘cognitive-functional grammar’ or ‘usage-based grammar,’ in which rules are stated partly in terms of syntactic categories, but also in semantic terms, that is, in terms of their patterns of use and communicative function. A second respect in which Tomasello's approach differs from that of most theorists in the Chomskyan tradition is in employing a much richer conception of the ‘primary linguistic data,’ or pld. For generative linguists, the pld comprises a set of sentences, perhaps subject to some preliminary syntactic analysis, and the child learning grammar is thought of as embodying a function which maps that set of sentences onto the generative grammar for her language. On Tomasello's conception, the pld includes not just a set of sentences, but also facts about how sentences are used by speakers to fulfill their communicative intentions. On his view, semantic and contextual information is also used by children for the purposes of acquiring grammatical knowledge.

Tomasello argues that by adopting a more ‘user-friendly’ conception of natural language grammars and by radically expanding one's conception of the language-relevant information available to children learning language, the ‘gap’ exploited by the argument from the poverty of the stimulus — that is, the gap between what we know about language and the data we learn it from — in large part disappears. This gives rise to a third important respect in which Tomasello's theory differs from that of the linguistic nativist. On his view, children learn language without the aid of any inborn linguistic information: what children bring to the language learning task — their innate endowment — is not language-specific. Instead, it consists of ‘mind reading,’ together with perceptual and cognitive skills that are employed in other domains as well as language learning. These skills include: (i) the ability to share attention with others; (ii) the ability to discern others' intentions (including their communicative intentions); (iii) the perceptual ability to segment the speech stream into identifiable units at different levels of abstraction; and (iv) general reasoning skills, such as the ability to recognize patterns of various sorts in the world, the ability to make analogies between patterns that are similar in certain respects, and the ability to perform certain sorts of statistical analysis of these patterns. Thus, Tomasello's theory contrasts strongly with the nativist approach.

Although assessing Tomasello's theory of language acquisition is beyond the scope of this entry, this much can be said: the oft-repeated charge that empiricists have failed to provide comprehensive, testable alternatives to Chomskyanism is no longer sustainable, and if the what and how of language acquisition are along the lines that Tomasello describes, then the motivation for linguistic nativism largely disappears.

2.2.1(c) Premiss 3: What do the pld contain?

A third problem with the poverty of the stimulus argument is that there has been little systematic attempt to provide empirical evidence supporting its assertions about what the pld contain. This is an old complaint (cf. Sampson 1989) which has recently been renewed with some vigor by Pullum and Scholz 2002, Scholz and Pullum 2002, and Sampson 2002. Pullum and Scholz provide evidence that, contrary to what Chomsky asserts in his discussion of polar interrogatives, children can expect to encounter plenty of data that would alert them to the falsity of H1. Sampson 2002 mines the ‘British National Corpus/demographic,’ a 100-million-word corpus of everyday British speech (available online at http://info.ox.ac.uk/bnc/), for evidence that, contrary to Kimball's contention that complex auxiliaries are “vanishingly rare,” they in fact occur quite frequently (somewhere from once every 10,000 words to once every 70,000 words, or once every couple of days to once a week).
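The two ways of stating these frequencies can be related by simple arithmetic. The sketch below assumes, purely for illustration, a fixed number of words heard per day. The rates of 5,000 and 10,000 words per day are not reported figures: they are simply the rates at which the quoted word counts and time intervals coincide, reading ‘a couple of days’ as two days and ‘a week’ as seven. The function name is hypothetical.

```python
def days_between_encounters(words_per_occurrence, words_heard_per_day):
    """Expected days between encounters with a construction, given an
    assumed, constant daily exposure measured in words."""
    return words_per_occurrence / words_heard_per_day


# Upper frequency bound: once every 10,000 words.
print(days_between_encounters(10_000, 5_000))    # 2.0 days at an assumed 5,000 words/day
# Lower frequency bound: once every 70,000 words.
print(days_between_encounters(70_000, 10_000))   # 7.0 days at an assumed 10,000 words/day
```

The time intervals are thus only as reliable as the assumed exposure rate: halving the number of words a child hears per day doubles the expected wait between encounters.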

Chomskyans respond in two main ways to findings like this. First, they argue, it is not enough to show that some children can be expected to hear sentences like Is the girl who is in the jumping castle Kayley's daughter? All children learn the correct rule, so the claim must be that all children are guaranteed to hear sentences of this form — and this claim is still implausible, data like those just discussed notwithstanding.[8] In order to take this question further, it would be necessary to determine when in fact children master the relevant structures, and vanishingly little work has been done on this topic. Sampson 2002:82ff. found no well-formed auxiliary-fronted questions (like Is the girl who is in the jumping castle Kayley's daughter?) in his sample of the British National Corpus. He notes that in addition to supporting Chomsky's claims about the poverty of the pld, such data simultaneously problematize his claims about children's knowledge of the auxiliary-fronting rule itself. Sampson found that speakers invariably made errors when apparently attempting to produce complex auxiliary-fronted questions, and often emended their utterance to a tag form instead (e.g., The girl who's in the jumping castle is Kayley's daughter, isn't she?). He speculates that the construction is not idiomatic even in adult language, and that speakers learn to form and decode such questions much later in life, after encountering them in written English. If that were the case, then the lack of complex auxiliary-fronted questions in the pld would be both unsurprising and unproblematic: young children don't hear the sentences, but nor do they learn the rule. To my knowledge, children's competence with the auxiliary-fronting rule has not been addressed empirically.[9]

Secondly, Chomskyans may produce other versions of the poverty of the stimulus argument. For instance, Crain 1991 constructs a poverty of the stimulus argument concerning children's acquisition of knowledge of certain constraints on movement. However, while Crain's argument carefully documents children's conformity to the relevant grammatical rules, its nativist conclusion still relies on unsubstantiated intuitions as to the non-occurrence of relevant forms or evidence in the pld. It is thus inconclusive. (Cf. Crain 1991; Crain's experiments and their implications are discussed in Cowie 1999; cf. also Crain and Pietroski 2001, 2002.)

2.2.1(d) The validity of the argument

The argument from (1), (2), and (3) to (4) appears valid. However, as is implicit in my discussion of premiss (2), an equivocation between different senses of ‘learning’ threatens. What (1)-(3) show, if true, is that grammar G can't be learned from the pld by a learner using a ‘Popperian’ learning strategy, that is, a strategy of ‘bold conjecture’ and refutation. What (4) concludes, however, is that G is unlearnable, period, from the pld — a move that several authors, particularly connectionists, have objected to. (See especially Elman et al. 1996 and Elman 1998 for criticisms of Chomskyan nativism along these lines; see Marcus 1998 and 2001 for responses.)
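The threatened equivocation can be displayed schematically. The notation is introduced here for illustration only and is an assumption of this sketch: $L_P(G)$ abbreviates ‘G is learned from the pld by a Popperian strategy,’ $L(G)$ abbreviates ‘G is learned from the pld by some strategy or other,’ and $A(D)$ abbreviates ‘the pld contain D.’

```latex
\begin{align*}
&(2)\quad L_P(G) \rightarrow A(D) \\
&(3)\quad \neg A(D) \\
&\therefore\quad \neg L_P(G) && \text{(modus tollens)} \\
&(4)\quad \neg L(G) && \text{(requires the further premiss } L(G) \rightarrow L_P(G)\text{)}
\end{align*}
```

On this reading, premisses (2) and (3) license only the weaker conclusion that G is not learned by a Popperian strategy. Conclusion (4) follows only on the further assumption that every way of learning G from the pld is Popperian.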

Chomskyans typically take this point, conceding that the argument from the poverty of the stimulus is not apodeictic. Nonetheless, they claim, it's a very good argument, and the burden of proof belongs with their critics. After all, nativists have shown the falsity of the only non-nativist acquisition theories that are well-enough worked out to be empirically testable, namely, Skinnerian behaviorism and Popperian conjecture and refutation. In addition, they have proposed an alternative theory, Chomskyan nativism, which is more than adequate to account for the phenomena. In empirical science, this is all that they can reasonably be required to do. The fact that there might be other possible acquisition algorithms which might account for children's ability to learn language is neither here nor there; nativists are not required to argue against mere possibilities.

In response, some non-nativists have argued that UG-based theories are not in fact good theories of language acquisition. Tomasello (2003: 182ff.), for instance, identifies two major areas of difficulty for UG-based theories, such as the principles-and-parameters approach. First, there is the ‘linking’ problem, deriving from the fact of linguistic diversity: almost no UG-based accounts explain how children link the highly abstract categories of UG to their instantiations in the particular language they happen to be learning.[10] His example is the category ‘Head.’ In order to set the ‘Head parameter,’ a child needs to be able to identify which words in the stream of noise she is hearing are in fact clausal heads. But heads “do not come with identifying tags on them in particular languages; they share no perceptual features in common across languages, and so their means of identification cannot be specified in [UG]” (Tomasello 2003:183). Second, there is the problem of developmental change, also emphasized by Sokolov and Snow 1991. It is difficult to see how UG-based approaches can account for the fact that children's linguistic performance seems to emerge piecemeal over time, rather than emerging in adult-like form all at once, as the parameter-setting model suggests it should.[11] In response, generativists have appealed to such notions as ‘maturational factors’ or ‘performance factors.’ But, Tomasello argues, such measures are ad hoc in the absence of a detailed specification of what these maturational or performance factors are, and how they give rise to children's actual performance.

At the very least, such objections serve to equalize the burden of proof: non-nativists certainly have work to do, but so too do nativists. Merely positing an innate UG and a ‘triggering’ mechanism by which it ‘grows’ into full-fledged language is insufficient. Nativists need to show how their theory can account for the known course of language acquisition. Merely pointing out that there is a possibility that such theories are true, and that they would, if true, explain how language learning occurs in the face of an allegedly impoverished stimulus, is only part of the job.

2.2.1(e) Premiss 5: How general is the poverty of the stimulus?

Because they are defending the view that all of UG is inborn, Chomskyans must be credited with holding that the primary data are impoverished quite generally. That is, if the innateness of UG tout court is to be supported by poverty of the stimulus considerations, the idea must be that the cases that nativists discuss in detail (polar interrogatives, complex auxiliaries, etc.) are but the tip of the unlearnable iceberg. Nativists quite reasonably do not attempt to defend this claim by endless enumeration of cases. Rather, they turn to another kind of argument to support the ‘global impoverishment’ position. This argument is sometimes called the ‘Logical Problem of Language Acquisition’; here, we will call it ‘The Unlearning Problem.’ It will be discussed in §2.3.

2.2.1(f) The validity of the argument (II): What is inborn?

Suppose that the primary linguistic data were impoverished in all the ways that nativists claim and suppose, too, that children know a bunch of things for which there is no evidence available — suppose, as Hornstein and Lightfoot (1981:9) put it, that “[p]eople attain knowledge of the structure of their language for which no evidence is available in the data to which they are exposed as children.” What follows from this is that there must be constraints on the learning mechanism: children do not enumerate all possible grammatical hypotheses and test them against the data. Some possible hypotheses must be ruled out a priori. But, critics allege, what does not follow from this is any particular view about the nature of the requisite constraints. (Cowie 1999: ch.8.) A fortiori, what does not follow from this is the view that Universal Grammar (construed as a theory about the structural properties common to all natural languages, per Terminological Note 2 above) is inborn.

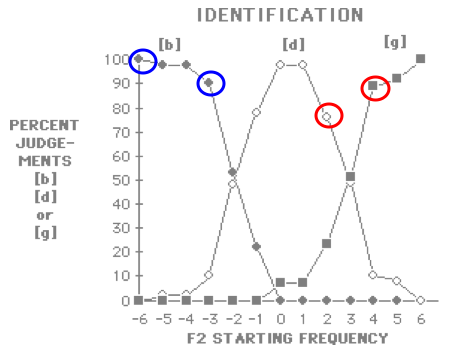

For all the poverty of the stimulus argument shows, the constraints in question might indeed be language-specific and innate, but with contents quite different from those proposed in current theories of UG. Or, the constraints might be innate, but not language-specific. For instance, as Tomasello 2003 argues, children's early linguistic theorizing appears to be constrained by their inborn abilities to share attention with others and to discern others' communicative intentions. On his view, a child's early linguistic hypotheses are based on the assumption that the person talking to him is attempting to convey information about the thing(s) that they are both currently attending to. (Another example of an innate but non-language-specific constraint on language learning derives from the structure of the mammalian auditory system; ‘categorical perception’ and its relation to the acquisition of phonological knowledge are discussed below, §3.3.4.) Another alternative is that the constraints might be learned, that is, derived from past experiences. An example again comes from Tomasello (2003). He argues that entrenchment, or the frequency with which a linguistic element has been used with a certain communicative function, is an important constraint on the development of children's later syntactic knowledge. For instance, it has been shown experimentally that the more often a child hears an element used for a particular communicative purpose, the less likely she is to extend that element to new contexts. (See Tomasello 2003:179.)

In short, there are many ways to constrain learners' hypotheses about how their language works. Since the poverty of the stimulus argument merely indicates the need for constraints, it does not speak to the question of what sorts of constraints those might be.

In response to this kind of point, Chomskyans point out that the innateness of UG is an empirical hypothesis supported by a perfectly respectable inference to the best explanation. Of course there is a logical space between the conclusion that something constrains the acquisition mechanism and the Chomskyan view that these constraints are inborn representations of Binding Theory, Theta theory, the ECP, the principle of Greed or Shortest Path and so on. But the mere fact that the argument from the poverty of the stimulus doesn't prove that UG is innately known is hardly reason to complain. This is science, after all, and demonstrative proofs are neither possible nor required. What the argument from the poverty of the stimulus provides is good reason to think that there are strong constraints on the learning mechanism. UG is at hand to supply a theory of those constraints. Moreover, that theory has been highly productive of research in numerous areas (linguistics, psycholinguistics, developmental psychology, second language research, speech pathology, etc.) over the last 50 years. These successes far outstrip anything that non-nativist learning theorists have been able to achieve even in their wildest dreams, and support a powerful inference to the best explanation in the Chomskyan's favor.

2.2.1(g) Who has the burden of proof?

As seen above (§2.2.1(d)), however, the strength of the Chomskyan's ability to explain the phenomena of language acquisition has been questioned, and with it, implicitly, the strength of her inference to the best explanation. In addition, there is a general debate within the philosophy of science as to the soundness of inferences to the best explanation: does an explanation's being the best available give any additional reason (over and above its ability to account for the phenomena within its domain) to suppose it true? (See the encyclopedia article ‘Abduction’ by Peter Achinstein for more on this topic.)

In the linguistic case, what sometimes seems to underpin people's positions on such issues is differing intuitions as to who has the burden of proof in this debate. Empiricists or non-nativists contend that Chomskyans have not presented enough data (or considered enough alternative hypotheses) to establish their case. Chomskyans reply that they have done more than enough, and that the onus is on their critics either to produce data disconfirming their view or to produce a testable alternative to it.

That such burden-shifting is endemic to discussions of linguistic nativism (the exchange in Ritter 2002 is illustrative) suggests to me that neither side in this debate has as yet fulfilled its obligations. Empiricists about language acquisition have ably identified a number of points of weakness in the Chomskyan case, but have only just begun to take on the demanding task of developing non-nativist learning theories, whether for language or anything much else. Nativists have rested content with hypotheses about language acquisition and innate knowledge that are based on plausible-seeming but largely unsubstantiated claims about what the pld contain, and about what children do and do not know and say.

It is unclear how to settle such arguments. While some may disagree (especially some Chomskyans), it seems that much work still needs to be done to understand how children learn language — and not just in the sense of working out the details of which parameters get set when, but in the sense of reconceiving both what linguistic competence consists in, and how it is acquired. In psychology, a new, non-nativist paradigm for thinking about language and learning has begun to emerge over the last 10 or so years, thanks to the work of researchers like Elizabeth Bates, Jeffrey Elman, Patricia Kuhl, Michael Tomasello and others. The reader is referred to Elman et al. 1996, Tomasello 2003 and §3 below for an entrée into this way of thinking.

For now, considerations of space demand a return to our topic, viz., linguistic nativism, rather than further discussion of alternatives to it.

2.3 The Argument from the ‘Unlearning Problem’

We saw in the previous section that in order to support the view that all of UG is innately known, nativists about language need to hold not just that the data for language learning is impoverished in a few isolated instances, but that it's impoverished across the board. That is, in order to support the view that the innate contribution to language acquisition is something as rich and detailed as knowledge of Universal Grammar, nativists must hold that the inputs to language acquisition are defective in many and widespread cases. (After all, if the inputs were degenerate only in a few isolated instances, such as those discussed above, the learning problem could be solved simply by positing innate knowledge of a few relevant linguistic hints, rather than all of UG.)

Pullum and Scholz (2002:13) helpfully survey a number of ways in which nativists have made this point, including:

- (i) Finiteness: the pld (primary linguistic data) are finite, whereas languages contain infinitely many sentences.

- (ii) Underdetermination: the pld are always compatible with infinitely many grammatical hypotheses.

- (iii) Degeneracy: the pld contain ungrammatical and incomplete sentences.

- (iv) Idiosyncrasy: different children learning the same language are exposed to different samples of sentences.

- (v) Positivity: the pld contain only positive instances (what is a sentence of the language to be learned, a.k.a. the ‘target language’).

- (vi) No Feedback: children are not told or rewarded when they get things right, and are not corrected when they make mistakes.

In this section, I will set aside features (i) and (ii) as being characteristic of any empirical domain: the data are always finite, and they always underdetermine one's theory. No doubt it's an important problem for epistemologists and philosophers of science to explain how general theories can nonetheless be confirmed and believed. No doubt, too, it's an important problem for psychologists to explain the mechanisms by which individuals acquire general knowledge about the world on the basis of their experience. But underdetermination and the finiteness of the data are everyone's problem: if these features of the language learning situation per se supported nativism, then we should accept that all learning, in every domain, requires inborn domain-specific knowledge. But while it's not impossible that everything we know that goes beyond the data is a result of our having domain-specific innate knowledge, this view is so implausible as to warrant no further discussion here.

I also set aside features (iii) and (iv). For one thing, it is unclear exactly how degenerate the pld are; according to one early estimate, an impressive 99.7% of utterances of mothers to their children are grammatically impeccable (Newport, Gleitman and Gleitman 1977). And even if the data are messier than this figure suggests, it is not unreasonable to suppose that the vast weight of grammatically well-formed utterances would easily swamp any residual noise. As to the idiosyncrasy of different children's data sets, this is not so much a matter of stimulus poverty as stimulus difference. As such, idiosyncrasy becomes a problem for a non-nativist only on the assumption that different children's states of linguistic knowledge differ from one another less than one would expect given the differences in their experiences. As far as I know, no serious case for this last claim has ever been made.[12]

In this section, we will focus on features (v) and (vi) of the pld. For it is consideration of the positivity of the data set, and the lack of feedback available to children, that has given rise to what I am calling the ‘Unlearning Problem,’ otherwise known (somewhat misleadingly) as the ‘Logical Problem of Language Acquisition.’ (For statements of the argument, see, e.g., Baker 1979; Lasnik 1989: 89-90; Pinker 1989.)

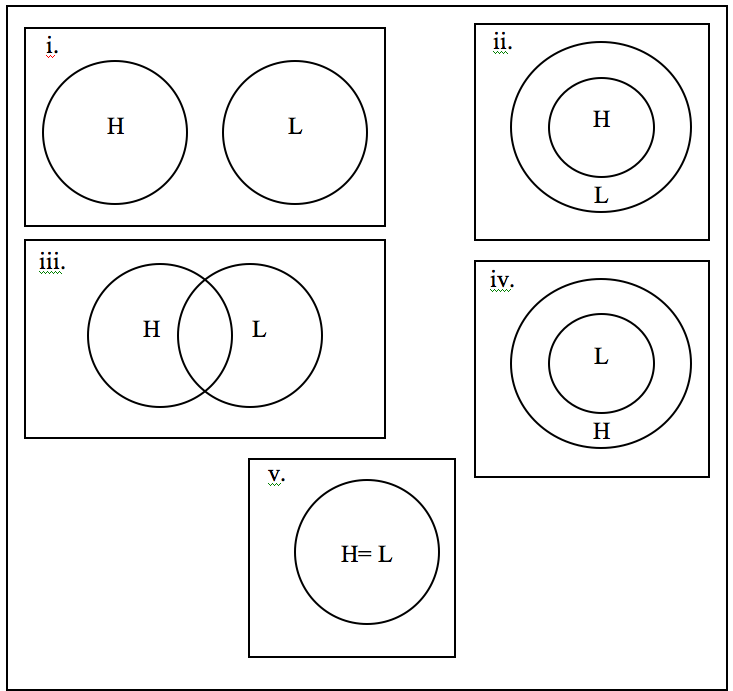

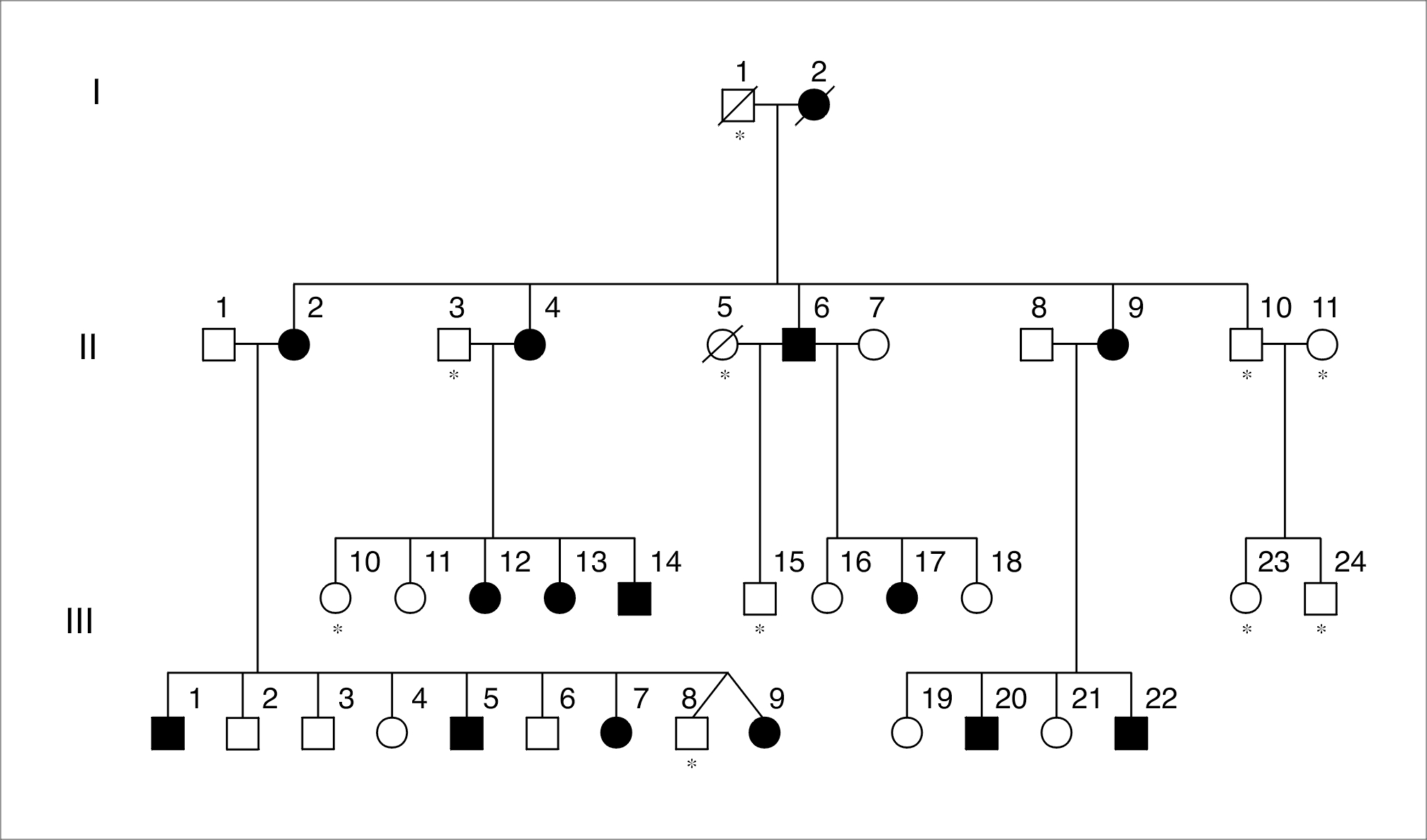

Figure 2. Five possible relations between the language generated by hypothesis (H) and the target grammar (L)

Take a child learning the grammar of her language, L. Figure 2 represents the five possible relations that might obtain between the language generated by her current hypothesis, H, and that generated by the target grammar, L. (v) represents the end point of the learning process: the learner has figured out the correct grammar for her language. A learner in situation (i), (ii) or (iii) is in good shape, for she can easily use the pld as a basis for correcting her hypothesis as follows: whenever she encounters a sentence in the data (i.e., a sentence of L) that is not generated by H, she has to ‘expand’ her hypothesis so that it generates that sentence. In this way, H will keep moving, as desired, towards L. However, suppose that the learner finds herself in situation (iv), where her hypothesis generates all of the target language, L, and more besides. (Children frequently find themselves in this position. For example, they invariably go through a phase in which they overgeneralize regular past tense verb endings to irregular verbs; their grammars generate the incorrect *I breaked it as well as the correct I broke it.) There, she is in deep trouble, for she cannot use the pld to discover her error. Every sentence of L, after all, is already a sentence of H. In order to ‘shrink’ her hypothesis (to ‘unlearn’ the rules that generate *I breaked it), she needs to know which sentences of H are not sentences of L: she needs to figure out that *I breaked it is not a sentence of English. But, and this is the problem, this kind of evidence, often called ‘negative evidence,’ is held to be unavailable to language learners.

For as we have seen, the pld are mostly just a sample of sentences, of positive instances of the target language. They contain little, if any, information about strings of words that are not sentences. For instance, children aren't given lists of ungrammatical strings. Nor are they typically corrected when they make mistakes. Nor can they simply assume that strings that haven't made their way into the sample are ungrammatical: there are infinitely many sentences that are absent from the data for the simple reason that no-one's had occasion to say them yet.

In sum: a child who is in situation (iv) — a child whose grammar ‘overgenerates’ — would need negative evidence in order to recover from her error. Negative evidence, however, does not appear to exist. Since children do manage to learn languages, they must never get themselves into situation (iv): they must never need to ‘unlearn’ any grammatical rules. There are two ways they could do this. One would be never to generalize beyond the data at all. But clearly, children do generalize, else they'd never succeed in learning a language. The other would be if there were something that ensured that when they generalize beyond the data, they don't overgeneralize, something, that is, that ensures that children don't make errors that they could only correct on the basis of negative evidence. According to the linguistic nativist, this something is innate knowledge of UG.
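The shape of the argument can be made concrete with a small sketch. It is only an illustration under strong simplifying assumptions, not a model of real acquisition: languages are treated as tiny finite sets of strings (real languages are infinite), and the learner's only move is to expand her hypothesis when the data contain a sentence it fails to generate. The names `expand_on_positive_data`, `learn`, and `TARGET`, and the sample sentences, are hypothetical.

```python
# Toy illustration of the 'Unlearning Problem' (assumption: a language is a
# small finite set of strings, and the learner sees positive data only).

def expand_on_positive_data(hypothesis: set, sentence: str) -> set:
    """Return the hypothesis, expanded if it fails to generate the sentence."""
    if sentence not in hypothesis:
        return hypothesis | {sentence}
    return hypothesis  # the sentence was already generated: nothing to revise


def learn(hypothesis: set, pld: list) -> set:
    """Run the expansion rule over every sentence in the data."""
    for sentence in pld:
        hypothesis = expand_on_positive_data(hypothesis, sentence)
    return hypothesis


# Hypothetical target language L and a sample of positive data drawn from it.
TARGET = {"I broke it", "I ate it", "I saw it"}
pld = ["I broke it", "I ate it", "I saw it", "I ate it"]

# A hypothesis that undergenerates: positive data push it towards L.
undergenerating = {"I broke it"}
final_under = learn(undergenerating, pld)

# A hypothesis that overgenerates, as in situation (iv): every sentence of L
# is already a sentence of H, so the data never trigger a revision.
overgenerating = TARGET | {"I breaked it"}
final_over = learn(overgenerating, pld)

print(final_under == TARGET)
print("I breaked it" in final_over)
```

On this toy learner, the undergenerating hypothesis converges on the target, while the overgenerating one is left exactly as it started. Nothing in the positive data ever conflicts with it, which is the predicament the argument describes.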

2.3.1 Criticisms of the ‘Unlearning’ Argument

2.3.1(a) What is lacking? Negative data vs. Negative Evidence

First, let's make a distinction between:

Negative Data: explicit information that a given string of words is not a sentence of the target language. (E.g., “No, that's not how you say it,” or “It's I broke it not I breaked it,” or “That string of words is ungrammatical,” etc.)

and

Negative Evidence: information that would enable a learner to tell that a given hypothesis is (very likely to be) incorrect. (See below for examples.)

Second, let's abandon the idea, which reappears in many presentations of the Argument from the Unlearning Problem, that learners' hypotheses must be explicitly falsified in the data in order to be rejected. Let's suppose instead that learners proceed more like actual scientists do: provisionally abandoning theories due to lack of confirmation, making theoretical inferences to link data with theories, employing statistical information, and making defeasible, probabilistic (rather than decisive, all-or-nothing) judgments as to the truth or falsity of their theories.[13]

Intuitively, viewing the learner as employing more stochastic and probabilistic inductive techniques enables one to see how the unlearning problem might have been overblown. What the argument claims, rightly, is that negative data near enough do not exist in the pld. However, what learners need in order to recover from overgeneralizations is not negative data per se, but negative evidence, and arguably, the pld do contain significant amounts of that. For example:

- Failures of understanding or communication: others' failures to understand children's linguistic productions (evidenced either by requests for repetition or by communicative failure) are evidence to the learner that there is something wrong with the rule(s) she was using to generate her utterance. This evidence is not decisive (maybe Granny just couldn't hear her properly), but it is negative evidence nonetheless.

- Non-occurrence of structural types as negative evidence: Suppose that a child's grammar predicted that a certain string is part of the target language. Suppose further that that string never appears in the data, even when the context seems appropriate. Proponents of the unlearning problem say that non-occurrence cannot constitute negative evidence: maybe Dad simply always chooses to say The girl who is in the jumping castle is Kayley's daughter, isn't she? rather than the auxiliary-fronted version, Is the girl who is in the jumping castle Kayley's daughter? If so, it would be a mistake for the child to conclude on the basis of this information that the latter string is ungrammatical.

But suppose that the child is predicting not strings of words, simpliciter, but rather strings of words under a certain syntactic description (or, perhaps more plausibly, quasi-syntactic description — the categories employed need not be the same as those employed in adult grammars).[14] This would enable her to make much better use of non-occurrence as negative evidence. For non-occurring strings will divide into two broad kinds: those whose structures have been encountered before in the data, and those whose structures have not been heard before. In the former case, the child has positive evidence that strings of that kind are grammatical, evidence that would enable her to suppose that the non-occurrence of that particular string was just an accident. (E.g., she could reason that since she's heard Is that mess that is on the floor in there yours? many times, and since that string has the same basic structure as Is that girl that's in the jumping castle Kayley's daughter?, the latter string is probably OK even though Dad chose not to say it.)

In the case in which the relevant form has never been encountered before in the data, however, the child is better off: the fact that she has never heard any utterance with the structure of *Is that girl who in the jumping castle is Kayley's daughter or *Is that mess that that on the floor in there is yours? is evidence that strings of that type are not sentences. Again, the evidence is not decisive, and the child should be prepared to revise her grammar should strings of that kind start appearing. Nonetheless, the non-occurrence of a string, suitably interpreted in the light of other linguistic information, can constitute negative evidence and provide learners with reason to reject overgeneral grammars.

- Positive evidence as negative evidence: Relatedly, learners can also exploit positive evidence as to which strings occur in the pld as a source of negative evidence, again in a tentative and revisable way.[15] Suppose that the child's grammar generated two sorts of string as appropriate in a given kind of context, but that only one sort was ever produced by those around her. The fact that only strings of the first kind occur is in this case negative evidence against the rule that generates the second kind. It is defeasible, to be sure, but it is negative evidence nonetheless.

In fact, the use of positive evidence to disconfirm hypotheses is endemic to science. For instance, Millikan used positive evidence to disconfirm the theory that electrical charge is a quantity that varies continuously. In his famous ‘Oil Drop’ experiment, he found that the amount of charge possessed by a charged oil drop was always a whole-number multiple of −(1.6 × 10⁻¹⁹) C. The finding that all observed charges were ‘quantized’ in this manner disconfirmed the competing ‘continuous charges’ hypothesis in the same way that positive evidence can disconfirm grammatical hypotheses.[16]
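One way to picture how positive evidence can do the work of negative evidence is a simple Bayesian comparison between two grammars. The sketch below is an illustration under stated assumptions, not a claim about how children actually compute. It assumes a ‘narrow’ grammar that generates only strings of kind A in the relevant context, a ‘broad’ grammar that generates kinds A and B with equal probability, and a learner who hears only A. The function name `posterior_narrow` and all the numbers are hypothetical.

```python
# Toy Bayesian sketch: hearing only A-type strings counts against a grammar
# that also generates B-type strings. (All probabilities are assumptions.)

def posterior_narrow(n_observations: int,
                     prior_narrow: float = 0.5,
                     p_a_under_broad: float = 0.5) -> float:
    """Posterior probability of the narrow grammar after hearing A n times.

    Narrow grammar: only A is generated, so P(A | narrow) = 1.
    Broad grammar: A and B are both generated, so P(A | broad) < 1.
    """
    likelihood_narrow = 1.0 ** n_observations
    likelihood_broad = p_a_under_broad ** n_observations
    evidence = (prior_narrow * likelihood_narrow
                + (1.0 - prior_narrow) * likelihood_broad)
    return prior_narrow * likelihood_narrow / evidence


# Confidence in the narrow grammar grows with each A-only observation,
# but never reaches certainty: the evidence remains defeasible.
for n in (0, 1, 5, 10):
    print(n, round(posterior_narrow(n), 4))
```

On these assumptions, each further occurrence of an A-type string shifts credence away from the overgeneral grammar, even though no string is ever marked as ungrammatical. A single B-type string would give the narrow grammar zero likelihood and reverse the verdict, which matches the tentative and revisable character of the inference.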

- Feedback: The Argument from the ‘Unlearning Problem’ also points to the lack of feedback provided to children learning language. In a famous study often cited by proponents of the argument, Brown and Hanlon (1970; see also Brown 1973 and Brown, Cazden, and Bellugi 1969) found no overt disapproval by mothers of the syntactic errors of their children, and moreover found that caregivers had no trouble understanding their charges' ill-formed utterances. Only semantic errors were occasionally corrected; grammatical mistakes went unremarked.

However, more recent findings have uncovered evidence indicating that failures of understanding occur with some regularity, and that there is a wealth of feedback about correct usage in the language-learning environment. For example:

- Hirsh-Pasek, Treiman and Schneiderman (1984) studied interactions between 2-year-olds and their parents, and discovered that caregivers repeated and corrected 20.8% of flawed sentences, whereas they only repeated (without correction) 12.0% of well-formed utterances.

- Demetras, Post and Snow (1986) found that in general, only well-formed sentences were repeated verbatim by parents, and that ill-formed sentences were not repeated verbatim, but were rather followed by clarification questions (“What?” — indicating a lack of understanding) or expansions and/or recasts, correcting the error.