Philosophy of Linguistics

Philosophy of linguistics is the philosophy of science as applied to linguistics. This differentiates it sharply from the philosophy of language, traditionally concerned with matters of meaning and reference.

As with the philosophy of other special sciences, there are general topics relating to matters like methodology and explanation (e.g., the status of statistical explanations in psychology and sociology, or the physics-chemistry relation in philosophy of chemistry), and more specific philosophical issues that come up in the special science at issue (simultaneity for philosophy of physics; individuation of species and ecosystems for the philosophy of biology). General topics of the first type in the philosophy of linguistics include:

- What the subject matter is,

- What the theoretical goals are,

- What form theories should take, and

- What counts as data.

Specific topics include issues in language learnability, language change, the competence-performance distinction, and the expressive power of linguistic theories.

There are also topics that fall on the borderline between philosophy of language and philosophy of linguistics: “linguistic relativity” (see the supplement on the linguistic relativity hypothesis in the Summer 2015 archived version of the entry on relativism), language vs. idiolect, speech acts (including the distinction between locutionary, illocutionary, and perlocutionary acts), the language of thought, implicature, and the semantics of mental states (see the entries on analysis, semantic compositionality, mental representation, pragmatics, and defaults in semantics and pragmatics). In these cases it is often the kind of answer given and not the inherent nature of the topic itself that determines the classification. Topics that we consider to be more in the philosophy of language than the philosophy of linguistics include intensional contexts, direct reference, and empty names (see the entries on propositional attitude reports, intensional logic, rigid designators, reference, and descriptions).

This entry does not aim to provide a general introduction to linguistics for philosophers; readers seeking that should consult a suitable textbook such as Akmajian et al. (2010) or Napoli (1996). For a general history of Western linguistic thought, including recent theoretical linguistics, see Seuren (1998). Newmeyer (1986) is useful additional reading for post-1950 American linguistics. Tomalin (2006) traces the philosophical, scientific, and linguistic antecedents of Chomsky’s magnum opus (1955/1956; published 1975), and Scholz and Pullum (2007) provide a critical review. Articles that have focused on the philosophical implications of generative linguistics include Ludlow (2011) and Rey (2020). For recent articles on the philosophy of linguistics more generally, Itkonen (2013) discusses various aspects of the field from its early Greek beginnings, Pullum (2019) details debates that have engaged philosophers from 1945 to 2015, and Nefdt (2019a) discusses connections with contemporary issues in the philosophy of science.

- 1. Three Approaches to Linguistic Theorizing: Externalism, Emergentism, and Essentialism

- 2. The Subject Matter of Linguistic Theories

- 3. Linguistic Methodology and Data

- 4. Language Acquisition

- 5. Language Evolution

- Bibliography

- Academic Tools

- Other Internet Resources

- Related Entries

1. Three Approaches to Linguistic Theorizing: Externalism, Emergentism, and Essentialism

The issues we discuss have been debated with vigor and sometimes venom. Some of the people involved have had famous exchanges in the linguistics journals, in the popular press, and in public forums. To understand the sharp disagreements between advocates of the approaches it may be useful to have a sketch of the dramatis personae before us, even if it is undeniably an oversimplification.

We see three tendencies or foci, divided by what they take to be the subject matter, the approach they advocate for studying it, and what they count as an explanation. We characterize them roughly in Table 1.

| | Externalists | Emergentists | Essentialists |
|---|---|---|---|
| Primary phenomena | Actual utterances as produced by language users | Facts of social cognition, interaction, and communication | Intuitions of grammaticality and literal meaning |
| Primary subject matter | Language use; structural properties of expressions and languages | Linguistic communication, cognition, variation, and change | Abstract universal principles that explain the properties of specific languages |
| Aim | To describe attested expression structure and interrelations, and to predict properties of unattested expressions | To explain structural properties of languages in terms of general cognitive mechanisms and communicative functions | To articulate universal principles and provide explanations for deep and cross-linguistically constant linguistic properties |
| Linguistic structure | A system of patterns, inferrable from generally accessible, objective features of language use | A system of constructions that range from fixed idiomatic phrases to highly abstract productive types | A system of abstract conditions that may not be evident from the experience of typical language users |
| Values | Accurate modeling of linguistic form that accords with empirical data and permits prediction concerning unconsidered cases | Cognitive, cultural, historical, and evolutionary explanations of phenomena found in linguistic communication systems | Highly abstract, covering-law explanations for properties of language as inferred from linguistic intuitions |
| Children’s language | A nascent form of language, very different from adult linguistic competence | A series of stages in an ontogenetic process of developing adult communicative competence | Very similar to adult linguistic competence though obscured by cognitive, articulatory, and lexical limits |
| What is acquired | A grasp of the distributional properties of the constituents of expressions of a language | A mainly conventional and culturally transmitted system for linguistic communication | An internalized generative device that characterizes an infinite set of expressions |

Table 1. Three Approaches to the Study of Language

A broad and varied range of distinct research projects can be pursued within any of these approaches; one advocate may be more motivated by some parts of the overall project than others are. So the tendencies should not be taken as sharply honed, well-developed research programs or theories. Rather, they provide background biases for the development of specific research programs—biases which sometimes develop into ideological stances or polemical programs or lead to the branching off of new specialisms with separate journals. In the judgment of Phillips (2010), “Dialog between adherents of different approaches is alarmingly rare.”

The names we have given these approaches are just mnemonic tags, not descriptions. The Externalists, for example, might well have been called ‘structural descriptivists’ instead, since they tend to be especially concerned to develop models that can be used to predict the structure of natural language expressions. The Externalists have long been referred to by Essentialists as ‘empiricists’ (and sometimes Externalists apply that term to themselves), though this is misleading (see Scholz and Pullum 2006: 60–63): the ‘empiricist’ tag comes with an accusation of denying the role of learning biases in language acquisition (see Matthews 1984, Laurence and Margolis 2001), but that is no part of the Externalists’ creed (see e.g. Elman 1993, Lappin and Shieber 2007).

Emergentists are also sometimes referred to by Essentialists as ‘empiricists’, but they either use the Emergentist label for themselves (Bates et al. 1998, O’Grady 2008, MacWhinney 2005) or call themselves ‘usage-based’ linguists (Barlow and Kemmer 2002, Tomasello 2003) or ‘construction grammarians’ (Goldberg 1995, Croft 2001). Newmeyer (1991), like Tomasello, refers to the Essentialists as ‘formalists’, because of their tendency to employ abstractions, and to use tools from mathematics and logic.

Despite these terminological inconsistencies, we can look at what typical members of each approach would say about their vision of linguistic science, and what they say about the alternatives. Many of the central differences between these approaches depend on what proponents consider to be the main project of linguistic theorizing, and what they count as a satisfying explanation.

Many researchers, perhaps most, mix elements from each of the three approaches. For example, if Emergentists are to explain the syntactic structure of expressions by appeal to facts about the nature of the use of symbols in human communication, then they will presuppose a great deal of Externalist work in describing linguistic patterns, and those Externalists who work on computational parsing systems frequently use (at least as a starting point) rule systems and ‘structural’ patterns worked out by Essentialists. Certainly, there is no logical impediment to a researcher with one tendency simultaneously pursuing another; these approaches are only general centers of emphasis.

1.1 The Externalists

If one assumes, with the Externalists, that the main goal of a linguistic theory is to develop accurate models of the structural properties of the speech sounds, words, phrases, and other linguistic items, then the clearly privileged information will include corpora (written and oral)—bodies of attested and recorded language use (suitably idealized). The goal is to describe how this public record exhibits certain (perhaps non-phenomenal) patterns that are projectable.

American structural linguistics of the 1920s to 1950s championed the development of techniques for using corpora as a basis for developing structural descriptions of natural languages, although such work was really not practically possible until the wide-spread availability of cheap, powerful, and fast computers. André Martinet (1960: 1) notes that one of the basic assumptions of structuralist approaches to linguistics is that “nothing may be called ‘linguistic’ that is not manifest or manifested one way or another between the mouth of the speaker and the ears of the listener”. He is, however, quick to point out that “this assumption does not entail that linguists should restrict their field of research to the audible part of the communication process—speech can only be interpreted as such, and not as so much noise, because it stands for something else that is not speech.”

American structuralists—Leonard Bloomfield in particular—were attacked, sometimes legitimately and sometimes illegitimately, by certain factions in the Essentialist tradition. For example, it was perhaps justifiable to criticize Bloomfield for adopting a nominalist ontology as popularized by the logical empiricists. But he was later attacked by Essentialists for holding anti-mentalist views about linguistics, when it is arguable that his actual view was that the science of linguistics should not commit itself to any particular psychological theory. (He had earlier been an enthusiast for the mentalist and introspectionist psychology of Wilhelm Wundt; see Bloomfield 1914.)

Externalism continues to thrive within computational linguistics, where the American structuralist vision of studying language through automatic analysis of corpora has enjoyed a recrudescence, and very large, computationally searchable corpora are being used to test hypotheses about the structure of languages (see Sampson 2001, chapter 1, for discussion).
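As a rough illustration of what searching a corpus for distributional patterns amounts to, the following Python sketch counts which words immediately follow a given word and retrieves the sentences in which a given word sequence is attested. The five-sentence corpus and the function names `following_words` and `attested` are invented here purely for illustration; actual corpus research relies on vastly larger bodies of recorded usage and on far more sophisticated tools.

```python
from collections import Counter

# Toy corpus: a handful of invented sentences standing in for a large,
# computationally searchable record of language use.
corpus = [
    "the guard checked my pass",
    "i showed my pass to the guard",
    "i showed the guard my pass",
    "she gave her husband an ipod",
    "she gave an ipod to her husband",
]


def following_words(corpus, target):
    """Count the words that immediately follow `target` in the corpus."""
    counts = Counter()
    for sentence in corpus:
        tokens = sentence.split()
        for current, nxt in zip(tokens, tokens[1:]):
            if current == target:
                counts[nxt] += 1
    return counts


def attested(corpus, sequence):
    """Return the sentences in which the token sequence occurs contiguously."""
    n = len(sequence)
    hits = []
    for sentence in corpus:
        tokens = sentence.split()
        if any(tokens[i:i + n] == sequence for i in range(len(tokens) - n + 1)):
            hits.append(sentence)
    return hits
```

In this toy corpus, `following_words(corpus, "showed")` records one occurrence each of my and the, which is the kind of distributional fact from which a structural description would be built up.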

1.2 The Emergentists

Emergentists aim to explain the capacity for language in terms of non-linguistic human capacities: thinking, communicating, and interacting. Edward Sapir expressed a characteristic Emergentist theme when he wrote:

Language is primarily a cultural or social product and must be understood as such… It is peculiarly important that linguists, who are often accused, and accused justly, of failure to look beyond the pretty patterns of their subject matter, should become aware of what their science may mean for the interpretation of human conduct in general. (Sapir 1929: 214)

The “pretty patterns” derided here are characteristic of structuralist analyses. Sociolinguistics, which is much closer in spirit to Sapir’s project, studies the influence of social and linguistic structure on each other. One particularly influential study, Labov (1966), examines the influence of social class on language variation. Other sociolinguists examine the relation between status within a group and linguistic innovation (Eckert 1989). This interest in variation within languages is characteristic of Emergentist approaches to the study of language.

Another kind of Emergentist, like Tomasello (2003), will stress the role of theory of mind and the capacity to use symbols to change conspecifics’ mental states as uniquely human preadaptations for language acquisition, use, and invention. MacWhinney (2005) aims to explain linguistic phenomena (such as phrase structure and constraints on long distance dependencies) in terms of the way conversation facilitates accurate information-tracking and perspective-switching.

Functionalist research programs generally fall within the broad tendency to approach the study of language as an Emergentist. According to one proponent:

The functionalist view of language [is] as a system of communicative social interaction… Syntax is not radically arbitrary, in this view, but rather is relatively motivated by semantic, pragmatic, and cognitive concerns. (Van Valin 1991, quoted in Newmeyer 1991: 4; emphasis in original)

And according to Russ Tomlin, a linguist who takes a functionalist approach:

Syntax is not autonomous from semantics or pragmatics…the rejection of autonomy derives from the observation that the use of particular grammatical forms is strongly linked, even deterministically linked, to the presence of particular semantic or pragmatic functions in discourse. (Tomlin 1990, quoted in Newmeyer 1991: 4)

The idea that linguistic form is autonomous, and more specifically that syntactic form (rather than, say, phonological form) is autonomous, is a characteristic theme of the Essentialists. And the claims of Van Valin and Tomlin to the effect that syntax is not independent of semantics and pragmatics might tempt some to think that Emergentism and Essentialism are logically incompatible. But this would be a mistake, since there are a large number of nonequivalent autonomy of form theses.

Even in the context of trying to explain what the autonomy thesis is, Newmeyer (1991: 3) talks about five formulations of the thesis, each of which can be found in some Essentialists’ writings, without (apparently) realizing that they are non-equivalent. One is the relatively strong claim that the central properties of linguistic form must not be defined with essential reference to “concepts outside the system”, which suggests that no primitives in linguistics could be defined in psychological or biological terms. Another takes autonomy of form to be a normative claim: that linguistic concepts ought not to be defined or characterized in terms of non-linguistic concepts. The third and fourth versions are ontological: one denies that central linguistic concepts should be ontologically reduced to non-linguistic ones, and the other denies that they can be. And in the fifth version the autonomy of syntax is taken to deny that syntactic patterning can be explained in terms of meaning or discourse functions.

For each of these versions of autonomy, there are Essentialists who agree with it. Probably the paradigmatic Essentialist agrees with them all. But Emergentists need not disagree with them all. Paradigmatic functionalists like Tomlin, Van Valin and MacWhinney could in principle hold that the explanation of syntactic form, for example, will ultimately be in terms of discourse functions and semantics, but still accept that syntactic categories cannot be reduced to non-linguistic ones.

1.3 The Essentialists

If Leonard Bloomfield is the intellectual ancestor of Externalism, and Sapir the father of Emergentism, then Noam Chomsky is the intellectual ancestor of Essentialism. The researcher with predominantly Essentialist inclinations aims to identify the intrinsic properties of language that make it what it is. For a huge majority of practitioners of this approach—researchers in the tradition of generative grammar associated with Chomsky—this means postulating universals of human linguistic structure, unlearned but tacitly known, that permit and assist children to acquire human languages. This generative Essentialism has a preference for finding surprising characteristics of languages that cannot be inferred from the data of usage, and are not predictable from human cognition or the requirements of communication.

Rather than being impressed with language variation, as are Emergentists and many Externalists, the generative Essentialists are extremely impressed with the idea that very young children of almost any intelligence level, and just about any social upbringing, acquire language to the same high degree of mastery. From this it is inferred that there must be unlearned features shared by all languages that somehow assist in language acquisition.

A large number of contemporary Essentialists who follow Chomsky’s teaching on this matter claim that semantics and pragmatics are not a central part of the study of language. In Chomsky’s view, “it is possible that natural language has only syntax and pragmatics” (Chomsky 1995: 26); that is, only “internalist computations and performance systems that access them”; semantic theories are merely “part of an interface level” or “a form of syntax” (Chomsky 1992: 223).

Thus, while Bloomfield understood it to be a sensible practical decision to assign semantics to some field other than linguistics because of the underdeveloped state of semantic research, Chomsky appears to think that semantics as standardly understood is not part of the essence of the language faculty at all. (In broad outline, this exclusion of semantics from linguistics comports with Sapir’s view that form is linguistic but content is cultural.)

Although Chomsky is an Essentialist in his approach to the study of language, excluding semantics as a central part of linguistic theory clearly does not follow from linguistic Essentialism (Katz 1980 provides a detailed discussion of Chomsky’s views on semantics). Today there are many Essentialists who do hold that semantics is a component of a full linguistic theory.

For example, many linguists today are interested in the syntax-semantics interface: the relationship between the surface syntactic structure of sentences and their semantic interpretation. This area of interest is generally quite alien to philosophers who are primarily concerned with semantics only, and it falls outside of Chomsky’s syntactocentric purview as well. Linguists who work in the kind of semantics initiated by Montague (1974) certainly focus on the essential features of language (most of their findings appear to be of universal import rather than limited to the semantic rules of specific languages). Useful works to consult for a sense of the modern style of investigation of the syntax-semantics interface include Partee (1975), Jacobson (1996), Szabolcsi (1997), Chierchia (1998), and Steedman (2000).

1.4 Comparing the three approaches

The discussion so far has been at a rather high level of abstraction. It may be useful to contrast the three tendencies by looking at how they each would analyze a particular linguistic phenomenon. We have selected the syntax of double-object clauses like Hand the guard your pass (also called ditransitive clauses), in which the verb is immediately followed by a sequence of two noun phrases, the first typically denoting a recipient and the second something transferred. For many such clauses there is an alternative way of expressing roughly the same thing: for Hand the guard your pass there is the alternative Hand your pass to the guard, in which the verb is followed by a single object noun phrase and the recipient is expressed after that by a preposition phrase with to. We will call these recipient-PP clauses.

1.4.1 A typical Essentialist analysis

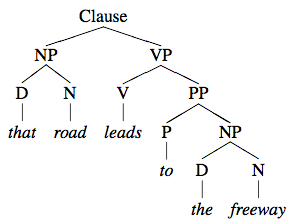

Larson (1988) offers a generative Essentialist approach to the syntax of double-object clauses. To give even a rough outline of his proposals, it is useful to draw on tree diagrams of syntactic structure. A tree is a mathematical object consisting of a set of points called nodes between which certain relations hold. The nodes correspond to syntactic units; left-right order on the page corresponds to temporal order of utterance between them; and upward connecting lines represent the relation ‘is an immediate subpart of’. Nodes are labeled to show categories of phrases and words, such as noun phrase (NP); preposition phrase (PP); and verb phrase (VP). When the internal structure of some subpart of a tree is unimportant to the topic under discussion, it is customary to mask that part with an empty triangle. Consider a simple example: an active transitive clause like (A.i) and its passive equivalent (A.ii).

- (A)
  - i. The guard checked my pass. [active clause]
  - ii. My pass was checked by the guard. [passive clause]

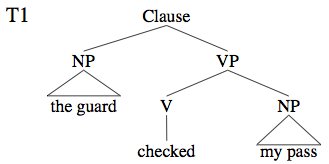

A tree structure for (A.i) is shown in (T1).
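The notion of a tree described above can be made concrete in a few lines of Python. The sketch below is only illustrative: the class name `Node`, the helper `phrase`, the root label "Clause", and the particular bracketing chosen for the active clause in (A) are assumptions made here, and the diagram (T1) may show more or different nodes.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    label: str                                   # a category (NP, VP, V) or a word
    children: List["Node"] = field(default_factory=list)

    def words(self):
        """Leaves in left-to-right order, i.e. temporal order of utterance."""
        if not self.children:
            return [self.label]
        result = []
        for child in self.children:
            result.extend(child.words())
        return result

    def immediate_subparts(self):
        """Labels of the nodes standing in the 'immediate subpart' relation."""
        return [child.label for child in self.children]


def phrase(label, *words):
    """Build a node whose children are the given words (hypothetical helper)."""
    return Node(label, [Node(word) for word in words])


# Assumed simplified structure for "The guard checked my pass";
# "Clause" is a placeholder label, not taken from the source diagram.
t1 = Node("Clause", [
    phrase("NP", "The", "guard"),                # subject position
    Node("VP", [
        phrase("V", "checked"),
        phrase("NP", "my", "pass"),              # direct object position
    ]),
])
```

Here `t1.words()` recovers the word order of the clause, while `t1.immediate_subparts()` lists the categories directly below the root.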

In analyses of the sort Larson exemplifies, the structure of an expression is given by a derivation, which consists of a sequence of successively modified trees. Larson calls the earliest ones underlying structures. The last (and least abstract) in the derivation is the surface structure, which captures properties relevant to the way the expression is written and pronounced. The underlying structures are posited in order to better identify syntactic generalizations. They are related to surface structures by a series of operations called transformations (which generative Essentialists typically regard as mentally real operations of the human language faculty).

One of the fundamental operations that a transformation can effect is movement, which involves shifting a part of the syntactic structure of a tree to another location within it. For example, it is often claimed that passive clauses have very much the same kinds of underlying structures as the synonymous active clauses, and thus a passive clause like (A.ii) would have an underlying structure much like (T1). A movement transformation would shift the guard toward the end of the clause (and add by), and another would shift my pass into the position before the verb. In other words, passive clauses look much more like their active counterparts in underlying structure than they do in surface structure.

In a similar way, Larson proposes that a double-object clause like (B.ii) has the same underlying structure as (B.i).

- (B)
  - i. I showed my pass to the guard. [recipient-PP]
  - ii. I showed the guard my pass. [double object]

Moreover, he proposes that the transformational operation of deriving the surface structure of (B.ii) from the underlying structure of (B.i) is essentially the same as the one that derives the surface structure of (A.ii) from the underlying structure of (A.i).

Larson adopts many assumptions from Chomsky (1981) and subsequent work. One is that all NPs have to be assigned Case in the course of a derivation. (Case is an abstract syntactic property, only indirectly related to the morphological case forms displayed by nominative, accusative, and genitive pronouns. Objective Case is assumed to be assigned to any NP in direct object position, e.g., my pass in (T1), and Nominative Case is assigned to an NP in the subject position of a tensed clause, e.g., the guard in (T1).)

He also makes two specific assumptions about the derivation of passive clauses. First, Case assignment to the position immediately after the verb is “suppressed”, which entails that the NP there will not get Case unless it moves to some other position. (The subject position is the obvious one, because there it will receive Nominative Case.) Second, there is an unusual assignment of semantic role to NPs: instead of the subject NP being identified as the agent of the action the clause describes, that role is assigned to an adjunct at the end of the VP (the by-phrase in (A.ii); an adjunct is a constituent with an optional modifying role in its clause rather than a grammatically obligatory one like subject or object).

Larson proposes that both of these points about passive clauses have analogs in the structure of double-object VPs. First, Case assignment to the position immediately after the verb is suppressed; and since Larson takes the preposition to to be the marker of Case, this means in effect that to disappears. This entails that the NP after to will not get Case unless it moves to some other position. Second, there is an unusual assignment of semantic role to NPs: instead of the direct object NP being identified as the entity affected by the action the clause describes, that role is assigned to an adjunct at the end of the VP.

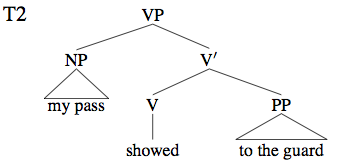

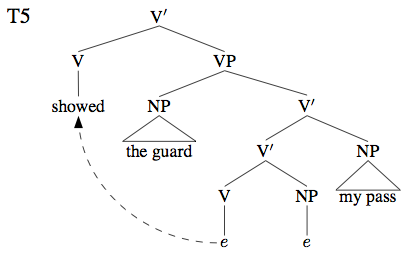

Larson makes some innovative assumptions about VPs. First, he proposes that in the underlying structure of a double-object clause the direct object precedes the verb, the tree diagram being (T2).

This does not match the surface order of words (showed my pass to the guard), but it is not intended to: it is an underlying structure. A transformation will move the verb to the left of my pass to produce the surface order seen in (B.i).

Second, he assumes that there are two nodes labeled VP in a double-object clause, and two more labeled V′, though there is only one word of the verb (V) category. (Only the smaller VP and V′ are shown in the partial structure (T2).)
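The idea of a derivation as a sequence of trees related by movement can be sketched in Python as follows. This is a toy under stated assumptions, not Larson's formalism: trees are nested lists whose first element is the category label, the upper VP and V′ with an initially empty V position are filled in here by assumption (the partial structure (T2) shows only the lower VP), the internal structure of the PP is flattened, and the names `get`, `move`, and `words` are hypothetical.

```python
import copy

EMPTY = "e"  # marks a position that is unfilled or has been vacated by movement


def get(tree, path):
    """Follow a path of child indices down from the root."""
    for index in path:
        tree = tree[index]
    return tree


def move(tree, source, target):
    """Return a new tree in which the item at `source` fills the empty
    position at `target`, leaving EMPTY behind at `source`."""
    new_tree = copy.deepcopy(tree)
    if get(new_tree, target) != EMPTY:
        raise ValueError("target position is not empty")
    item = get(new_tree, source)
    get(new_tree, target[:-1])[target[-1]] = item
    get(new_tree, source[:-1])[source[-1]] = EMPTY
    return new_tree


def words(tree):
    """Pronounced words in left-to-right order, skipping empty positions."""
    if isinstance(tree, str):
        return [] if tree == EMPTY else [tree]
    result = []
    for child in tree[1:]:          # tree[0] is the category label
        result.extend(words(child))
    return result


# Assumed underlying structure: the direct object precedes the verb.
underlying = ["VP", ["V'", ["V", EMPTY],
                     ["VP", ["NP", "my", "pass"],
                            ["V'", ["V", "showed"],
                                   ["PP", "to", "the", "guard"]]]]]

# A derivation is a sequence of successively modified trees.
derivation = [underlying, move(underlying, (1, 2, 2, 1, 1), (1, 1, 1))]
```

Reading off `words` for the first and last trees of `derivation` gives the underlying order (my pass showed to the guard) and the surface order (showed my pass to the guard) respectively.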

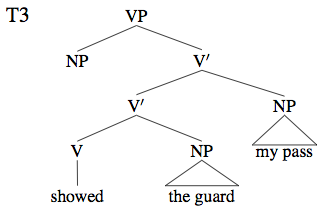

What is important here is that (T2) is the basis for the double-object surface structure as well. To produce that, the preposition to is erased and an additional NP position (for my pass) is attached to the V′, thus:

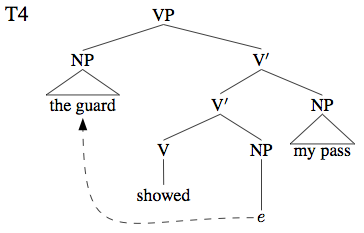

The additional NP is assigned the affected-entity semantic role. The other NP (the guard) does not yet have Case; but Larson assumes that it moves into the NP position before the verb. The result is shown in (T4), where ‘e’ marks the empty string left where some words have been moved away:

Larson assumes that in this position the guard can receive Case. What remains is for the verb to move into a higher V position further to its left, to obtain the surface order:

The complete sequence of transformations is taken to give a deep theoretical explanation of many properties of (B.i) and (B.ii), including such things as what could be substituted for the two NPs, and the fact that there is at least rough truth-conditional equivalence between the two clauses.

The reader with no previous experience of generative linguistics will have many questions about the foregoing sketch (e.g., whether it is really necessary to have the guard after showed in (T3), then the opposite order in (T4), and finally the same order again in (T5)). We cannot hope to answer such questions here; Larson’s paper is extremely rich in further assumptions, links to the previous literature, and additional classes of data that he aims to explain. But the foregoing should suffice to convey some of the flavor of the analysis.

The key point to note is that Essentialists seek underlying symmetries and parallels whose operation is not manifest in the data of language use. For Essentialists, there is positive explanatory virtue in hypothesizing abstract structures that are very far from being inferrable from performance; and the posited operations on those structures are justified in terms of elegance and formal parallelism with other analyses, not through observation of language use in communicative situations.

1.4.2 A typical Emergentist analysis

Many Emergentists are favorably disposed toward the kind of construction grammar expounded in Goldberg (1995). We will use her work as an exemplar of the Emergentist approach. The first thing to note is that Goldberg does not take double-object clauses like (B.ii) to be derived alternants of recipient-PP structures like (B.i), the way Larson does. So she is not looking for a regular syntactic operation that can relate their derivations; indeed, she does not posit derivations at all. She is interested in explaining correlations between syntactic, semantic, and pragmatic aspects of clauses; for example, she asks this question:

How are the semantics of independent constructions related such that the classes of verbs associated with one overlap with the classes of verbs associated with another? (Goldberg 1995: 89)

Thus she aims to explain why some verbs occur in both the double-object and recipient-PP kinds of expression and some do not.

The fundamental notion in Goldberg’s linguistic theory is that of a construction. A construction can be defined very roughly as a way of structurally composing words or phrases—a sort of template—for expressing a certain class of meanings. Like Emergentists in general, Goldberg regards linguistic theory as continuous with a certain part of general cognitive psychological theory; linguistics emerges from this more general theory, and linguistic matters are rarely fully separate from cognitive matters. So a construction for Goldberg has a mental reality: it corresponds to a generalized concept or scenario expressible in a language, annotated with a guide to the linguistic structure of the expression.

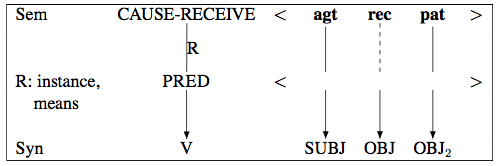

Many words will be trivial examples of constructions: a single concept paired with a way of pronouncing and some details about grammatical restrictions (category, inflectional class, etc.); but constructions can be much more abstract and internally complex. The double-object construction, which Goldberg calls the Ditransitive Construction, is a moderately abstract and complex one; she diagrams it thus (p. 50):

This expresses a set of constraints on how to use English to communicate the idea of a particular kind of scenario. The scenario involves a ternary relation CAUSE-RECEIVE holding between an agent (agt), a recipient (rec), and a patient (pat). PRED is a variable that is filled by the meaning of a particular verb when it is employed in this construction.

The solid vertical lines downward from agt and pat indicate that for any verb integrated into this construction it is required that its subject NP should express the agent participant, and the direct object (OBJ2) should express the patient participant. The dashed vertical line downward from rec signals that the first object (OBJ) may express the recipient but it does not have to—the necessity of there being a recipient is a property of the construction itself, and not every verb demands that it be made explicit who the recipient is. But if there are two objects, the first is obligatorily associated with the recipient role: We sent the builder a carpenter can only express a claim about the sending of a carpenter over to the builder, never the sending of the builder over to where a carpenter is.

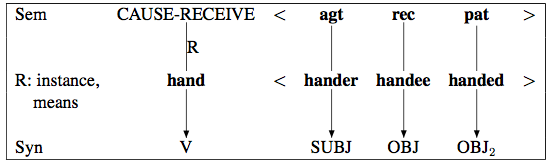

When a particular verb is used in this construction, it may have obligatory accompanying NPs denoting what Goldberg calls “profiled participants” so that the match between the participant roles (agt, rec, pat) is one-to-one, as with the verb hand. When this verb is used, the agent (‘hander’), recipient (‘handee’), and item transferred (‘handed’) must all be made explicit. Goldberg gives the following diagram of the “composite structure” that results when hand is used in the construction:

Because of this requirement of explicit presence, Hand him your pass is grammatical, but *Hand him is not, and neither is *Hand your pass. The verb send, on the other hand, illustrates the optional syntactic expression of the recipient role: we can say Send a text message, which is understood to involve some recipient but does not make the recipient explicit.
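The contrast between hand and send can be sketched as a small data structure in Python. This is a deliberately crude model, not Goldberg's notation: only the two object roles (rec and pat) are tracked, the agent is left out because the examples are imperatives, and the names `VerbEntry`, `licensed`, and `two_object_roles` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerbEntry:
    name: str
    obligatory: frozenset               # roles that must be made explicit
    optional: frozenset = frozenset()   # roles that may stay implicit


# Assumed toy entries based on the examples in the text.
HAND = VerbEntry("hand", obligatory=frozenset({"rec", "pat"}))
SEND = VerbEntry("send", obligatory=frozenset({"pat"}),
                 optional=frozenset({"rec"}))


def licensed(verb, expressed_roles):
    """True if every obligatory role is expressed and nothing else
    is expressed beyond the verb's obligatory and optional roles."""
    expressed = frozenset(expressed_roles)
    return verb.obligatory <= expressed <= (verb.obligatory | verb.optional)


def two_object_roles(first_object, second_object):
    """With two objects, the first is the recipient and the second the patient."""
    return {"rec": first_object, "pat": second_object}
```

On this model `licensed(HAND, {"rec"})` and `licensed(HAND, {"pat"})` both fail, mirroring *Hand him and *Hand your pass, whereas `licensed(SEND, {"pat"})` succeeds, mirroring Send a text message.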

The R notation relates to the fact that particular verbs may express either an instance of causing someone to receive something, as with hand, or a means of causing someone to receive something, as with kick: what Joe kicked Bill the ball means is that Joe caused Bill to receive the ball by means of a kicking action.

Goldberg’s discussion covers many subtle ways in which the scenario communicated affects whether the use of a construction is grammatical and appropriate. For example, there is something odd about ?Joe kicked Bill the ball he was trying to kick to Sam: the Ditransitive Construction seems best suited to cases of volitional transfer (rather than transfer as an unexpected side effect of a blunder). However, an exception is provided by a class of cases in which the transfer is not of a physical object but is only metaphorical: That guy gives me the creeps does not imply any volitional transfer of a physical object.

Metaphorical cases are distinguished from physical transfers in other ways as well. Goldberg notes sentences like The music lent the event a festive air, where the music is subject of the verb lend despite the fact that music cannot literally lend anything to anyone.

Goldberg discusses many topics such as metaphorical extension, shading, metonymy, cutting, role merging, and also presents various general principles linking meanings and constructions. One of these principles, the No Synonymy Principle, says that no two syntactically distinct constructions can be both semantically and pragmatically synonymous. It might seem that if any two sentences are synonymous, pairs like this are:

- (C)

  - i. She gave her husband an iPod. [double object]

  - ii. She gave an iPod to her husband. [recipient-PP]

Yet the two constructions cannot be fully synonymous, both semantically and pragmatically, if the No Synonymy Principle is correct. And to support the principle, Goldberg notes purported contrasts such as this:

- (D)

  - i. She gave her husband a new interest in music. [double object]

  - ii. ?She gave a new interest in music to her husband. [recipient-PP]

There is a causation-as-transfer metaphor here, and it seems to be compatible with the double object construction but not with the recipient-PP. So (in Goldberg’s view) the two are not fully synonymous.

It is no part of our aim here to provide a full account of the content of Goldberg’s discussion of double-object clauses. But what we want to highlight is that the focus is not on finding abstract elements or operations of a purely syntactic nature that are candidates for being essential properties of language per se. The focus for Emergentists is nearly always on the ways in which meaning is conveyed, the scenarios that particular constructions are used to communicate, and the aspects of language that connect up with psychological topics like cognition, perception, and conceptualization.

1.4.3 A typical Externalist analysis

One kind of work that is representative of the Externalist tendency is nicely illustrated by Bresnan et al. (2007) and Bresnan and Ford (2010). Bresnan and her colleagues defend the use of corpora (bodies of attested written and spoken texts). One of their findings is that a number of types of expressions that linguists have often taken to be ungrammatical do in fact turn up in actual use. Essentialists and Emergentists alike have often, purely on the basis of intuition, asserted that sentences like John gave Mary a kiss are grammatical but sentences like John gave a kiss to Mary are not, as we see above with Goldberg’s (D)(ii). Bresnan and her colleagues find numerous occurrences of the latter sort on the World Wide Web, and conclude that they are not ungrammatical or even unacceptable, but merely dispreferred.

Bresnan and colleagues used a three-million-word collection of recorded and transcribed spontaneous telephone conversations known as the Switchboard corpus to study the double-object and recipient-PP constructions. They first annotated the utterances with indications of a number of factors that they thought might influence the choice between the double-object and recipient-PP constructions:

- Discourse accessibility of NPs: does a particular NP refer to something already mentioned, or to something new to the discourse?

- Relative lengths of NPs: what is the difference in number of words between the recipient NP and the transferred-item NP?

- Definiteness: are the recipient and transferred-item NPs definite like the bishop or indefinite like some members?

- Animacy: do the recipient and transferred-item NPs denote animate beings or inanimate things?

- Pronominality: are the recipient and transferred-item NPs pronouns?

- Number: are the recipient and transferred-item NPs singular or plural?

- Person: are the recipient and transferred-item NPs first-person or second-person pronouns, or third person?

They also coded the verb meanings by assigning them to five semantic categories:

- Abstract senses (give it some thought);

- Transfer of possession (give him an armband);

- Future transfer of possession (I owe you a dollar);

- Prevention of possession (They denied me my rights);

- Communication verb sense (tell me your name).

They then constructed a statistical model of the corpus: a mathematical formula expressing, for each combination of the factors listed above, the ratio of the probabilities of the double object and the recipient-PP. (To be precise, they used the natural logarithm of the ratio of p to 1 − p, where p is the probability of a double-object or recipient-PP in the corpus being of the double-object form.) They then used logistic regression to estimate the model’s parameters from the data.
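
The log-odds quantity just described can be made concrete with a minimal sketch. It is not the authors' model: the factor names and coefficient values below are hypothetical, chosen only to show how a weighted sum of coded factors is mapped to a probability of the double-object form.

```python
import math


def log_odds(p: float) -> float:
    """Natural logarithm of the ratio of p to 1 - p, for 0 < p < 1."""
    return math.log(p / (1.0 - p))


def inverse_log_odds(z: float) -> float:
    """Logistic function: maps a log-odds value back to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def predicted_probability(intercept, weights, features):
    """Probability of the double-object form under a logistic model.

    The log-odds are modeled as an intercept plus a weighted sum of
    the coded factors; the logistic function converts them to p.
    """
    z = intercept + sum(weights[name] * value for name, value in features.items())
    return inverse_log_odds(z)


# Hypothetical factor names and coefficients, for illustration only;
# they are not taken from Bresnan et al. (2007).
example_weights = {"recipient_is_pronoun": 1.5, "item_is_pronoun": -2.0}
example_features = {"recipient_is_pronoun": 1, "item_is_pronoun": 0}
p_double_object = predicted_probability(0.0, example_weights, example_features)
```

With these made-up values a pronominal recipient pushes the predicted probability of the double-object form above one half, which is the general shape of effect such a model can express.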

To determine how well the model generalized to unseen data, they divided the data randomly 100 times into a training set and a testing set, fit the model parameters on each training set, and scored its predictions on the unseen testing set. The average percent of correct predictions on unseen data was 92%. All components of the model except number of the recipient NP made a statistically significant difference—almost all at the 0.001 level.
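
The evaluation procedure described above (repeated random division into training and testing sets, with scoring on the unseen part) can be sketched as follows. Several things here are assumptions made for illustration: the data are synthetic, the 25% test fraction is arbitrary, and a simple majority-table classifier stands in for the logistic regression model.

```python
import random
from collections import Counter, defaultdict


def fit_majority_table(training):
    """Learn, for each feature combination, its most frequent outcome."""
    counts = defaultdict(Counter)
    for features, outcome in training:
        counts[features][outcome] += 1
    # Fallback for feature combinations never seen in training.
    overall = Counter(outcome for _, outcome in training).most_common(1)[0][0]
    table = {f: c.most_common(1)[0][0] for f, c in counts.items()}
    return table, overall


def accuracy(model, testing):
    """Proportion of test items whose outcome the model predicts correctly."""
    table, fallback = model
    correct = sum(1 for f, o in testing if table.get(f, fallback) == o)
    return correct / len(testing)


def repeated_split_accuracy(data, n_splits=100, test_fraction=0.25, seed=0):
    """Average accuracy on held-out data over repeated random splits."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_splits):
        shuffled = data[:]
        rng.shuffle(shuffled)
        cut = int(len(shuffled) * test_fraction)
        testing, training = shuffled[:cut], shuffled[cut:]
        scores.append(accuracy(fit_majority_table(training), testing))
    return sum(scores) / len(scores)


# Synthetic toy data (not corpus data): one coded factor per utterance.
toy_data = (
    [(("pronoun_recipient",), "double_object")] * 45
    + [(("pronoun_recipient",), "recipient_pp")] * 5
    + [(("full_np_recipient",), "recipient_pp")] * 40
    + [(("full_np_recipient",), "double_object")] * 10
)
mean_accuracy = repeated_split_accuracy(toy_data)
```

The point of scoring only on the testing set is that a model is credited for generalizing to data it was not fitted on, not for memorizing the training items.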

What this means is that, knowing only the presence or absence of the sort of factors listed above, they were able to predict reliably whether double-object or recipient-PP structures would be used in a given context, with a 92% accuracy rate.

The implication is that the two kinds of structure are not interchangeable: they are reliably differentiated by the presence of other factors in the texts in which they occur.

They then took the model they had generated for the telephone speech data and applied it to a corpus of written material: the Wall Street Journal corpus (WSJ), a collection of 1987–9 newspaper copy, only roughly edited. The main relevant difference with written language is that the language producer has more opportunity to reflect thoughtfully on how they are going to phrase things. It was reasonable to think that a model based on speech data might not transfer well. But instead the model had 93.5% accuracy. The authors conclude that “the model for spoken English transfers beautifully to written”. The main difference between the corpora was found to be a slightly higher probability of the recipient-PP structure in written English.

In a very thorough subsequent study, Bresnan and Ford (2010) show that the results also correlate with native speakers’ metalinguistic judgments of naturalness for sentence structures, and with lexical decision latencies (speed of deciding whether the words in a text were genuine English words or not), and with a sentence completion task (choosing the most natural of a list of possible completions of a partial sentence). The results of these experiments confirmed that their model predicted participants’ performance.

Among the things to note about this work is that it was all done on directly recorded performance data: transcripts of people speaking to each other spontaneously on the phone in the case of the Switchboard corpus, stories as written by newspaper journalists in the case of WSJ, measured responses of volunteer subjects in a laboratory in the case of the psycholinguistic experiments of Bresnan and Ford (2010). The focus is on identifying the factors in linguistic performance that permit accurate prediction of future performance, and the methods of investigation have a replicability and checkability that is familiar in the natural sciences.

However, we should make it clear that the work is not some kind of close-to-the-ground collecting and classifying of instances. The models that Bresnan and her colleagues develop are sophisticated mathematical abstractions, very far removed from the records of utterance tokens. They claim that these models “allow linguistic theory to solve more difficult problems than it has in the past, and to build convergent projects with psychology, computer science, and allied fields of cognitive science” (Bresnan et al. 2007: 69).

1.4.4 Conclusion

It is important to see that the contrast we have drawn here is not just between three pieces of work that chose to look at different aspects of the phenomena associated with double-object sentences. It is true that Larson focuses more on details of tree structure, Goldberg more on subtle differences in meaning, and Bresnan et al. on frequencies of occurrence. But that is not what we are pointing to. What we want to stress is that we are illustrating three different broad approaches to language that regard different facts as likely to be relevant, and make different assumptions about what needs to be accounted for, and what might count as an explanation.

Larson looks at contrasts between different kinds of clause with different meanings and sees evidence of abstract operations affecting subtle details of tree structure, and parallelism between derivational operations formerly thought distinct.

Goldberg looks at the same facts and sees evidence not for anything to do with derivations but for the reality of specific constructions—roughly, packets of syntactic, semantic, and pragmatic information tied together by constraints.

Bresnan and her colleagues see evidence that readily observable facts about speaker behavior and frequency of word sequences correlate closely with certain lexical, syntactic, and semantic properties of words.

Nothing precludes defenders of any of the three approaches from paying attention to any of the phenomena that the other approaches attend to. There is ample opportunity for linguists to mix aspects of the three approaches in particular projects. But in broad outline there are three different tendencies exhibited here, with stereotypical views and assumptions roughly as we laid them out in Table 1.

2. The Subject Matter of Linguistic Theories

The complex and multi-faceted character of linguistic phenomena means that the discipline of linguistics has a whole complex of distinguishable subject matters associated with different research questions. Among the possible topics for investigation are these:

- (i) the capacity of humans to acquire, use, and invent languages;

- (ii) the abstract structural patterns (phonetic, morphological, syntactic, or semantic) found in a particular language under some idealization;

- (iii) systematic structural manifestations of the use of some particular language;

- (iv) the changes in a language or among languages across time;

- (v) the psychological functioning of individuals who have successfully acquired particular languages;

- (vi) the psychological processes underlying speech or linguistically mediated thinking in humans;

- (vii) the evolutionary origin of (i) and/or (ii).

There is no reason for all of the discipline of linguistics to converge on a single subject matter, or to think that the entire field of linguistics cannot have a diverse range of subject matters. To give a few examples:

- The influential Swiss linguist Ferdinand de Saussure (1916) distinguished between langue, a socially shared set of abstract conventions (compare with (ii)) and parole, the particular choices made by a speaker deploying a language (compare (iii)).

- The anthropological linguist Edward Sapir (1921, 1929) thought that human beings have a seemingly species-universal capacity to acquire and use languages (compare (i)), but his own interest was limited to the systematic structural features of particular languages (compare (ii)) and the psychological reality of linguistic units such as the phoneme (an aspect of (vi)), and the psychological effects of language and thought (an aspect of (v)).

- Bloomfield (1933) showed a strong interest in historical linguistic change (compare (iv)), distinguishing that sharply (much as Saussure did) from synchronic description of language structure ((ii) again) and language use (compare (iii)), arguing that the study of (iv) presupposed (vi).

- Bloomfield famously eschewed all dualistic mentalistic approaches to the study of language, but since he rejected them on materialist ontological grounds, his rejection of mentalism was not clearly a rejection of (vi) or (vii): his attempt to cast linguistics in terms of stimulus-response psychology indicates that he was sympathetic to the Weissian psychology of his time and accepted that linguistics might have psychological subject matter.

- Zellig Harris, on the other hand, showed little interest in the psychology of language, concentrating on mathematical techniques for tackling (ii).

Most saliently of all, Harris’s student Chomsky reacted strongly against indifference toward the mind, and insisted that the principal subject matter of linguistics was, and had to be, a narrow psychological version of (i), and an individual, non-social, and internalized conception of (ii).

In the course of advancing his view, Chomsky introduced a number of novel pairs of terms into the linguistics literature: competence vs. performance (Chomsky 1965); ‘I-language’ vs. ‘E-language’ (Chomsky 1986); the faculty of language in the narrow sense vs. the faculty of language in the broad sense (the ‘FLN’ and ‘FLB’ of Hauser et al. 2002). Because Chomsky’s terminological innovations have been adopted so widely in linguistics, the focus of sections 2.1–2.3 will be to examine the use of these expressions as they were introduced into the linguistics literature and consider their relation to (i)–(vii).

2.1 Competence and performance

Essentialists invariably distinguish between what Chomsky (1965) called competence and performance. Competence is what knowing a language confers: a tacit grasp of the structural properties of all the sentences of a language. Performance involves actual real-time use, and may diverge radically from the underlying competence, for at least two reasons: (a) an attempt to produce an utterance may be perturbed by non-linguistic factors like being distracted or interrupted, changing plans or losing attention, being drunk or having a brain injury; or (b) certain capacity limits of the mechanisms of perception or production may be overstepped.

Emergentists tend to feel that the competence/performance distinction sidelines language use too much. Bybee and McClelland put it this way:

One common view is that language has an essential and unique inner structure that conforms to a universal ideal, and what people say is a potentially imperfect reflection of this inner essence, muddied by performance factors. According to an opposing view…language use has a major impact on language structure. The experience that users have with language shapes cognitive representations, which are built up through the application of general principles of human cognition to linguistic input. The structure that appears to underlie language use reflects the operation of these principles as they shape how individual speakers and hearers represent form and meaning and adapt these forms and meanings as they speak. (Bybee and McClelland 2005: 382)

And Externalists are often concerned to describe and explain not only language structure, but also the workings of processing mechanisms and the etiology of performance errors.

However, every linguist accepts that some idealization away from the speech phenomena is necessary. Emergentists and Externalists are almost always happy to idealize away from sporadic speech errors. What they are not so keen to do is to idealize away from limitations on linguistic processing and the short-term memory on which it relies. Acceptance of a thoroughgoing competence/performance distinction thus tends to be a hallmark of Essentialist approaches, which take the nature of language to be entirely independent of other human cognitive processes (though of course capable of connecting to them).

The Essentialists’ practice of idealizing away from even psycholinguistically relevant factors like limits on memory and processing plays a significant role in various important debates within linguistics. Perhaps the most salient and famous is the issue of whether English is a finite-state language.

The claim that English is not accepted by any finite-state automaton can only be supported by showing that every grammar for English has center-embedding to an unbounded depth (see Levelt 2008: 20–23 for an exposition and proof of the relevant theorem, originally from Chomsky 1959). But even depth-3 center-embedding of clauses (a clause interrupting a clause that itself interrupts a clause) is in practice extraordinarily hard to process. Hardly anyone can readily understand even semantically plausible sentences like Vehicles that engineers who car companies trust build crash every day. And such sentences virtually never occur, even in writing. Karlsson (2007) undertakes an extensive examination of available textual material, and concludes that depth-3 center-embeddings are vanishingly rare, and no genuine depth-4 center-embedding has ever occurred at all in naturally composed text. He proposes that there is no reason to regard center-embedding as grammatical beyond depth 3 (and for spoken language, depth 2). Karlsson is proposing a grammar that stays close to what performance data can confirm; the standard Essentialist view is that we should project massively from what is observed, and say that depth-n center-embedding is fully grammatical for all n.
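
The formal point at stake can be illustrated with a toy recognizer. The sketch below makes strong simplifying assumptions: a center-embedded sentence is reduced to a schematic sequence of k subject nouns ("N") followed by k verbs ("V"), and "depth" is simply identified with k. With no bound on k the recognizer needs an unbounded counter; with a fixed bound it has only finitely many states, so the bounded pattern is finite-state.

```python
def accepts_nested(tokens, max_depth=None):
    """Recognize schematic strings N^k V^k with k >= 1.

    If max_depth is None, k is unbounded and the counter can grow
    without limit. If max_depth is an integer, the counter never
    exceeds it, so the recognizer has finitely many configurations.
    """
    pending = 0        # clauses opened by a noun, still awaiting a verb
    seen_verb = False  # once verbs start, no further nouns may appear
    for tok in tokens:
        if tok == "N":
            if seen_verb:
                return False
            pending += 1
            if max_depth is not None and pending > max_depth:
                return False
        elif tok == "V":
            if pending == 0:
                return False
            seen_verb = True
            pending -= 1
        else:
            return False
    return seen_verb and pending == 0


# Schematic rendering (an assumption of this sketch) of
# "Vehicles that engineers who car companies trust build crash":
# three nouns followed by three verbs.
schema = ["N", "N", "N", "V", "V", "V"]
unbounded_result = accepts_nested(schema)
bounded_result = accepts_nested(schema, max_depth=2)
```

On this schematic rendering, the unbounded recognizer accepts the example while the recognizer capped at depth 2 rejects it, which mirrors the difference between projecting to all n and stopping where performance data stop.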

2.2 ‘I-Language’ and ‘E-Language’

Chomsky (1986) introduced into the linguistics literature two technical notions of a language: ‘E-Language’ and ‘I-Language’. He deprecates the former as either undeserving of study or as a fictional entity, and promotes the latter as the only scientifically respectable object of study for a serious linguistics.

2.2.1 ‘E-language’

Chomsky’s notion ‘E-language’ is supposed to suggest by its initial ‘E’ both ‘extensional’ (concerned with which sentences happen to satisfy a definition of a language rather than with what the definition says) and ‘external’ (external to the mind, that is, non-mental). The dismissal of E-language as an object of study is aimed at critics of Essentialism—many but not all of those critics falling within our categories of Externalists and Emergentists.

Extensional. First, there is an attempt to impugn the extensional notion of a language that is found in two radically different strands of Externalist work. Some Externalist investigations are grounded in the details of attested utterances (as collected in corpora), external to human minds. Others, with mathematical or computational interests, sometimes idealize languages as extensionally definable objects (typically infinite sets of strings) with a certain structure, independently of whatever device might be employed to characterize them. A set of strings of words either is or is not regular (finite-state), either is or is not recursive (decidable), etc., independently of forms of grammar statement. Chomsky (1986) basically dismissed both corpus-based work and mathematical linguistics simply on the grounds that they employ an extensional conception of language, that is, a conception that removes the object of study from having an essential connection with the mental.

External. Second, a distinct meaning based on ‘external’ was folded into the neologism ‘E-language’ to suggest criticism of any view that conceives of a natural language as a public, intersubjectively accessible system used by a community of people (often millions of them spread across different countries). Here, the objection is that languages as thus conceived have no clear criteria of individuation in terms of necessary and sufficient conditions. On this conception, the subject matter of interest is a historico-geographical entity that changes as it is transmitted over generations, or over mountain ranges. Famously, for example, there is a gradual valley-to-valley change in the language spoken between southeastern France and northwestern Italy such that each valley’s speakers can understand the next. But the far northwesterners clearly speak French and the far southeasterners clearly speak Italian. It is the politically defined geographical border, not the intrinsic properties of the dialects, that would encourage viewing this continuum as two different languages.

Perhaps the most famous quotation by any linguist is standardly attributed to Max Weinreich (1945): ‘A shprakh iz a dialekt mit an armey un flot’ (‘A language is a dialect with an army and navy’; he actually credits the remark to an unnamed student). The implication is that E-languages are defined in terms of non-linguistic, non-essential properties. Essentialists object that a scientific linguistics cannot tolerate individuating French and Italian in a way that is subject to historical contingencies of wars and treaties (after all, the borders could have coincided with a different hill or valley had some battle had a different outcome).

Considerations of intelligibility fare no better. Mutual intelligibility between languages is not a transitive relation, and sometimes the intelligibility relation is not even symmetric (smaller, more isolated, or less prestigious groups often understand the dialects of larger, more central, or higher-prestige groups when the converse does not hold). So these sociological facts cannot individuate languages either.
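
The logical properties invoked here can be checked mechanically on a small example. The sketch below uses an invented chain of four valley dialects (the names and the intelligibility facts are hypothetical) in which each dialect is intelligible to speakers of its neighbors, plus one invented one-way case.

```python
from itertools import product


def is_symmetric(relation):
    """True if whenever a understands b, b also understands a."""
    return all((b, a) in relation for a, b in relation)


def is_transitive(relation):
    """True if a understands b and b understands c imply a understands c."""
    return all(
        (a, d) in relation
        for (a, b), (c, d) in product(relation, repeat=2)
        if b == c
    )


# Hypothetical dialect chain: every dialect is intelligible to its own
# speakers and to speakers of the adjacent valleys, in both directions.
valleys = ["v1", "v2", "v3", "v4"]
chain = {(v, v) for v in valleys}
for left, right in zip(valleys, valleys[1:]):
    chain |= {(left, right), (right, left)}

# Hypothetical one-way case: speakers of a small dialect understand a
# prestige dialect, but not conversely.
one_way = {("small", "small"), ("prestige", "prestige"), ("small", "prestige")}

chain_is_symmetric = is_symmetric(chain)
chain_is_transitive = is_transitive(chain)
one_way_is_symmetric = is_symmetric(one_way)
```

Because the chain relation is symmetric but not transitive, it does not partition the dialects into equivalence classes, which is why it cannot by itself sort them into distinct languages.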

Chomsky therefore concludes that languages cannot be defined or individuated extensionally or mind-externally, and hence the only scientifically interesting conception of a ‘language’ is the ‘I-language’ view (see for example Chomsky 1986: 25; 1992; 1995 and elsewhere). Chomsky says of E-languages that “all scientific approaches have simply abandoned these elements of what is called ‘language’ in common usage” (Chomsky 1988: 37); and “we can define E-language in one way or another or not at all, since the concept appears to play no role in the theory of language” (Chomsky 1986: 26; in saying that it appears to play no role in the theory of language, here he means that it plays no role in the theory he favours).

This conclusion may be bewildering to non-linguists as well as non-Essentialists. It is at odds with what a broad range of philosophers have tacitly assumed or explicitly claimed about language or languages: ‘[A language] is a practice in which people engage…it is constituted by rules which it is part of social custom to follow’ (Dummett 1986: 473–473); ‘Language is a set of rules existing at the level of common knowledge’ and these rules are ‘norms which govern intentional social behavior’ (Itkonen 1978: 122), and so on. Generally speaking, those philosophers influenced by Wittgenstein also take the view that a language is a social-historical entity. But the opposite view has become a part of the conceptual underpinning of linguistics for many Essentialists.

Failing to have precise individuation conditions is surely not a sufficient reason to deny that an entity can be studied scientifically. ‘Language’ as a count noun in the extensional and socio-historical sense is vague, but this need not be any greater obstacle to theorizing about languages than is the vagueness of other terms for historical entities without clear individuation conditions, like ‘species’ and ‘individual organism’ in biology.

At least some Emergentist linguists, and perhaps some Externalists, would be content to say that languages are collections of social conventions, publicly shared, and some philosophers would agree (see Millikan 2003, for example, and Chomsky 2003 for a reply). Lewis (1969) explicitly defends the view that language can be understood in terms of public communications, functioning to solve coordination problems within a group (although he acknowledges that the coordination could be between different temporal stages of one individual, so language use by an isolated person is also intelligible; see the appendix “Lewis’s Theory of Languages as Conventions” in the entry on idiolects, for further discussion of Lewis). What Chomsky calls E-languages, then, would be perfectly amenable to linguistic or philosophical study. Santana (2016) makes a similar argument in terms of scientific idealization. He argues that since all sciences idealize their targets, Chomsky needs to do more to show why idealizations concerning E-languages are illicit (see also Stainton 2014).

2.2.2 ‘I-language’

Chomsky (1986) introduced the neologism ‘I-language’ in part to disambiguate the word ‘grammar’. In earlier generative Essentialist literature, ‘grammar’ was (deliberately) ambiguous between (i) the linguist’s generative theory and (ii) what a speaker knows when they know a language. ‘I-language’ can be regarded as a replacement for Bever’s term ‘psychogrammar’ (see also George 1989): it denotes a mental or psychological entity (not a grammarian’s description of a language as externally manifested).

I-language is first discussed under the sub-heading of ‘internalized language’ to denote linguistic knowledge. Later discussion in Chomsky 1986 and 1995 makes it clear that the ‘I’ of ‘I-language’ is supposed to suggest at least three English words: ‘individual’, ‘internal’, and ‘intensional’. And Chomsky emphasizes that the neologism also implies a kind of realism about speakers’ knowledge of language.

Individual. A language is claimed to be strictly a property of individual human beings—not groups. The contrast is between the idiolect of a single individual, and a dialect or language of a geographical, social, historical, or political group. I-languages are properties of the minds of individuals who know them.

Internal. As generative Essentialists see it, your I-language is a state of your mind/brain. Meaning is internal—indeed, on Chomsky’s conception, an I-language

is a strictly internalist, individualist approach to language, analogous in this respect to studies of the visual system. If the cognitive system of Jones’s language faculty is in state L, we will say that Jones has the I-language L. (Chomsky 1995: 13)

And he clarifies the sense in which an I-language is internal by appealing to an analogy with the way the study of vision is internal:

The same considerations apply to the study of visual perception along lines pioneered by David Marr, which has been much discussed in this connection. This work is mostly concerned with operations carried out by the retina; loosely put, the mapping of retinal images to the visual cortex. Marr’s famous three levels of analysis—computational, algorithmic, and implementation—have to do with ways of construing such mappings. Again, the theory applies to a brain in a vat exactly as it does to a person seeing an object in motion. (Chomsky 1995: 52)

Thus, while the speaker’s I-language may be involved in performing operations over representations of distal stimuli (representations of other speakers’ utterances), I-languages can and should be studied in isolation from their external environments.

Although Chomsky sometimes refers to this narrow individuation of I-languages as ‘individual’, he clearly claims that I-languages are individuated in isolation from both speech communities and other aspects of the broadly conceived natural environment:

Suppose Jones is a member of some ordinary community, and J is indistinguishable from him except that his total experience derives from some virtual reality design; or let J be Jones’s Twin in a Twin-Earth scenario. They have had indistinguishable experiences and will behave the same way (in so far as behavior is predictable at all); they have the same internal states. Suppose that J replaces Jones in the community, unknown to anyone except the observing scientist. Unaware of any change, everyone will act as before, treating J as Jones; J too will continue as before. The scientist seeking the best theory of all of this will construct a narrow individualist account of Jones, J, and others in the community. The account omits nothing… (Chomsky 1995: 53–54)

This passage can also be seen as suggesting a radically intensionalist conception of language.

Intensional. The way in which I-languages are ‘intensional’ for Chomsky needs a little explication. The concept of intension is familiar in logic and semantics, where ‘intensional’ contrasts with ‘extensional’. The extension of a predicate like blue is simply the set of all blue objects; the intension is the function that picks out in a given world the blue objects contained therein. In a similar way, the extension of a set can be distinguished from an intensional description of the set in terms of a function: the set of integer squares is {1, 4, 9, 16, 25, 36, …}, and the intension could be given in terms of the one-place function f such that f(n) = n × n. One difference between the two accounts of squaring is that the intensional one could be applied to a different domain (any domain on which the ‘×’ operation is defined: on the rationals rather than the integers, for example, the extension of the identically defined function is a different and larger set containing infinitely many fractions).
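
The squaring example can be written out as a short sketch. The function name and the sample inputs are our own choices; the second definition (summing the first n odd numbers) is added only to show that two different recipes can determine the same extension on the positive integers.

```python
from fractions import Fraction


def square(n):
    """Intensional specification: f(n) = n * n, on any domain with '*'."""
    return n * n


def square_by_odd_sum(n):
    """A different recipe with the same values on positive integers:
    the sum of the first n odd numbers."""
    return sum(2 * k + 1 for k in range(n))


# A finite initial part of the extension over the positive integers.
integer_squares = {square(n) for n in range(1, 7)}

# The identically defined function applied to some rationals yields
# values that are not in the integer extension at all.
rational_inputs = [Fraction(1, 2), Fraction(2, 3), Fraction(3, 1)]
rational_squares = {square(q) for q in rational_inputs}

# Two distinct definitions, one and the same set of values.
same_extension = integer_squares == {square_by_odd_sum(n) for n in range(1, 7)}
```

Here the set is the extension and the function definition plays the role of the intension: changing the domain changes the set without changing the definition, and changing the definition need not change the set.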

In an analogous way, a language can be identified with the set of all and only its expressions (regardless of what sort of object an expression is: a word sequence, a tree structure, a complete derivation, or whatever), which is the extensional view; but it can also be identified intensionally by means of a recipe or formal specification of some kind—what linguists call a grammar. Ludlow (2011) considers the first I (individual) to be the weakest link and thus the most expendable. He argues in its stead for a concept of a “Ψ-language” which allows for the possibility of the I-language relating to external objects either constitutively or otherwise.

In natural language semantics, an intensional context is one where substitution of co-extensional terms fails to preserve truth value (Scott is Scott is true, and Scott is the author of Waverley is true, but the truth of George knows that Scott is Scott doesn’t guarantee the truth of George knows that Scott is the author of Waverley, so knows that establishes an intensional context).

Chomsky claims that the truth of an I-language attribution is not preserved by substituting terms that have the same extension. That is, even when two human beings do not differ at all on what expressions are grammatical, it may be false to say that they have the same I-language. Where H is a human being and L is a language (in the informal sense) and R is the relation of knowing (or having, or using) that holds between a human being and a language, Chomsky holds, in effect, that R establishes an intensional context in statements of the theory:

[F]or H to know L is for H to have a certain I-language. The statements of the grammar are statements of the theory of mind about the I-language, hence structures of the brain formulated at a certain level of abstraction from mechanisms. These structures are specific things in the world, with their properties… The I-language L may be the one used by a speaker but not the I-language L′ even if the two generate the same class of expressions (or other formal objects) … L′ may not even be a possible human I-language, one attainable by the language faculty. (Chomsky 1986: 23)

The idea is that two individuals can know (or have, or use) different I-languages that generate exactly the same strings of words, and even give them exactly the same structures. This situation forms the basis of Quine’s (1972) infamous critique of the psychological reality of generative grammars (see Johnson 2015 for a solution in terms of invariance of ‘behaviorally equivalent’ grammar formalisms, to use Quine’s terminology; see also Nefdt 2021 for a similar resolution in terms of structural realism in the philosophy of science).
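
The weaker part of this claim, that distinct formal specifications can determine one and the same set of strings, can be illustrated with a toy sketch in Python. Everything in it is an assumption made for illustration: the two-word vocabulary, the grammars GRAMMAR_A and GRAMMAR_B, and the helper strings_up_to are hypothetical and are drawn from none of the works cited. The sketch compares the two grammars only up to a length bound, and it makes no claim about which of them, if either, a speaker might have.

```python
from collections import deque

# Two hypothetical toy grammars for the strings "nice", "very nice", ...
# GRAMMAR_A is right-recursive; GRAMMAR_B is left-recursive.
GRAMMAR_A = {"A": [("nice",), ("very", "A")]}
GRAMMAR_B = {"A": [("nice",), ("V", "nice")],
             "V": [("very",), ("V", "very")]}


def strings_up_to(grammar, start, max_len):
    """Return every word string of at most max_len words derivable from start.

    grammar maps each nonterminal to a list of right-hand sides (tuples).
    Assumes no empty right-hand sides, so sentential forms never shrink
    and forms longer than max_len can safely be discarded.
    """
    seen = {(start,)}
    queue = deque([(start,)])
    results = set()
    while queue:
        form = queue.popleft()
        # Locate the leftmost nonterminal, if there is one.
        idx = next((i for i, sym in enumerate(form) if sym in grammar), None)
        if idx is None:
            results.add(" ".join(form))  # only words remain
            continue
        for rhs in grammar[form[idx]]:
            new_form = form[:idx] + rhs + form[idx + 1:]
            if len(new_form) <= max_len and new_form not in seen:
                seen.add(new_form)
                queue.append(new_form)
    return results


EXTENSION_A = strings_up_to(GRAMMAR_A, "A", 5)
EXTENSION_B = strings_up_to(GRAMMAR_B, "A", 5)
SAME_EXTENSION = EXTENSION_A == EXTENSION_B
```

The two specifications differ as objects (one is right-recursive, the other left-recursive), yet a comparison of their outputs up to the bound does not tell them apart. These toy grammars do assign different structures, so the sketch illustrates only sameness of strings, not the stronger case of sameness of structures. A bounded check is also the most that such a comparison can offer in general, since equivalence of arbitrary context-free grammars is undecidable.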

The generative Essentialist conception of an I-language is antithetical to Emergentist research programs. If the fundamental explanandum of scientific linguistics is how actual linguistic communication takes place, one must start by looking at both internal (psychological) and external (public) practices and conventions in virtue of which it occurs, and consider the effect of historical and geographic contingencies on the relevant underlying processes. That would not rule out ‘I-language’ as part of the explanans; but some Emergentists seem to be fictionalists about I-languages, in an analogous sense to the way that Chomsky is a fictionalist about E-languages. Emergentists do not see a child as learning a generative grammar, but as learning how to use a symbolic system for propositional communication. On this view grammars are mere artifacts that are developed by linguists to codify aspects of the relevant systems, and positing an I-language amounts to projecting the linguist’s codification illegitimately onto human minds (see, for example, Tomasello 2003).

The I-language concept brushes aside certain phenomena of interest to the Externalists, who hold that the forms of actually attested expressions (sentences, phrases, syllables, and systems of such units) are of interest for linguistics. For example, computational linguistics (work on speech recognition, machine translation, and natural language interfaces to databases) must rely on a conception of language as public and extensional; so must any work on the utterances of young children, or the effects of word frequency on vowel reduction, or misunderstandings caused by road sign wordings. At the very least, it might be said on behalf of this strain of Externalism (along the lines of Soames 1984) that linguistics will need careful work on languages as intersubjectively accessible systems before hypotheses about the I-language that purportedly produces them can be investigated.

It is a highly biased claim that the E-language concept “appears to play no role in the theory of language” (Chomsky 1986: 26). Indeed, the terminological contrast seems to have been invented not to clarify a distinction between concepts but to nudge linguistic research in a particular direction.

2.3 The faculty of language in narrow and broad senses

In Hauser et al. (2002) (henceforth HCF) a further pair of contrasting terms is introduced. They draw a distinction quite separate from the competence/performance and ‘I-language’/‘E-language’ distinctions: the “language faculty in the narrow sense” (FLN) is distinguished from the “language faculty in the broad sense” (FLB). According to HCF, FLB “excludes other organism-internal systems that are necessary but not sufficient for language (e.g., memory, respiration, digestion, circulation, etc.)” but includes whatever is involved in language, and FLN is some limited part of FLB (p. 1571). This is all fairly vague, but it is clear that FLN and FLB are both internal rather than external, and individual rather than social.

The FLN/FLB distinction apparently aims to address the uniqueness of one component of the human capacity for language rather than (say) the content of human grammars. HCF say (p. 1573) that “Only FLN is uniquely human”; they “hypothesize that most, if not all, of FLB is based on mechanisms shared with nonhuman animals”; and they say:

[T]he computations underlying FLN may be quite limited. In fact, we propose in this hypothesis that FLN comprises only the core computational mechanisms of recursion as they appear in narrow syntax and the mappings to the interfaces. (ibid.)

The components of FLB that HCF hypothesize are not part of FLN are the “sensory-motor” and “conceptual-intentional” systems. The study of the conceptual-intentional system includes investigations of things like the theory of mind; referential vocal signals; whether imitation is goal directed; and the field of pragmatics. The study of the sensory-motor system, by contrast, includes “vocal tract length and formant dispersion in birds and primates”; learning of songs by songbirds; analyses of vocal dialects in whales and spontaneous imitation of artificially created sounds in dolphins; “primate vocal production, including the role of mandibular oscillations”; and “[c]ross-modal perception and sign language in humans versus unimodal communication in animals”.

It is presented as an empirical hypothesis that a core property of the FLN is “recursion”:

All approaches agree that a core property of FLN is recursion, attributed to narrow syntax…FLN takes a finite set of elements and yields a potentially infinite array of discrete expressions. This capacity of FLN yields discrete infinity (a property that also characterizes the natural numbers). (HCF, p. 1571)

HCF leave open exactly what the FLN includes in addition to recursion. It is not ruled out that the FLN incorporates substantive universals as well as the formal property of “recursion”. But whatever “recursion” is in this context, it is apparently not domain-specific in the sense of earlier discussions by generative Essentialists, because it is not unique to human natural language or defined over specifically linguistic inputs and outputs: it is the basis for humans’ grasp of the formal and arguably non-natural language of arithmetic (counting, and the successor function), and perhaps also navigation and social relations. It might be more appropriate to say that HCF identify recursion as a cognitive universal, not a linguistic one. And in that case it is difficult to see how the so-called ‘language faculty’ deserves that name: it is more like a faculty for cognition and communication.

This abandonment of linguistic domain-specificity contrasts very sharply with the picture that was such a prominent characteristic of the earlier work on linguistic nativism, popularized in different ways by Fodor (1983), Barkow et al. (1992), and Pinker (1994). And yet the HCF discussion of FLN seems to incline to the view that human language capacities have a unique human (though not uniquely linguistic) essence.

The FLN/FLB distinction provides earlier generative Essentialism with an answer (at least in part) to the question of what the singularity of the human language faculty consists in, and it does so in a way that subsumes many of the empirical discoveries of paleoanthropology, primatology, and ethnography that have been highly influential in Emergentist approaches as well as neo-Darwinian Essentialist approaches. A neo-Darwinian Essentialist like Pinker will accept that the language faculty involves recursion, but will also hold (with Emergentists) that human language capacities originated, via natural selection, for the purpose of linguistic communication.

Thus, over the years, those Essentialists who follow Chomsky closely have changed the term they use for their core subject matter from ‘linguistic competence’ to ‘I-language’ to ‘FLN’, and the concepts expressed by these terms are all slightly different. In particular, what they are counterposed to differs in each case.

The challenge for the generative Essentialist adopting the FLN/FLB distinction as characterized by HCF is to identify empirical data that can support the hypothesis that the FLN “yields discrete infinity”. That will mean answering the question: discrete infinity of what? HCF write that FLN “takes a finite set of elements and yields a potentially infinite array of discrete expressions” (p. 1571), which makes it clear that there must be a recursive procedure in the mathematical sense, perhaps putting atomic elements such as words together to make internally complex elements like sentences (“array” should probably be understood as a misnomer for ‘set’). But then they say, somewhat mystifyingly:

Each of these discrete expressions is then passed to the sensory-motor and conceptual-intentional systems, which process and elaborate this information in the use of language. Each expression is, in this sense, a pairing of sound and meaning. (HCF, p. 1571)

But the sensory-motor and conceptual-intentional systems are concrete parts of the organism: muscles and nerves and articulatory organs and perceptual channels and neuronal activity. How can each one of a “potentially infinite array” be “passed to” such concrete systems without it taking a potentially infinite amount of time? HCF may mean that for any one of the expressions that FLN defines as well-formed (by generating it) there is a possibility of its being used as the basis for a pairing of sound and meaning. This would be closer to the classical generative Essentialist view that the grammar generates an infinite set of structural descriptions; but it is not what HCF say.
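
The reading just suggested can be made concrete with a small Python sketch. It is an illustration under stated assumptions, not HCF's proposal: the five-word LEXICON, the function embed, and the generator expressions are all hypothetical. The point is only that a finite recursive specification can determine an unbounded set while any particular member is produced in finitely many steps, so no completed infinite collection ever has to be handed to anything.

```python
from itertools import count, islice

# Hypothetical finite lexicon; every expression below uses only these words.
LEXICON = ("Kim", "thinks", "that", "Lee", "left")


def embed(depth):
    """Build one sentence with the given number of clausal embeddings."""
    if depth == 0:
        return "Lee left"  # base case
    # Recursive step: embed a sentence under "Kim thinks that".
    return "Kim thinks that " + embed(depth - 1)


def expressions():
    """Lazily yield the sentences one at a time, with no upper bound."""
    for depth in count(0):
        yield embed(depth)


# Only the expressions actually requested are ever constructed.
first_three = list(islice(expressions(), 3))
```

Here first_three holds just three sentences, although the generator itself never runs out. On this picture it is individual expressions, each of finite length, that could be passed on for use, which is the sense in which the set is only "potentially" infinite.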

At root, HCF is a polemical work intended to identify the view it promotes as valuable and all other approaches to linguistics as otiose:

In the varieties of modern linguistics that concern us here, the term “language” is used quite differently to refer to an internal component of the mind/brain (sometimes called internal language or I-language).… However, this biologically and individually grounded usage still leaves much open to interpretation (and misunderstanding). For example, a neuroscientist might ask: What components of the human nervous system are recruited in the use of language in its broadest sense? Because any aspect of cognition appears to be, at least in principle, accessible to language, the broadest answer to this question is, probably, “most of it.” Even aspects of emotion or cognition not readily verbalized may be influenced by linguistically based thought processes. Thus, this conception is too broad to be of much use. (HCF, p. 1570)

It is hard to see this as anything other than a claim that approaches to linguistics focusing on anything that could fall under the label ‘E-language’ are to be dismissed as useless.

Some Externalists and Emergentists actually reject the idea that the human capacity for language yields “a potentially infinite array of expressions”. It is often pointed out by empirically inclined computational linguists that in practice there will only ever be a finite number of sentences to be dealt with (though the people saying this may underestimate the sheer vastness of the finite set involved). And naturally, for those who do not believe there are generative grammars in speakers’ heads at all, it holds a fortiori that speakers do not have grammars in their heads generating infinite languages (see Nefdt 2019c for a scientific modeling perspective on the infinity postulate). Externalists and Emergentists tend to hold that the “discrete infinity” that HCF posit is more plausibly a property of the generative Essentialists’ model of linguistic competence, I-language, or FLN, than a part of the human mind/brain. This does not mean that non-Essentialists deny that actual language use is creative, or (of course) that they think there is a longest sentence of English. But they may reject the link between linguistic productivity or creativity and the mathematical notion of recursion (see Pullum and Scholz 2010).

HCF’s remarks about how FLN “yields” or “generates” a specific “array” assume that languages are clearly and sharply individuated by their generators. They appear to be committed to the view that there is a fact of the matter about exactly which generator is in a given speaker’s head. Emergentists tend not to individuate languages in this way, and may reject generative grammars entirely as inappropriately or unacceptably ‘formalist’. They are content with the notion that the common-sense concept of a language is vague, and it is not the job of linguistic theory to explain what a language is, any more than it is the job of physicists to explain what material is, or of biologists to explain what life is. Emergentists, in particular, are interested not so much in identifying generators, or individuating languages, but in exploring the component capacities that facilitate linguistic communication, and finding out how they interact.

Similarly, Externalists are interested in the linguistic structure of expressions, but have little use for the idea of a discrete infinity of them, a view that is not, and cannot be, empirically supported, unless one thinks of simplicity and elegance of theory as empirical matters. They focus on the outward manifestations of language, not on a set of expressions regarded as a whole language, at least not in any way that would give a language a definite cardinality. Zellig Harris, an archetypal Externalist, is explicit that the reason for not regarding the set of utterances as finite concerns the elegance of the resulting grammar: “If we were to insist on a finite language, we would have to include in our grammar several highly arbitrary and numerical conditions” (Harris 1957: 208). Infinitude, on his view, is an unimportant side consequence of setting up a sentence-generating grammar in an uncluttered and maximally elegant way, not a discovered property of languages (see Pullum and Scholz 2010 for further discussion).
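
Harris's observation about "arbitrary and numerical conditions" can be illustrated with a minimal Python sketch. It uses regular expressions as a stand-in for a grammar, which is a simplification and not Harris's own formalism. The patterns, the helper accepts, and above all the cap MAX_VERY are hypothetical choices made for illustration.

```python
import re

# Unbounded rule: any number of "very" may precede "nice".
UNBOUNDED = re.compile(r"(?:very )*nice")

# Bounded rule: the same pattern plus a numerical cap. The value 3 is
# arbitrary (a hypothetical choice); nothing in the data singles it out.
MAX_VERY = 3
BOUNDED = re.compile(r"(?:very ){0,%d}nice" % MAX_VERY)


def accepts(pattern, sentence):
    """Return True if the whole sentence matches the pattern."""
    return pattern.fullmatch(sentence) is not None


three = "very " * 3 + "nice"
four = "very " * 4 + "nice"
unbounded_takes_four = accepts(UNBOUNDED, four)  # accepted
bounded_takes_three = accepts(BOUNDED, three)    # accepted
bounded_takes_four = accepts(BOUNDED, four)      # rejected by the cap alone
```

The unbounded rule is the simpler statement. The bounded one has to stipulate a number, and choosing 3 rather than 4 or 40 is a decision about the description, which is the sense in which infinitude here falls out of descriptive economy and is not something observed.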

2.4 Linguistic Ontology

Not all Essentialists agree that linguistics studies aspects of what is in the mind or aspects of what is human. There are some who do not see language as either mental or human, and certainly do not regard linguists as working on a problem within cognitive psychology or neurophysiology. The debate on the ontology of language has seen three major options emerging in the literature. Besides the mentalism of Chomskyan linguistics, Katz (1981), Katz and Postal (1991), and Postal (2003) proffered a platonistic alternative, and finally nominalism was proposed by Devitt (2006).